Today's Overview

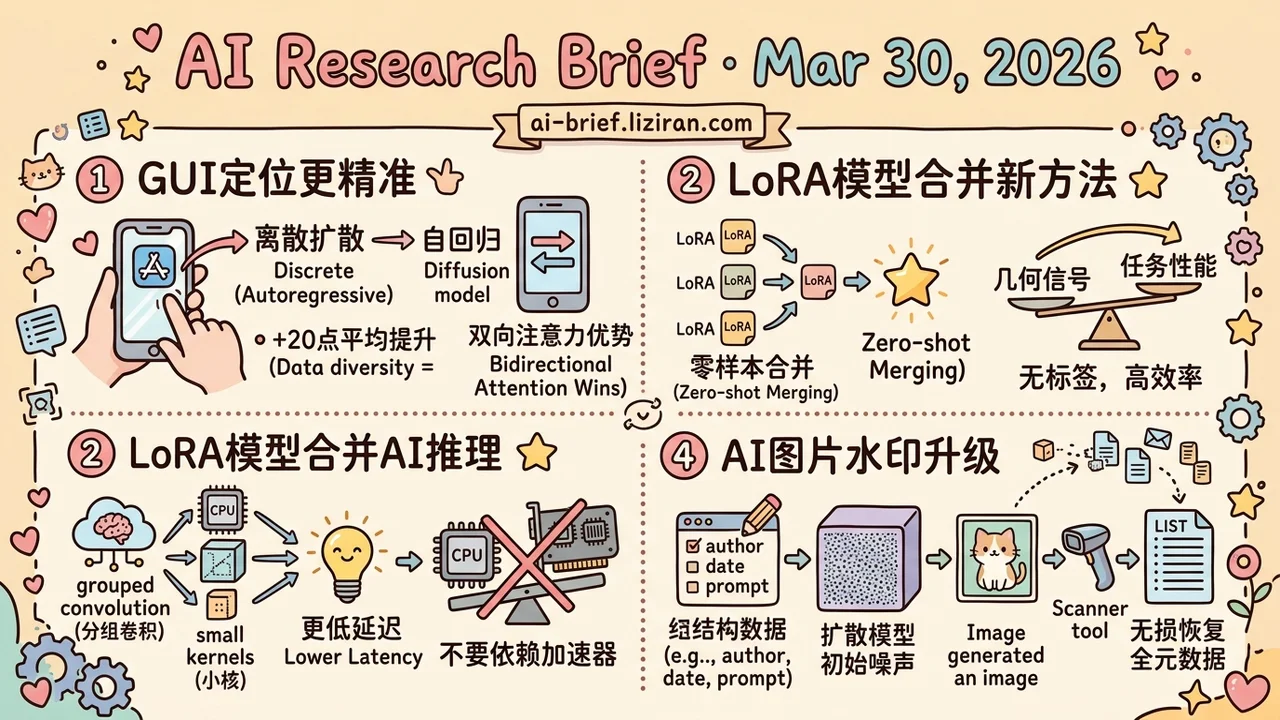

- Discrete diffusion VLMs are validated for GUI grounding for the first time. Bidirectional attention shows structural advantages on spatial tasks. Broadening training-data diversity alone yields a 20-point average gain. CVPR accepted.

- Null-space compression in LoRA's down-projection matrix correlates with task performance and works directly as a merging-weight signal. Label-free, task-agnostic, SOTA on 20 heterogeneous vision tasks.

- Vision backbone efficiency research almost universally assumes high-parallelism hardware. CPUBone targets edge devices with no AI accelerator. On CPUs, fewer MACs do not mean lower latency.

- AI watermarking moves from threshold detection to precise information recovery. Structured data embedded in a diffusion model's initial noise can be losslessly recovered as full generation metadata, with zero quality impact.

Featured

01 Diffusion Models Challenge Autoregressive Dominance in GUI Grounding

GUI grounding has defaulted to autoregressive VLMs, and few have seriously tested whether that's optimal. This CVPR paper adapts a discrete diffusion VLM to the task, betting that bidirectional attention has structural advantages over left-to-right generation for spatial localization.

The core contribution is a hybrid masking strategy that combines linear and deterministic masks to capture bounding-box hierarchy; it improves grounding accuracy by up to 6.1 points over pure linear masking. Across four datasets spanning web, desktop, and mobile, the diffusion model already trades blows with autoregressive models despite limited pretraining data. Expanding training data to cover more GUI domains cut latency by ~1.3 seconds and boosted accuracy by 20 points on average. Set against the 6.1-point masking gain, that suggests data diversity matters far more than architecture tricks for GUI generalization.
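The paper's exact masking rule isn't given here, so what follows is only a minimal sketch under loudly stated assumptions: a box is four quantized coordinate tokens `[x1, y1, x2, y2]`, the linear mask hides each token with probability `t ~ U(0, 1)`, and the deterministic mask encodes a *hypothetical* hierarchy (keep the top-left corner, mask the extent). `MASK`, `hybrid_mask`, and `p_det` are illustrative names, not the authors' API.

```python
import numpy as np

MASK = -1  # hypothetical mask-token id


def linear_mask(tokens, rng):
    """Linear schedule: draw t ~ U(0, 1), then mask each token independently with probability t."""
    tokens = np.asarray(tokens)
    t = rng.uniform(0.0, 1.0)
    keep = rng.uniform(size=tokens.size) >= t
    return np.where(keep, tokens, MASK)


def deterministic_mask(tokens):
    """HYPOTHETICAL hierarchy (an assumption, not the paper's rule):
    keep the top-left corner (x1, y1) and always mask the extent (x2, y2)."""
    out = np.array(tokens)  # np.array copies, so the input is not mutated
    out[2:] = MASK
    return out


def hybrid_mask(tokens, rng, p_det=0.3):
    """With probability p_det apply the structured mask, otherwise the random linear one."""
    if rng.uniform() < p_det:
        return deterministic_mask(tokens)
    return linear_mask(tokens, rng)


rng = np.random.default_rng(0)
box = np.array([120, 48, 310, 96])  # made-up quantized coordinates
print(hybrid_mask(box, rng))
```

Mixing the two gives the model both random-ratio training signal and a fixed "predict the extent from the anchor" task; the real split between them is a tunable the paper would define.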

Ablations reveal a practical constraint: more diffusion steps improve accuracy, but latency scales with them and accuracy saturates past a certain step count. A solid starting point. Generalization to complex multi-step GUI operations still needs validation.
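To see why latency tracks the step count, here is a sketch of confidence-based parallel unmasking, a common sampler for masked discrete diffusion models. We assume, without confirmation from the paper, that its decoder works similarly. Each step is one bidirectional forward pass that commits the most confident still-masked tokens, so a T-step decode costs T passes. `DummyDenoiser` is a hypothetical stand-in for the VLM that returns random logits.

```python
import math

import numpy as np

MASK = -1  # hypothetical mask-token id


class DummyDenoiser:
    """Stand-in for the diffusion VLM: random logits, plus a counter of forward passes."""

    def __init__(self, vocab_size, seed=0):
        self.vocab_size = vocab_size
        self.rng = np.random.default_rng(seed)
        self.calls = 0

    def __call__(self, tokens):
        self.calls += 1
        return self.rng.normal(size=(len(tokens), self.vocab_size))


def decode(model, length, steps):
    """Confidence-based parallel unmasking; every step is one full forward pass."""
    tokens = np.full(length, MASK)
    per_step = math.ceil(length / steps)
    for _ in range(steps):
        masked = np.flatnonzero(tokens == MASK)
        if masked.size == 0:
            break
        logits = model(tokens)
        # Softmax over the vocabulary, numerically stabilized.
        probs = np.exp(logits - logits.max(axis=1, keepdims=True))
        probs /= probs.sum(axis=1, keepdims=True)
        pred = probs.argmax(axis=1)
        conf = probs.max(axis=1)
        # Commit the most confident masked positions; the rest stay masked for later steps.
        chosen = masked[np.argsort(-conf[masked])[:per_step]]
        tokens[chosen] = pred[chosen]
    return tokens


model = DummyDenoiser(vocab_size=1000)
print(decode(model, length=8, steps=4), model.calls)
```

Fewer steps means fewer passes but more tokens committed per pass with less settled context, which is plausibly where the accuracy cost comes from; past some step count each extra pass adds latency without much to fix.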

Key takeaways:
- Discrete diffusion models validated for GUI grounding for the first time; bidirectional attention shows structural promise on spatial tasks.
- Hybrid masking strategy yields up to 6.1-point improvement; training data diversity drives a 20-point average gain.
- Diffusion steps vs. latency is the deployment bottleneck, with accuracy saturating past a threshold.

Source: Towards GUI Agents: Vision-Language Diffusion Models for GUI Grounding

02 Null-Space Compression Predicts LoRA Merging Quality

During LoRA fine-tuning, the null space of the down-projection matrix A gets systematically compressed. NSC (Null-Space Compression) shows that this geometric signal correlates with task performance and can directly determine merging weights. No labels, no inference required.

This solves a real problem. Existing LoRA merging methods mostly rely on entropy-based proxy signals that only work for classification. Hit a regression or generation task and they break. NSC looks only at adapter geometry, making it inherently task-agnostic. It reaches SOTA on 20 heterogeneous vision tasks and also outperforms baselines on NLI and VQA. CVPR accepted.
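The merge step can be sketched as follows, assuming each adapter contributes ΔW_i = B_i A_i and per-adapter scores are turned into weights with a softmax. The paper's NSC formula is not reproduced here: `hypothetical_score` is a swapped-in proxy (how concentrated A's singular-value spectrum is), used only to show that the weights come from adapter geometry alone, with no labels or inference. `merge_loras` and `temperature` are illustrative names.

```python
import numpy as np


def hypothetical_score(A, k=None):
    """HYPOTHETICAL stand-in for the NSC signal (not the paper's formula):
    fraction of A's spectral energy in its top-k singular directions.
    A more concentrated spectrum is read here as 'more compressed'."""
    s = np.linalg.svd(A, compute_uv=False)
    k = k or max(1, len(s) // 2)
    return float((s[:k] ** 2).sum() / (s ** 2).sum())


def merge_loras(adapters, scores, temperature=0.1):
    """Weighted merge: delta_W = sum_i w_i * (B_i @ A_i), with w = softmax(scores / temperature).
    adapters: list of (B, A) with B of shape (d_out, r) and A of shape (r, d_in)."""
    z = np.asarray(scores, dtype=float) / temperature
    w = np.exp(z - z.max())
    w /= w.sum()
    delta = sum(wi * (B @ A) for wi, (B, A) in zip(w, adapters))
    return delta, w


rng = np.random.default_rng(0)
adapters = [(rng.normal(size=(12, 4)), rng.normal(size=(4, 16))) for _ in range(3)]
scores = [hypothetical_score(A) for _, A in adapters]  # weights only: no labels, no forward passes
delta_W, weights = merge_loras(adapters, scores)
print(weights)
```

The merged `delta_W` is added to the frozen base weight as a single update; swapping in the paper's actual null-space score would change only the scoring function.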

Key takeaways:
- Null-space compression correlates with task performance and serves as a label-free LoRA merging signal.
- Task-agnostic by design: works across classification, regression, and generation.
- Worth attention if you merge heterogeneous LoRAs in production.

Source: Label-Free Cross-Task LoRA Merging with Null-Space Compression

03 Efficiency Research Ignores CPUs. CPUBone Doesn't.

Industrial controllers, edge gateways, low-cost servers: plenty of real deployments have no AI accelerator, so inference runs on CPUs. Yet vision backbones are almost never designed for these devices. Efficiency research targets high-parallelism hardware, and even phones and embedded AI modules fall into that category.

CPUBone addresses this gap. It uses grouped convolutions and small kernels to reduce MACs while keeping MACpS (MACs executed per second, i.e., achieved throughput) high. On CPUs, fewer computations don't automatically mean lower latency: latency is roughly MACs divided by MACpS, so an operator that saves MACs but underutilizes the hardware can run slower. CPUBone achieves the best speed-accuracy trade-off across multiple CPU devices, and its results transfer to detection and segmentation.
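A back-of-the-envelope model makes the MACs-versus-latency point concrete: latency ≈ MACs / MACpS. The sketch below counts MACs for a 56×56, 128-channel layer and compares a depthwise-separable block with a grouped 3×3 conv. The MACpS figures are hypothetical placeholders chosen to illustrate poor CPU utilization for depthwise ops; they are not measurements from CPUBone, and the layer shapes are not CPUBone's.

```python
# HYPOTHETICAL effective throughputs (MACs per second); real values depend on the CPU and kernels.
MACPS_SEPARABLE = 10e9  # depthwise-heavy block: low arithmetic intensity, poor utilization
MACPS_GROUPED = 50e9    # grouped 3x3 conv: denser compute, better utilization


def conv_macs(h, w, c_in, c_out, k, groups=1):
    """MACs of a stride-1 'same' conv: each of h*w*c_out outputs sums over (c_in/groups)*k*k inputs."""
    assert c_in % groups == 0 and c_out % groups == 0
    return h * w * c_out * (c_in // groups) * k * k


def latency_ms(macs, macps):
    """First-order model: latency = work / achieved throughput."""
    return 1e3 * macs / macps


H = W = 56
C = 128
# Depthwise 3x3 (groups = C) followed by a pointwise 1x1.
separable = conv_macs(H, W, C, C, 3, groups=C) + conv_macs(H, W, C, C, 1)
# Grouped 3x3 with 4 groups.
grouped = conv_macs(H, W, C, C, 3, groups=4)

print(f"separable: {separable / 1e6:.1f} MMACs, {latency_ms(separable, MACPS_SEPARABLE):.2f} ms")
print(f"grouped:   {grouped / 1e6:.1f} MMACs, {latency_ms(grouped, MACPS_GROUPED):.2f} ms")
```

With these assumed throughputs, the separable block does less than half the MACs (55.0M vs 115.6M) yet takes longer (about 5.50 ms vs 2.31 ms). The exact crossover depends entirely on the MACpS each operator actually achieves on a given CPU.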

Key takeaways:
- Vision backbone efficiency research almost universally assumes high-parallelism hardware; CPU inference is systematically overlooked.
- On CPUs, reducing MACs ≠ reducing latency. Hardware utilization (MACpS) is the real optimization target.
- Teams deploying on devices without AI accelerators should look at this design direction.

Source: CPUBone: Efficient Vision Backbone Design for Devices with Low Parallelization Capabilities

04 AI Watermarks: From Detection to Communication

Reframing AI watermarking as a communication channel is an elegant shift. Current schemes amount to fuzzy matching: score an image, threshold it, declare "watermarked" or not. They can't tell you anything more.

Gaussian Shannon models the diffusion generation process as a Shannon-style noisy channel. It embeds structured information in the initial Gaussian noise and uses error-correcting codes plus majority voting to recover every bit at the receiving end. Instead of just answering "is this watermarked?", it losslessly recovers full metadata: who generated it, when, with what prompt. No model fine-tuning, no quality loss. Bit-level accuracy holds across three Stable Diffusion variants and seven perturbation types. CVPR accepted.
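The embed-and-recover loop can be sketched in NumPy under simplifying assumptions that are ours, not the paper's. The payload rides in the signs of the initial noise. A repetition code stands in for the paper's error-correcting code (its decoder is exactly majority voting). A keyed pseudorandom stream scrambles the bits so the signs stay uniform and the noise remains standard Gaussian. The diffusion-plus-recovery round trip is simulated as additive Gaussian noise. `embed`, `extract`, and the 8-byte payload are hypothetical.

```python
import numpy as np


def bytes_to_bits(data):
    return np.unpackbits(np.frombuffer(data, dtype=np.uint8))


def bits_to_bytes(bits):
    return np.packbits(bits).tobytes()


def embed(payload, shape, key, seed=None):
    """Sample initial noise whose signs carry the repetition-coded, key-scrambled payload."""
    bits = bytes_to_bits(payload)
    n = int(np.prod(shape))
    assert n % bits.size == 0, "sketch assumes the latent size is a multiple of the payload bits"
    coded = np.repeat(bits, n // bits.size)  # repetition code: each bit copied n/len(bits) times
    stream = np.random.default_rng(key).integers(0, 2, size=n, dtype=np.uint8)
    signs = coded ^ stream  # scrambling makes signs ~uniform, so z stays ~N(0, 1)
    magnitudes = np.abs(np.random.default_rng(seed).standard_normal(n))  # half-normal
    return (magnitudes * np.where(signs == 1, 1.0, -1.0)).reshape(shape)


def extract(z_hat, n_bits, key):
    """Read signs, undo the scrambling, and majority-vote each bit's copies."""
    n = z_hat.size
    reps = n // n_bits
    signs = (z_hat.ravel() > 0).astype(np.uint8)
    stream = np.random.default_rng(key).integers(0, 2, size=n, dtype=np.uint8)
    votes = (signs ^ stream).reshape(n_bits, reps).sum(axis=1)
    return (votes * 2 > reps).astype(np.uint8)


payload = b"uid=4242"  # hypothetical 64-bit payload (e.g. an ID pointing to a metadata record)
z = embed(payload, (4, 64, 64), key=7, seed=1)
# Simulated channel: perturbations plus imperfect noise recovery, modeled as additive noise.
z_hat = z + np.random.default_rng(2).normal(0.0, 0.5, z.shape)
print(bits_to_bytes(extract(z_hat, 8 * len(payload), key=7)))
```

In a real pipeline the receiver must first estimate the initial noise from the image, typically by inverting the sampler (DDIM inversion is the usual choice in noise-space watermarking); that estimation error is what the code and the voting have to absorb.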

Key takeaways:
- Upgrades watermarking from threshold detection to precise information recovery; full generation metadata can be extracted losslessly.
- No model fine-tuning required. Embedding happens in initial noise with zero quality impact.
- As regulation moves from "was this AI-generated?" to "who generated this and how?", precise provenance becomes a hard requirement.

Source: Gaussian Shannon: High-Precision Diffusion Model Watermarking Based on Communication

Also Worth Noting

Today's Observation

Today's paper list traces a complete accountability chain for generative models. Pre-generation: concept erasure removes, during training, the model's ability to generate content it shouldn't. In-generation: watermark embedding writes recoverable identity information into the output. Supply chain: model identity verification confirms the API actually runs the claimed model. Post-distribution: misinformation detection judges authenticity after content circulates.

Four papers from different teams with different methods, but together they cover the full lifecycle from training to deployment to content distribution. All four were accepted at CVPR, and that is no coincidence: the vision generation community treats "who generated what, and can you prove it?" as a first-class direction on par with generation quality. If your team deploys generative models, inventory these four links now: pre-generation control, in-generation tracking, supply chain verification, post-distribution audit. Check which have usable open-source implementations and which you'd need to build. The accountability toolchain is moving from papers to engineering components. Early investment beats scrambling when regulators come knocking.