Today's Overview

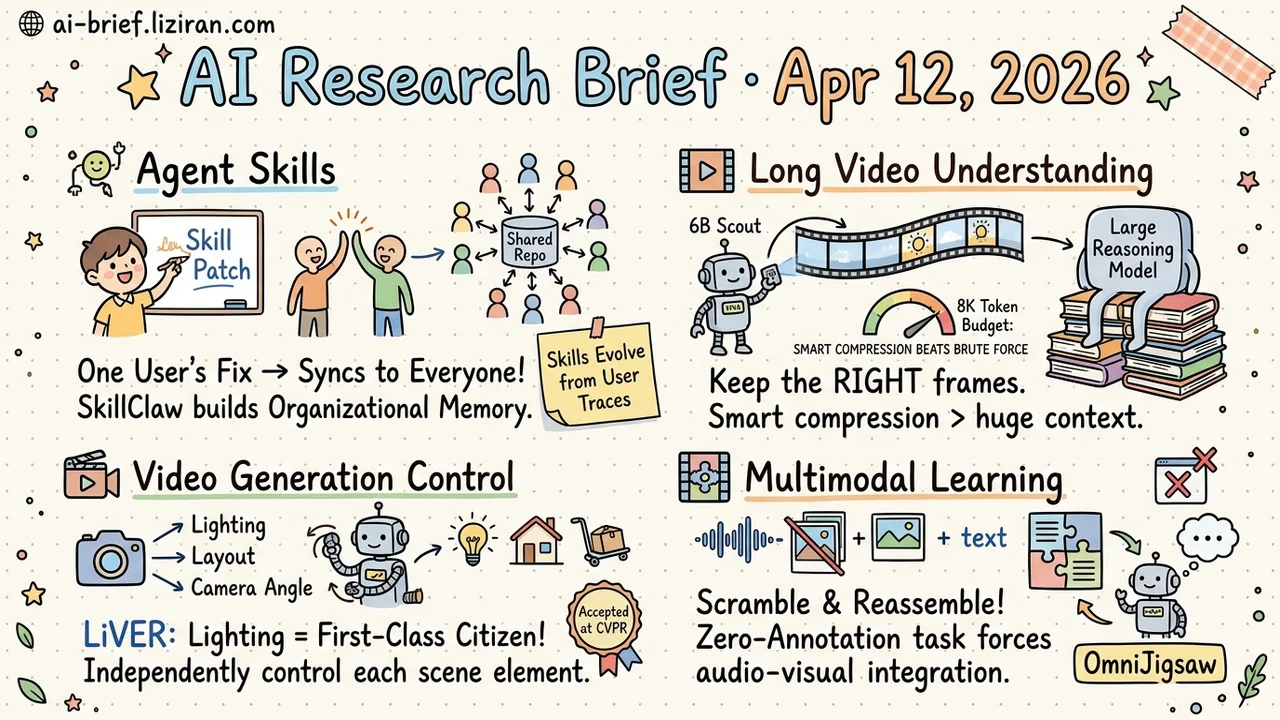

- Agent Skills Should Self-Evolve From User Populations. SkillClaw turns multi-user interaction traces into skill evolution signals. One user's correction auto-syncs to everyone, giving agent systems organizational memory.

- Smart Compression Beats Brute-Force Context Windows. Tempo uses a 6B model to select key frames per query. Under an 8K visual token budget, it outperforms GPT-4o and Gemini 1.5 Pro on LVBench.

- Lighting Becomes a First-Class Citizen in Video Generation. LiVER decouples lighting, layout, and camera motion through a physics renderer. Accepted at CVPR, targeting professional production workflows.

- Scramble Audio and Video, Let the Model Reassemble. OmniJigsaw uses zero-annotation temporal reordering as a proxy task, forcing models to integrate audiovisual signals. Validated across 15 benchmarks.

Featured

01 Agent: One User's Fix Should Immunize Every User

Agent skills are static after deployment. You spend half an hour teaching an agent to call an API correctly; your colleague hits the same issue and has to teach it again. Experience stays trapped in individual sessions and never becomes system capability. This is the biggest hidden waste in multi-user agent products.

SkillClaw treats all users' interaction traces as evolution signals. An autonomous evolver continuously aggregates multi-user data, identifies recurring failure patterns and successful paths, then converts them into skill patches or extensions. Updated skills live in a shared repository that auto-syncs to all users. One person's lesson becomes everyone's immunity — organizational memory for agent systems.
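The aggregation step can be pictured with a minimal sketch. Everything here is an assumption made for illustration: the `Trace` fields, the `recurring_failures` helper, and the "failed for at least two distinct users" rule are hypothetical and are not taken from SkillClaw itself.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Trace:
    """One interaction record. All fields are hypothetical, for illustration."""
    user_id: str
    skill: str
    outcome: str          # "ok" or "fail"
    error_signature: str  # normalized failure description; "" on success


def recurring_failures(traces, min_users=2):
    """Return (skill, error_signature) pairs that failed for >= min_users distinct users."""
    users_by_failure = defaultdict(set)
    for t in traces:
        if t.outcome == "fail":
            users_by_failure[(t.skill, t.error_signature)].add(t.user_id)
    # A failure seen by several independent users is a candidate for a shared skill patch.
    return sorted(
        key for key, users in users_by_failure.items() if len(users) >= min_users
    )


traces = [
    Trace("alice", "call_billing_api", "fail", "missing auth header"),
    Trace("bob", "call_billing_api", "fail", "missing auth header"),
    Trace("bob", "summarize_doc", "fail", "timeout"),
    Trace("carol", "call_billing_api", "ok", ""),
]
candidates = recurring_failures(traces)
print(candidates)  # [('call_billing_api', 'missing auth header')]
```

Counting distinct users rather than raw failures keeps one noisy session from triggering a patch that gets pushed to everyone.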

In experiments on WildClawBench, limited user interaction feedback significantly improved Qwen3-Max's performance in real-world agent tasks. The paper's 204 Hugging Face upvotes suggest the pain point resonates with people building agent products.

Key takeaways:

- Static skills are the biggest hidden waste in multi-user agent systems. Every user independently re-discovers the same failures.

- SkillClaw drives automatic skill updates from cross-user trajectories. One fix benefits everyone.

- Teams building multi-user agent products should consider an organizational memory layer. Experience reuse delivers more value than point optimizations.

Source: SkillClaw: Let Skills Evolve Collectively with Agentic Evolver

02 Multimodal: A 6B Scout Helps LLMs Handle Hour-Long Video

Using a 6B model to help a larger model "watch" video sounds like the wrong tool. Tempo's logic is clear: for hour-long videos, the bottleneck isn't model size. Too many irrelevant visual tokens flood the context.

A small vision-language model serves as a scout. Given the user's specific question, it judges which frames matter and which are background noise, then compresses each frame to between 0.5 and 16 tokens before handing off to the large model. The core mechanism is Adaptive Token Allocation (ATA): the small model's zero-shot relevance judgments drive per-query routing, so no extra training is required. The routing step is reported to run in O(1).
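To make query-aware allocation concrete, here is a minimal sketch of one way to turn per-frame relevance scores into a token budget. The proportional rule, the `allocate_tokens` function, and its parameter names are assumptions for illustration and are not Tempo's actual ATA procedure; only the 0.5 to 16 tokens-per-frame range comes from the description above.

```python
def allocate_tokens(relevance, budget, min_tokens=0.5, max_tokens=16.0):
    """Split a token budget across frames in proportion to query relevance.

    relevance: one non-negative score per frame (assumed to come from the scout).
    Fractional results are read as averages over neighboring frames (assumption).
    """
    n = len(relevance)
    floor_cost = n * min_tokens
    if floor_cost > budget:
        raise ValueError("budget too small to give every frame the minimum")
    spare = budget - floor_cost
    total_rel = sum(relevance)
    if total_rel == 0:
        # Nothing looks relevant: every frame stays at the minimum.
        return [min_tokens] * n
    # Each frame gets the minimum plus a relevance-weighted share, capped at the maximum.
    return [min(max_tokens, min_tokens + spare * r / total_rel) for r in relevance]


alloc = allocate_tokens([0.0, 0.1, 0.9, 0.0], budget=12)
print(alloc)
```

Because every share is capped and the shares sum to at most the spare budget, the total never exceeds `budget`. Capped frames simply leave some of it unused.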

The 6B architecture scores 52.3 on LVBench (average video length: 4,101 seconds) within an 8K visual token budget, outperforming GPT-4o and Gemini 1.5 Pro. What you keep matters more than how much you feed in.

Key takeaways:

- Video compression must be query-aware. Uniform sampling and fixed pooling blindly discard key frames.

- Small-model-compresses, large-model-reasons is a practical architecture for long video understanding.

- Beating GPT-4o under an 8K token budget supports the efficiency route over brute-force context expansion.

Source: Small Vision-Language Models are Smart Compressors for Long Video Understanding

03 Video Gen: Lighting Finally Gets Independent Control

Professional production doesn't need "pretty frames." It needs the ability to adjust lighting, swap camera angles, and change layouts independently. LiVER places a physics renderer upstream of the diffusion model. It first renders control signals — layout, lighting, camera motion — from a unified 3D scene representation, then injects those signals as conditions into the video diffusion model.

Decoupling is the core: changing lighting doesn't affect composition, switching camera angles doesn't alter illumination. Each scene element stays independently controllable. A scene agent translates natural language instructions into 3D control parameters, lowering the barrier to entry.
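A toy sketch of what decoupled control means at the interface level. The `Scene` fields and the `render_controls` stand-in are hypothetical and far simpler than a real physics renderer; the only point is that each control signal depends on exactly one scene factor.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Scene:
    """Toy 3D scene description. Field names are hypothetical."""
    layout: tuple        # object placements
    lighting: str        # named lighting setup
    camera_path: tuple   # sequence of camera keyframes


def render_controls(scene):
    """Stand-in for the renderer: emit one control signal per scene factor."""
    return {
        "layout": ("layout_map", scene.layout),
        "lighting": ("light_map", scene.lighting),
        "camera": ("camera_track", scene.camera_path),
    }


base = Scene(layout=("table", "chair"), lighting="noon", camera_path=("wide", "push_in"))
relit = replace(base, lighting="sunset")  # edit lighting only

before, after = render_controls(base), render_controls(relit)
changed = sorted(k for k in before if before[k] != after[k])
print(changed)  # ['lighting']
```

In a real system the three signals would be rendered maps passed to the diffusion model as conditions. The sketch only shows that an edit to one factor leaves the other two conditions unchanged.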

Accepted at CVPR. The direction targets professional workflows, though the paper's evaluation focuses on visual realism and temporal consistency. Real-world production usability still needs further validation.

Key takeaways:

- A physics renderer decouples lighting, layout, and camera motion, making each scene element independently controllable in video generation.

- A scene agent converts natural language to 3D control signals, reducing the barrier for professional tools.

- CVPR acceptance validates the direction, but real production-scenario performance still needs scrutiny.

Source: Lighting-grounded Video Generation with Renderer-based Agent Reasoning

04 Training: Scramble Audio and Video to Teach Cross-Modal Reasoning

Multimodal models need to learn how hearing and seeing work together. That usually requires massive human-annotated data. OmniJigsaw's approach is deceptively simple: shuffle audio and visual segments out of order, then have the model reassemble them chronologically. A jigsaw puzzle.

This trivial-sounding proxy task hides a clever mechanism. Sorting correctly requires the model to simultaneously understand visual content and audio cues, forcing genuine cross-modal integration. The researchers also discovered a "dual-modal shortcut": models cheat by relying on just one modality for sorting. The fix is randomly masking one modality at the segment level, compelling true fusion of both signals.
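The reordering task with segment-level masking can be sketched in a few lines. This is a minimal illustration under stated assumptions: `make_jigsaw_sample` and its parameters are hypothetical, and masking exactly one modality in every segment is one simple reading of the description above; the paper's actual masking schedule may differ.

```python
import random


def make_jigsaw_sample(video_segments, audio_segments, rng, mask_token=None):
    """Shuffle aligned audio/video segments and mask one modality per segment.

    Returns (shuffled, target): target[k] is the index in `shuffled` of the
    segment that belongs at chronological position k.
    """
    if len(video_segments) != len(audio_segments):
        raise ValueError("video and audio must have the same number of segments")
    order = list(range(len(video_segments)))
    rng.shuffle(order)  # order[i] = original position of shuffled segment i
    shuffled = []
    for original_index in order:
        keep = rng.choice(("video", "audio"))  # the other modality is masked
        shuffled.append({
            "video": video_segments[original_index] if keep == "video" else mask_token,
            "audio": audio_segments[original_index] if keep == "audio" else mask_token,
        })
    # The label comes for free from the original temporal order.
    target = sorted(range(len(order)), key=lambda i: order[i])
    return shuffled, target


rng = random.Random(0)
video = ["v0", "v1", "v2", "v3"]
audio = ["a0", "a1", "a2", "a3"]
sample, target = make_jigsaw_sample(video, audio, rng)
print(sample, target)
```

Because no segment carries both modalities, a model cannot sort the whole sequence from video alone or audio alone. It has to relate what it sees in one segment to what it hears in another.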

Testing across 15 benchmarks shows significant gains in video understanding, audio understanding, and cross-modal reasoning. The entire process requires zero human annotations and works directly on unlabeled data at scale.

Key takeaways:

- A minimal proxy task like temporal reordering forces models to learn cross-modal coordination, eliminating annotation costs.

- Segment-level modality masking is essential. Without it, models shortcut to single-modality sorting.

- Teams doing multimodal post-training should watch this zero-annotation self-supervised approach.

Source: OmniJigsaw: Enhancing Omni-Modal Reasoning via Modality-Orchestrated Reordering

Today's Observation

Three of today's four featured papers converge on the same engineering shift: learning signals are moving from "purpose-collected labeled data" to "structured signals that already exist at runtime."

SkillClaw extracts skill evolution pressure from collective user interactions. Nobody labels "this skill is broken." The system passively observes which skills fail in which scenarios. OmniJigsaw extracts cross-modal supervision from the natural temporal order of audio and video. No one annotates "this sound corresponds to this frame." Temporal consistency itself is the label. Tempo lets the downstream query define the video compression strategy at inference time. What to keep isn't a preset rule; the query decides.

All three bypass the traditional "collect labels → train model" loop. They tap signals that already exist in running systems but were previously discarded. This isn't about dataset scale. It's not the old self-supervised vs. supervised debate either. The real distinction: whether signals come from a dedicated collection process or from runtime byproducts.

Audit the structured data streams your system already touches: user interaction sequences, temporal media, query-response pairs. If you use them once and throw them away, you're wasting free learning signal. Pick one stream your system already produces and design a minimal proxy task (take cues from OmniJigsaw's temporal reordering). Test whether that signal can drive model improvement. The data costs nothing extra to collect, and the experiment may open an iteration path that doesn't depend on annotation at all.