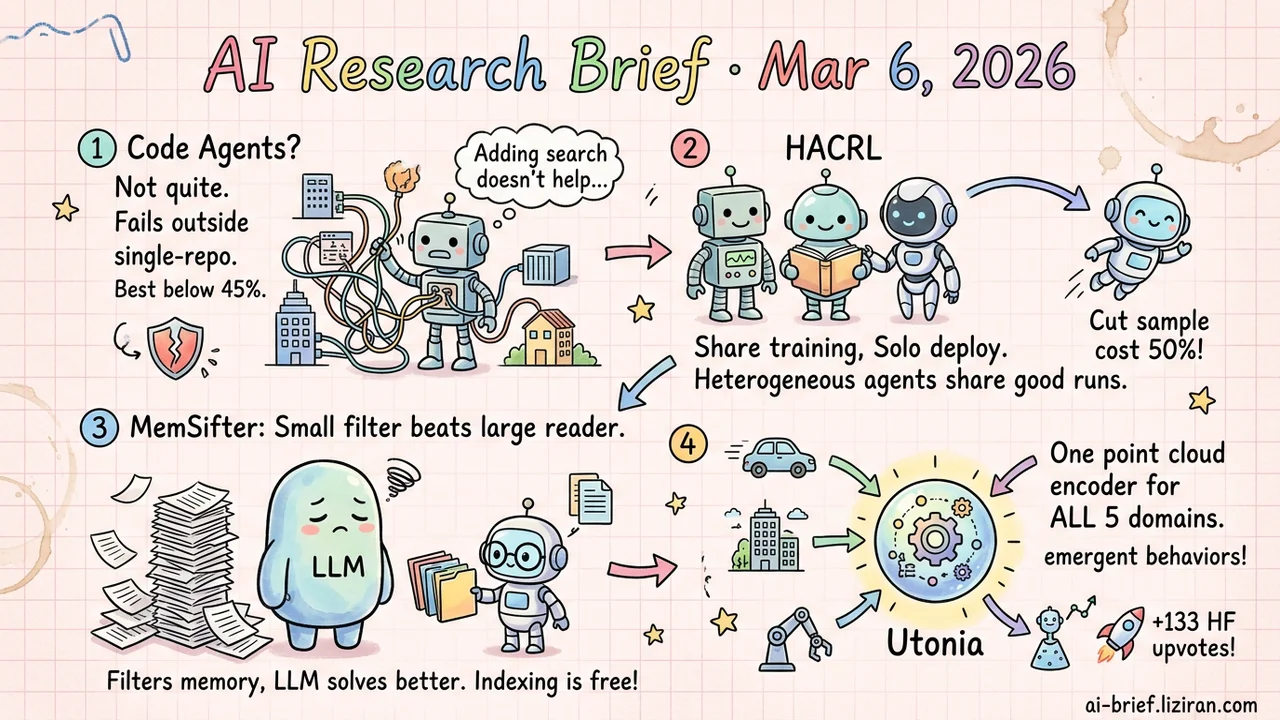

Today's Overview

- Code agents fall apart outside single-repo fixes. BeyondSWE tests four dimensions across 500 instances. The best model stays below 45% success. Adding search doesn't reliably help.

- Train together, deploy alone. HACRL lets heterogeneous agents share verified rollouts during training. Sampling cost drops by half. Zero overhead at inference.

- A small model filtering memory beats a large model reading everything. MemSifter trains a proxy retriever with RL, rewarding task completion directly. It matches or exceeds existing approaches across eight memory benchmarks.

- One encoder handles five point cloud domains. Utonia unifies representations across domains with completely different density and geometry. 133 HF upvotes, today's top community pick.

Featured

01 Code Intelligence SWE-bench Only Tests One Slice of Code Agent Ability

Mainstream code agent benchmarks cover one scenario: single-repo bug fixing. BeyondSWE expands to four directions — cross-repo reasoning, domain-specific problems, dependency-driven migration, and full repo generation — using 500 real instances. The results are unsurprising but worth internalizing: even the strongest frontier models stay below 45% success, and no single model holds consistent performance across all task types.

The team also built SearchSWE, testing whether deep search capabilities improve agent performance. Search helps in some cases, hurts in others. Developers constantly interleave searching and reasoning while coding. Current agents haven't learned that workflow.

For teams using code agents daily, this benchmark offers a practical calibration: your tools are unreliable on cross-repo collaboration and large-scale code generation. Knowing that boundary helps you plan human-AI collaboration more effectively.
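One way to do that calibration on your own logs is to tally success per task dimension instead of reporting a single overall number. The sketch below is a minimal illustration, not BeyondSWE's harness: the dimension labels, the `success_by_dimension` helper, and the sample records are all assumptions made up for this example.

```python
from collections import defaultdict

# Labels for the four directions named above (the label strings are hypothetical).
DIMENSIONS = (
    "cross_repo",
    "domain_specific",
    "dependency_migration",
    "repo_generation",
)

def success_by_dimension(results):
    """Return the success rate per dimension.

    results: iterable of (dimension, passed) pairs from your own agent logs.
    Dimensions with no records are left out of the returned dict.
    """
    totals = defaultdict(int)
    passes = defaultdict(int)
    for dimension, passed in results:
        if dimension not in DIMENSIONS:
            raise ValueError(f"unknown dimension: {dimension}")
        totals[dimension] += 1
        passes[dimension] += int(passed)
    return {d: passes[d] / totals[d] for d in DIMENSIONS if totals[d]}

# Made-up records for illustration only; this is not BeyondSWE data.
sample = [
    ("cross_repo", True), ("cross_repo", False),
    ("dependency_migration", False),
    ("repo_generation", False), ("repo_generation", True),
    ("domain_specific", True),
]
rates = success_by_dimension(sample)
for dimension, rate in rates.items():
    print(f"{dimension}: {rate:.0%}")
```

A per-dimension breakdown like this makes weak spots visible that an aggregate pass rate would hide.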

Key takeaways:

- Single-repo bug fixing is one slice of code agent capability. Four-dimension evaluation reveals much larger gaps.

- Frontier models score below 45% on extended tasks, with inconsistent performance across types.

- Search augmentation doesn't reliably improve agent performance. The complexity of developer workflows hasn't been effectively modeled.

Source: BeyondSWE: Can Current Code Agent Survive Beyond Single-Repo Bug Fixing?

02 Training Why Not Use Each Other's Correct Answers?

Different models fail on different problems. A can't solve a math problem that B handles easily, and vice versa. HACRL acts on this intuition: since heterogeneous agents already generate rollouts during training, why not share the verified successes?

The method lets agents of different architectures and scales share verified rollouts. Its optimization algorithm, HACPO, corrects for the policy distribution shift between agents to keep advantage estimation unbiased. The key constraint: inference stays fully independent. No coordination, no multi-model orchestration. Each agent runs on its own, but has already "borrowed" from peers during training. Results show an average 3.3% gain over GSPO at half the sampling cost. 92 HF upvotes suggest the community finds this "train together, deploy alone" pattern compelling.
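To make the sharing idea concrete, here is a minimal sketch of a pool of verified rollouts plus a clipped importance ratio for samples borrowed from another agent. This is a generic off-policy correction used for illustration, not HACPO itself: the summary above does not spell out the paper's exact formulation, and the `Rollout` fields, the `shared_pool` and `importance_weight` helpers, and the clip value of 2.0 are all assumptions.

```python
import math
from dataclasses import dataclass

@dataclass
class Rollout:
    agent: str            # which agent generated this rollout
    prompt: str
    response: str
    logp_behavior: float  # sequence log-prob under the generating agent
    verified: bool        # whether a verifier accepted the answer

def shared_pool(rollouts):
    """Keep only verified rollouts; any agent may train on them."""
    return [r for r in rollouts if r.verified]

def importance_weight(logp_learner, logp_behavior, clip=2.0):
    """Clipped sequence-level ratio pi_learner / pi_behavior for a borrowed rollout.

    Clipping from above limits the influence of samples the learner finds far
    more likely than the agent that generated them did (hypothetical choice).
    """
    ratio = math.exp(logp_learner - logp_behavior)
    return min(ratio, clip)

# Illustrative values only.
rollouts = [
    Rollout("A", "2+2?", "4", logp_behavior=-1.0, verified=True),
    Rollout("A", "3*3?", "6", logp_behavior=-0.5, verified=False),
    Rollout("B", "3*3?", "9", logp_behavior=-2.0, verified=True),
]
pool = shared_pool(rollouts)

# Agent B reuses agent A's verified rollout, reweighted by B's own log-prob.
weight_for_b = importance_weight(logp_learner=-1.0, logp_behavior=pool[0].logp_behavior)
```

Nothing in this sketch runs at inference time, which matches the "train together, deploy alone" constraint: the pool only exists during training.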

Key takeaways:

- Heterogeneous agents share verified rollouts for mutual learning, breaking single-agent self-sampling efficiency limits.

- Training is collaborative but inference is independent. Zero additional deployment cost.

- Halved sampling cost with better performance makes this attractive for resource-constrained RL training teams.

Source: Heterogeneous Agent Collaborative Reinforcement Learning

03 Retrieval Small Model as Memory Filter: Cheaper and More Accurate

The longer an agent runs, the more memory it accumulates, and the harder retrieval gets. Feeding all memory to the primary LLM is too expensive. Building indexes like memory graphs is compute-heavy and lossy. MemSifter adds a small model as a filtering layer: it reasons about what the current task needs, then precisely recalls relevant entries for the primary LLM.

Training is pragmatic. RL rewards tie directly to the primary LLM's task completion after receiving retrieved results, not to traditional retrieval relevance metrics. Across eight memory benchmarks including Deep Research tasks, retrieval accuracy and task completion match or exceed existing approaches. Indexing cost is near zero. Weights and code are open-sourced.
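The reward design can be sketched in a few lines: score the filter by whether the primary model completes the task with what the filter handed over, not by how relevant the retrieved entries look. Everything below is a toy stand-in under stated assumptions. `outcome_reward`, the keyword selector, and the solver are hypothetical placeholders for MemSifter's small model and the primary LLM, not the project's actual API.

```python
def outcome_reward(memory, task, select, solve, check):
    """Return 1.0 if the primary model succeeds using the filtered memory, else 0.0.

    select: the small filter model (memory, task) -> selected entries
    solve:  the primary model (task, selected entries) -> answer
    check:  a task-level verifier (task, answer) -> bool
    """
    selected = select(memory, task)
    answer = solve(task, selected)
    return 1.0 if check(task, answer) else 0.0

# Toy stand-ins (hypothetical): a keyword filter, a trivial solver, a checker.
memory = [
    "deploy key is in vault path A",
    "lunch was pasta",
    "staging uses port 8443",
]
task = "Which port does staging use?"

def keyword_select(memory, task):
    words = {w.strip("?.").lower() for w in task.split()}
    return [m for m in memory if words & set(m.lower().split())]

def irrelevant_select(memory, task):
    return [memory[1]]  # a bad filter that returns an unrelated entry

def toy_solve(task, selected):
    # Pretend the primary model reads off the last token of the first entry.
    return selected[0].split()[-1] if selected else ""

def toy_check(task, answer):
    return answer == "8443"

good = outcome_reward(memory, task, keyword_select, toy_solve, toy_check)
bad = outcome_reward(memory, task, irrelevant_select, toy_solve, toy_check)
```

The filter is only rewarded when the downstream answer is right, so a selector that returns plausible but useless entries earns nothing.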

Key takeaways:

- A small model pre-filters memory, drastically reducing the primary LLM's context processing cost.

- RL rewards tied to task completion rather than retrieval relevance better reflect real usage.

- Open-sourced and benchmarked. A practical fit for long-running agent applications.

Source: MemSifter: Offloading LLM Memory Retrieval via Outcome-Driven Proxy Reasoning

04 Architecture One Encoder for Five Completely Different Point Cloud Domains

Point cloud data has always been siloed. Remote sensing, LiDAR, indoor RGB-D, CAD models, video reconstruction: each has wildly different density, geometry, and priors. Nobody expected a single model to handle all of them. Utonia does it anyway: one self-supervised point cloud Transformer encoder trained across five domains. The domains don't drag each other down. Unified representation actually produces emergent behaviors invisible to single-domain training.

Downstream transfer is where it gets practical. Plug Utonia features into vision-language-action policies and robot manipulation improves. Plug them into VLMs and spatial reasoning gets stronger. NLP walked this "unified pretrained representation" path years ago. Point clouds may be at the same starting line now. 133 HF upvotes make it today's top community pick — teams in 3D and embodied AI are paying attention.

Key takeaways:

- Five point cloud domains with completely different density and geometry share one encoder. Cross-domain transfer works.

- Joint training produces emergent behaviors absent in single-domain training. Unified representation is more than a convenience.

- Point cloud features directly benefit robotics and spatial reasoning downstream. Teams working on 3D should follow this closely.

Today's Observation

Three independent papers today converge on the same problem: the gap between "good at one task" and "generalizes across scenarios" can't be filled by scaling parameters alone.

BeyondSWE presents the starkest data. Code agents already perform well on SWE-bench-style single-repo bug fixes, but once tasks extend to cross-repo reasoning and dependency migration, success rates fall off a cliff: even the best model stays below 45%. Capability doesn't transfer automatically, and search augmentation can't reliably rescue it. Utonia approaches the same gap from the constructive side: one point cloud encoder achieves consistent representation across remote sensing, LiDAR, indoor, CAD, and video reconstruction. The solution isn't a bigger model or more data. It's a redesigned cross-domain consistency objective. HACRL offers a third angle: let agents with different architectures share verified training experiences, using collaboration to bypass single-model generalization ceilings while cutting sampling cost in half.

The shared signal: the lever for expanding capability boundaries may not be model scale, but redesigned training paradigms and evaluation dimensions. If you use code agents beyond single-repo fixes, BeyondSWE's four-dimension framework is worth applying to your own team's usage patterns. Knowing where your tools break is more valuable than trusting them blindly.