Today's Overview

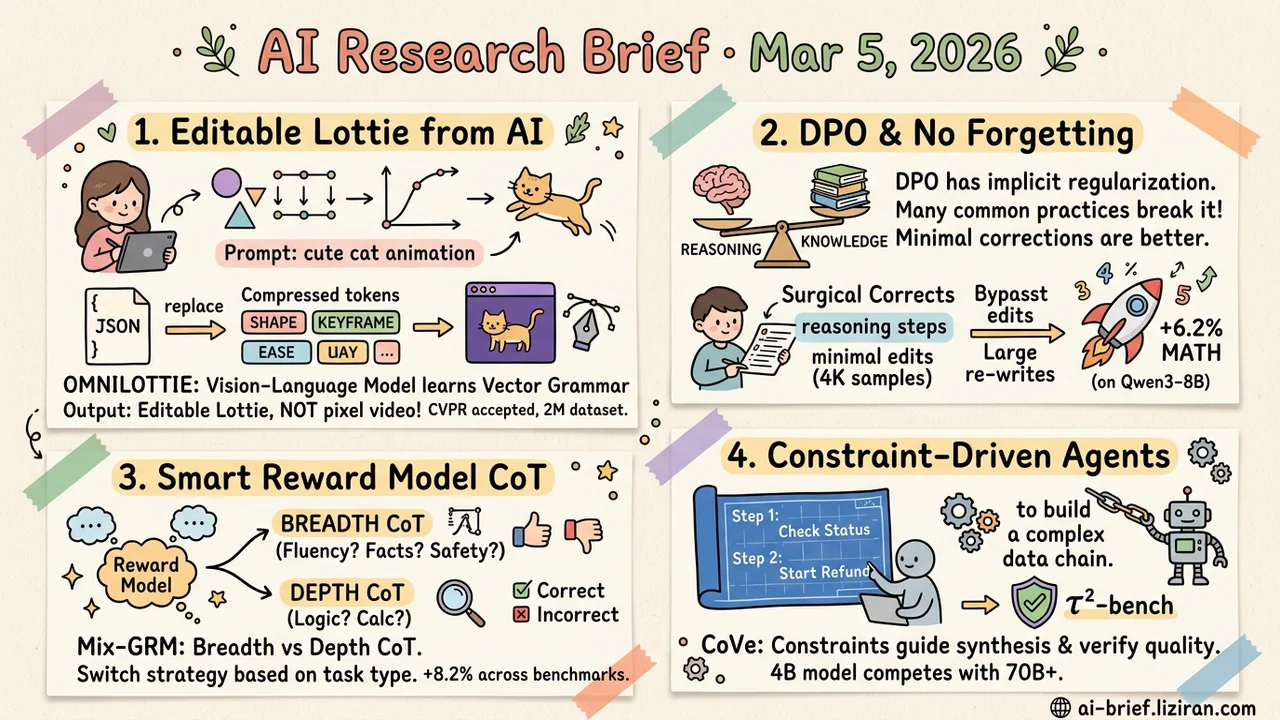

- AI-generated animation can now be exported directly as editable project files. OmniLottie compresses Lottie's verbose JSON into parameterized token sequences, which lets vision-language models generate vector animations complete with keyframes and easing curves, with no format conversion needed. The paper was accepted at CVPR, and its 2M-animation dataset is open-sourced.

- DPO's reward estimation carries an implicit regularization that suppresses catastrophic forgetting, and SPoT finds that many common post-training practices break this built-in protection. A minimal correction set of 4K samples raises Qwen3-8B's math performance by 6.2%.

- Longer CoT in reward models isn't always better. Mix-GRM distinguishes breadth CoT from depth CoT, each serving different task types. Structured decomposition beats the best open-source reward model by 8.2% across five benchmarks.

- Constraints serve as both generation blueprint and quality check. CoVe uses explicit constraints to drive both synthesis and verification of agent training data. A 4B model competes with models 17x its size on τ²-bench.

Featured

01 Video Gen AI Animation Now Outputs Production-Ready Files

Lottie is the de facto standard for frontend vector animation: lightweight, cross-platform, and built on keyframes and easing curves. Its JSON files, however, often run to thousands of lines packed with structural metadata and formatting tokens. OmniLottie introduces a Lottie tokenizer that compresses this verbose JSON into structured "command + parameter" sequences, keeping only shapes, animation functions, and control parameters while stripping the invariant formatting noise. This lets pretrained vision-language models learn to generate Lottie animations directly from text, images, or mixed instructions.
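To make the "command + parameter" idea concrete, here is a minimal Python sketch. It is not OmniLottie's tokenizer: the token names (`ANIM`, `LAYER`, `KF`, `EASE`, `END`) and the `tokenize` function are hypothetical, and the sketch handles only a layer's position track in a simplified document. The fields it reads (`fr`, `ip`, `op`, `w`, `h`, `layers`, `ks.p`, and keyframe `t`/`s`/`i`/`o`) follow the public Lottie JSON format, while metadata such as the version string and layer name is dropped.

```python
import json
from typing import Any


def tokenize(doc: dict[str, Any]) -> list:
    """Flatten a simplified Lottie document into hypothetical command + parameter tokens."""
    # Frame rate, in/out points, and canvas size; the version string "v" is discarded.
    tokens: list = ["ANIM", doc["fr"], doc["ip"], doc["op"], doc["w"], doc["h"]]
    for layer in doc["layers"]:
        # Keep the layer type; drop the human-readable layer name "nm".
        tokens += ["LAYER", layer["ty"]]
        pos = layer["ks"]["p"]
        if pos.get("a", 0) == 0:
            # Static position: a single command carrying the [x, y] value.
            tokens += ["POS", *pos["k"]]
        else:
            for kf in pos["k"]:
                # Keyframe: time, then the [x, y] start value.
                tokens += ["KF", kf["t"], *kf["s"]]
                if "o" in kf and "i" in kf:
                    # Bezier easing handles (out-tangent, then in-tangent) for the next segment.
                    tokens += ["EASE", kf["o"]["x"][0], kf["o"]["y"][0],
                               kf["i"]["x"][0], kf["i"]["y"][0]]
        # Close the current layer.
        tokens.append("END")
    return tokens


lottie_json = """
{
  "v": "5.7.4", "fr": 30, "ip": 0, "op": 60, "w": 512, "h": 512,
  "layers": [{
    "ty": 4, "nm": "dot",
    "ks": {"p": {"a": 1, "k": [
      {"t": 0, "s": [100, 100], "o": {"x": [0.333], "y": [0]}, "i": {"x": [0.667], "y": [1]}},
      {"t": 60, "s": [400, 100]}
    ]}}
  }]
}
"""

tokens = tokenize(json.loads(lottie_json))
print(tokens)
```

A real tokenizer would also need to cover shape paths, the other transform properties, and numeric quantization; the sketch only shows that every surviving token carries animation content rather than formatting.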

The output format is what matters most. The model produces not pixel video or GIFs but full Lottie project files with vector paths, keyframes, and easing curves, which designers can open in After Effects or Figma and keep editing. The team also built MMLottie-2M, a dataset of 2 million professionally designed vector animations with text and visual annotations. The paper was accepted at CVPR, has 121 upvotes from the Hugging Face community, and its code is open-sourced.

Key takeaways:
- Output is editable Lottie project files, not pixel video. No format conversion step.
- The Lottie tokenizer compresses verbose JSON into structured sequences, teaching language models the "grammar" of vector animation.
- Teams working on motion design tools or frontend animation should look closely.

Source: OmniLottie: Generating Vector Animations via Parameterized Lottie Tokens

02 Training DPO Already Prevents Forgetting. You Might Be Breaking It.

Teams doing DPO post-training know the anxiety: reasoning improves, but previously learned knowledge starts collapsing. The standard fixes are on-policy data, replay buffers, and other anti-forgetting mechanisms, often stacked on top of one another. SPoT's finding is surprising: DPO's reward estimation already contains an implicit regularization mechanism that, in theory, suppresses catastrophic forgetting. This mechanism had received little serious attention before.

The counterintuitive part: many common post-training practices actively undermine this built-in protection. SPoT's "surgical" training approach has two parts. First, an oracle makes minimal corrections to the erroneous reasoning steps instead of rewriting entire answers, keeping the training data close to the model's own distribution. Second, a binary cross-entropy objective replaces DPO's relative ranking objective. With just 4K correction samples and 28 minutes of training, Qwen3-8B improves 6.2% on math tasks, both in-domain and out-of-domain.
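For readers who want the two objectives side by side, here is a minimal Python sketch. The implicit reward `beta * (logp_policy - logp_ref)` and the pairwise `dpo_loss` are the standard DPO formulation. The `bce_loss` function is an assumption: one plausible way to apply binary cross-entropy to per-response implicit rewards, not necessarily SPoT's exact objective. The value `beta = 0.1` and the log-probabilities are arbitrary illustrative numbers.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def implicit_reward(logp_policy: float, logp_ref: float, beta: float = 0.1) -> float:
    """DPO's implicit reward: scaled log-ratio of policy to reference probability."""
    return beta * (logp_policy - logp_ref)


def dpo_loss(logp_w: float, ref_w: float, logp_l: float, ref_l: float, beta: float = 0.1) -> float:
    """Standard DPO: a relative ranking loss on the chosen-minus-rejected reward margin."""
    margin = implicit_reward(logp_w, ref_w, beta) - implicit_reward(logp_l, ref_l, beta)
    return -log_sigmoid(margin)


def bce_loss(logp: float, ref: float, label: int, beta: float = 0.1) -> float:
    """Assumed BCE variant: classify each response alone as correct (1) or incorrect (0)."""
    r = implicit_reward(logp, ref, beta)
    return -log_sigmoid(r) if label == 1 else -log_sigmoid(-r)


# Policy identical to the reference: the DPO loss equals log(2).
print(dpo_loss(-10.0, -10.0, -12.0, -12.0))
# Raising both rewards by the same amount leaves the margin, and so the DPO loss, unchanged.
print(dpo_loss(-9.0, -10.0, -11.0, -12.0))
# The BCE form scores each response on its own, so the same shift does change it.
print(bce_loss(-9.0, -10.0, label=1), bce_loss(-11.0, -12.0, label=0))
```

The design difference the sketch makes visible: DPO only sees the gap between two rewards, while a per-response BCE pins each reward's sign relative to the reference model.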

Key takeaways:
- DPO's reward estimation carries implicit regularization, an overlooked anti-forgetting mechanism.
- "Minimal correction" keeps training data closer to the model's own distribution than full rewrites do.
- Before adding anti-forgetting schemes to your DPO pipeline, check whether your existing setup is inadvertently breaking this built-in protection.

Source: Surgical Post-Training: Cutting Errors, Keeping Knowledge

03 Evaluation Reward Model CoT: Length Isn't the Point

It is well established that letting reward models think longer before scoring improves their judgments. Mix-GRM points to an overlooked distinction: breadth CoT (covering multiple evaluation dimensions such as fluency, factuality, and safety) and depth CoT (deep reasoning on a single dimension) serve fundamentally different purposes. Extending the chain without distinguishing the two actually hurts.

Breadth CoT works better for subjective preference tasks like style judgment. Depth CoT wins on objective correctness. Use the wrong one and performance drops. Mix-GRM structurally separates these two reasoning modes, then trains with RLVR (reinforcement learning with verifiable rewards) so the model learns to switch strategies based on task type. It beats the best open-source reward model by 8.2% across five benchmarks.
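As an illustration of the distinction (not Mix-GRM's actual templates), the hypothetical Python sketch below builds a breadth-style prompt that briefly covers several dimensions and a depth-style prompt that reasons step by step about correctness alone. The `route` function is a hand-written stand-in: in Mix-GRM the model learns when to use each mode through RLVR rather than following a fixed rule, and the prompt wording here is invented.

```python
# Example dimensions taken from the text; a real template would choose its own.
BREADTH_DIMENSIONS = ("fluency", "factuality", "safety")


def breadth_prompt(task: str, response: str) -> str:
    """Breadth CoT: a short judgment on each of several dimensions, then an aggregate."""
    lines = [f"Task: {task}", f"Response: {response}",
             "Briefly assess each dimension and give it a 1-10 score:"]
    lines += [f"- {dim}:" for dim in BREADTH_DIMENSIONS]
    lines.append("Final score: combine the dimension scores.")
    return "\n".join(lines)


def depth_prompt(task: str, response: str) -> str:
    """Depth CoT: extended reasoning on a single dimension (correctness)."""
    return "\n".join([
        f"Task: {task}",
        f"Response: {response}",
        "Check correctness step by step:",
        "1. Solve the task independently.",
        "2. Compare each step of the response against your solution.",
        "3. Identify the first incorrect step, if any.",
        "Final score: 1-10, based only on correctness.",
    ])


def route(task_type: str):
    """Hypothetical fixed rule; Mix-GRM instead learns this switch via RLVR."""
    return breadth_prompt if task_type == "subjective" else depth_prompt


print(route("subjective")("Rewrite this email to sound friendlier.",
                          "Hi there, thanks for reaching out!"))
print(route("objective")("Compute 17 * 24.", "17 * 24 = 408"))
```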

Key takeaways:
- Reward model CoT needs structural design, not just more tokens. Breadth and depth serve different task types.
- Teams running RLHF pipelines can use this to optimize their reward models' reasoning templates.
- RLVR lets the model automatically match its reasoning style to the task, reducing manual tuning.

Source: Beyond Length Scaling: Synergizing Breadth and Depth for Generative Reward Models

04 Agent Constraints as Both Blueprint and Quality Gate

Training data for tool-use agents is hard to build. User intents are vague, but tool calls have zero tolerance for errors. Synthetic data is either too simple to be useful or too complex to verify. CoVe decomposes tasks into explicit constraints (e.g., "must query order status before initiating refund"), then uses those constraints in two roles: guiding synthesis of complex multi-turn trajectories during generation, and automatically judging trajectory correctness during verification. Both SFT and RL training signals derive from the same constraint set, giving data quality a deterministic anchor.
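The dual role of a constraint can be sketched in a few lines of Python. Each hypothetical `Constraint` pairs a natural-language description (fed to the generator as part of a synthesis brief) with an executable check (run against finished trajectories). The tool names `get_order_status` and `initiate_refund`, and the helpers `must_call`, `must_precede`, `synthesis_brief`, and `verify`, are illustrative assumptions, not CoVe's code.

```python
from dataclasses import dataclass
from typing import Callable

# A trajectory is modeled as an ordered list of (tool_name, arguments) calls.
ToolCall = tuple[str, dict]


@dataclass
class Constraint:
    description: str                         # blueprint role: shown to the generator
    check: Callable[[list[ToolCall]], bool]  # quality-gate role: run on the trajectory


def must_call(tool: str) -> Constraint:
    return Constraint(f"must call {tool}",
                      lambda traj: any(name == tool for name, _ in traj))


def must_precede(first: str, then: str) -> Constraint:
    def check(traj: list[ToolCall]) -> bool:
        names = [name for name, _ in traj]
        if then not in names:
            return True  # ordering is only enforced if `then` actually occurs
        return first in names[: names.index(then)]
    return Constraint(f"must call {first} before {then}", check)


def synthesis_brief(constraints: list[Constraint]) -> str:
    """Generation side: the constraints become instructions for the data generator."""
    return "Generate a multi-turn trajectory that satisfies:\n" + "\n".join(
        f"- {c.description}" for c in constraints)


def verify(traj: list[ToolCall], constraints: list[Constraint]) -> list[str]:
    """Verification side: return the description of every violated constraint."""
    return [c.description for c in constraints if not c.check(traj)]


constraints = [must_call("initiate_refund"),
               must_precede("get_order_status", "initiate_refund")]
good = [("get_order_status", {"order_id": "A1"}), ("initiate_refund", {"order_id": "A1"})]
bad = [("initiate_refund", {"order_id": "A1"}), ("get_order_status", {"order_id": "A1"})]

print(synthesis_brief(constraints))
print(verify(good, constraints))  # prints []
print(verify(bad, constraints))
```

Because generation and verification read from one constraint list, a trajectory that drifts from its brief is rejected by the same rules that produced it.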

A 4B model achieves 43%/59.4% success rates on τ²-bench, competing with models 17x its size.

Key takeaways:
- Constraints serve simultaneously as generation blueprints and quality gates, resolving the "complexity vs. correctness" dilemma in agent training data.
- A 4B model competing with models roughly 17x its size on τ²-bench supports the approach.
- Teams building agent data flywheels should consider this constraint-driven synthesis-verification loop.

Source: CoVe: Training Interactive Tool-Use Agents via Constraint-Guided Verification

Also Worth Noting

Today's Observation

Three threads converge on "structured evaluation" today. Mix-GRM finds that reward model CoT reasoning needs to distinguish breadth from depth — blindly extending the chain is counterproductive. RubricBench reveals that rubric-guided evaluation itself lacks calibration standards. We've been measuring with uncalibrated rulers. CoVe shows a different path: explicit constraints that guide both data generation and quality verification, turning evaluation criteria from subjective judgment into executable rules.

A shared direction emerges: "let the evaluator think longer" is no longer sufficient. The evaluation process itself needs engineering — decomposed dimensions, defined standards, designed constraints. Two years ago, LLM evaluation moved from single scores to multi-dimensional rubrics. That same demand has now reached reward models and agent training data quality. Evaluation is no longer a post-training acceptance step. It's infrastructure that runs through the entire pipeline.

If your team does RLHF or agent training, ask this: how many layers of structural design has your reward signal gone through? If the answer is "direct model scoring" or "one CoT chain end to end," each of today's three papers offers one improvement you can try immediately.