Today's Overview

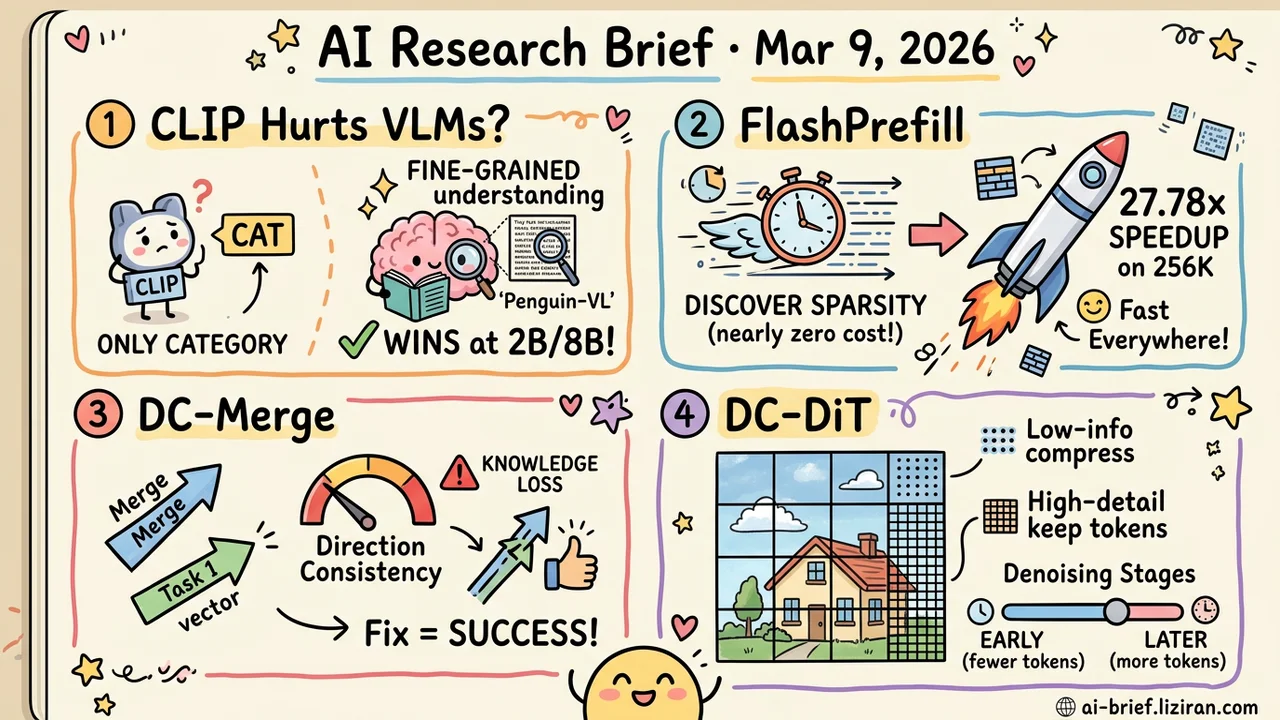

- Contrastive Pretraining Actively Hurts VLMs. CLIP optimizes for category discrimination, not fine-grained understanding. Tencent's Penguin-VL initializes the vision encoder from a text-only LLM, beating CLIP/SigLIP alternatives at 2B and 8B scale.

- Sparse Attention's Bottleneck Shifts from "How to Sparsify" to "How to Discover." FlashPrefill shows that attention sparsity patterns can be identified at near-zero cost. That yields a 27.78x speedup on 256K sequences, with no degradation at 4K.

- Model Merging Failures Now Have a Quantifiable Diagnostic. DC-Merge finds that direction deviation of task vectors in singular space directly predicts knowledge loss. Fixing directional consistency systematically improves merge quality.

- Diffusion Models Learn to Allocate Compute by Information Density. DC-DiT gives high-detail regions more tokens and compresses low-information areas. The allocation strategy adapts across denoising stages and warm-starts from existing DiT checkpoints.

Featured

01 Multimodal VLMs Don't Need a Vision Encoder That's "Seen" Images

Nearly every mainstream VLM uses CLIP or SigLIP as its vision encoder. These models are trained on massive collections of image-text pairs, so the working assumption is that they understand images best. Tencent's team spotted a fundamental mismatch: contrastive learning optimizes coarse category discrimination ("cat or dog"), while VLMs need fine-grained visual understanding ("what does line three say"). To maximize retrieval similarity, CLIP actively suppresses spatial detail and local features.
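
To make the mismatch concrete, here is a minimal NumPy sketch of a CLIP-style symmetric contrastive loss. It is an illustration under stated assumptions, not CLIP's or Penguin-VL's actual code: the function name, the mean pooling over patch features, and the temperature value are all hypothetical choices. What it shows is that the loss only ever sees one pooled vector per image, so nothing in the objective rewards keeping patch-level layout.

```python
import numpy as np

def clip_style_contrastive_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric InfoNCE loss over a batch of pooled image/text embeddings.

    image_emb, text_emb: (batch, dim) arrays; row i of each is a matching pair.
    The temperature is an assumed value for illustration.
    """
    # L2-normalise so the dot product is cosine similarity.
    image_emb = image_emb / np.linalg.norm(image_emb, axis=1, keepdims=True)
    text_emb = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)
    logits = image_emb @ text_emb.T / temperature  # (batch, batch)

    def cross_entropy_on_diagonal(l):
        # For row i, the correct "class" is column i (the matching pair).
        l = l - l.max(axis=1, keepdims=True)  # numerical stability
        log_probs = l - np.log(np.exp(l).sum(axis=1, keepdims=True))
        return -np.mean(np.diag(log_probs))

    # Average the image-to-text and text-to-image directions.
    return 0.5 * (cross_entropy_on_diagonal(logits)
                  + cross_entropy_on_diagonal(logits.T))

# Hypothetical patch features: (batch, num_patches, dim), pooled by a mean.
rng = np.random.default_rng(0)
patch_features = rng.normal(size=(4, 16, 8))
captions = rng.normal(size=(4, 8))
loss = clip_style_contrastive_loss(patch_features.mean(axis=1), captions)

# Shuffling the patch features changes the spatial layout but not the pooled vector.
shuffled = patch_features[:, rng.permutation(16), :]
loss_shuffled = clip_style_contrastive_loss(shuffled.mean(axis=1), captions)
print(np.isclose(loss, loss_shuffled))
```

In this toy setup the two losses agree, because the objective is computed entirely on pooled embeddings. A real encoder uses positional information internally, so this demonstrates only what the loss consumes, not how any specific model behaves.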

Penguin-VL takes a counterintuitive approach: initialize the vision encoder from a text-only LLM, skipping contrastive pretraining entirely. At 2B and 8B scale, it beats SigLIP-based models across document understanding, visual knowledge, and multi-view video comprehension. Math reasoning matches Qwen3-VL. For compact models, better visual representations matter more than more parameters.

One caveat: these results are limited to small models. Whether CLIP's contrastive pretraining remains suboptimal at larger scales is still an open question.

Key takeaways:

- Contrastive pretraining optimizes category separation, not fine-grained understanding. This fundamentally conflicts with VLM objectives.

- A text-only LLM as vision encoder beats CLIP/SigLIP alternatives at 2B and 8B scale.

- Conclusion holds for compact models. Whether it extends to larger scales needs further validation.

Source: Penguin-VL: Exploring the Efficiency Limits of VLM with LLM-based Vision Encoders

02 Efficiency The Search Cost Can Be Worse Than the Attention Itself

Sparse attention for prefill acceleration isn't new. Most approaches share an awkward problem: figuring out which tokens matter costs as much as the attention you're trying to skip. FlashPrefill reframes this. It discovers that attention sparsity patterns (vertical, slash, and block-sparse) can be identified through fast block search at near-zero cost, with no sorting or score accumulation needed.

A dynamic thresholding mechanism cuts long-tail distributions to increase sparsity further. On 256K sequences, this delivers 27.78x speedup. Short contexts don't suffer either: 4K sequences still see 1.71x acceleration. This isn't a trick that only pays off at extreme lengths. Everyday inference benefits too.
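
The idea of cheap block-level discovery plus a tail-cutting threshold can be sketched in a few lines of NumPy. This is a toy under stated assumptions, not FlashPrefill's kernel: summarising each block by its mean vector, the `tail_cutoff` parameter, and the function name `select_key_blocks` are all hypothetical. The threshold is expressed relative to each row's maximum, which is one way to drop the long tail without sorting.

```python
import numpy as np

def select_key_blocks(q, k, block_size=64, tail_cutoff=0.01):
    """Return a (num_blocks, num_blocks) boolean mask of key blocks to compute.

    q, k: (seq_len, head_dim) arrays for a single attention head.
    Assumes seq_len is a multiple of block_size.
    """
    seq_len, head_dim = q.shape
    num_blocks = seq_len // block_size
    # One cheap pass: summarise every block by its mean vector (an assumption).
    q_blocks = q.reshape(num_blocks, block_size, head_dim).mean(axis=1)
    k_blocks = k.reshape(num_blocks, block_size, head_dim).mean(axis=1)
    scores = q_blocks @ k_blocks.T / np.sqrt(head_dim)

    # Causal prefill: a query block never attends to later key blocks.
    causal = np.tril(np.ones((num_blocks, num_blocks), dtype=bool))
    scores = np.where(causal, scores, -np.inf)

    # Dynamic threshold: keep a block only if its softmax weight would be at
    # least tail_cutoff times the row's largest weight. In log space that is a
    # comparison against the row maximum, so no sorting is needed.
    row_max = scores.max(axis=1, keepdims=True)
    mask = scores >= row_max + np.log(tail_cutoff)
    # Always keep the diagonal (local) block.
    return mask | np.eye(num_blocks, dtype=bool)

# Toy input: every query points the same way, and only the first key block matches it.
q = np.ones((256, 4))
k = np.zeros((256, 4))
k[:64] = 5.0
mask = select_key_blocks(q, k)
print(mask.astype(int))
print("blocks kept:", int(mask.sum()), "of", 10, "causal blocks")
```

In the toy input the surviving blocks form a vertical stripe plus the diagonal, 7 of the 10 causal blocks. Real attention heads are far less tidy, and the speedups quoted above depend on the paper's actual search and kernels, which this sketch does not reproduce.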

Key takeaways:

- The core bottleneck in sparse attention has shifted from "how to sparsify" to "how to discover sparsity patterns at near-zero cost."

- 27.78x speedup at 256K with no short-context degradation (4K sequences still run 1.71x faster). Broadly applicable in deployment.

- Teams optimizing long-context inference should track this direction closely.

03 Training Model Merging Failures Hide in Singular Value Directions

Model merging produces inconsistent results, and until now there was no clear diagnostic framework for why a particular merge failed. DC-Merge proposes an actionable metric: directional consistency between the merged multi-task vector and individual task vectors in singular space. Greater deviation means greater knowledge loss.
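
One way to picture such a metric is sketched below. The exact definition used by DC-Merge is not given here, so this is an assumed formulation: take the SVD of a task vector, read off the merged vector's coordinates along the same singular directions, and compare the two coordinate vectors by cosine similarity. The function name and the `rank` and `eps` parameters are hypothetical.

```python
import numpy as np

def directional_consistency(task_vec, merged_vec, rank=None, eps=1e-12):
    """Cosine similarity between a task vector and a merged vector,
    measured along the task vector's own singular directions.

    task_vec, merged_vec: 2-D weight deltas of the same shape.
    Returns a value in [-1, 1]; 1 means the merge preserved the directions.
    """
    u, s, vt = np.linalg.svd(task_vec, full_matrices=False)
    if rank is not None:
        u, s, vt = u[:, :rank], s[:rank], vt[:rank]
    # Coordinate k of the merged matrix along direction u_k v_k^T is u_k^T M v_k.
    merged_coords = np.einsum("ik,ij,kj->k", u, merged_vec, vt)
    denom = np.linalg.norm(s) * np.linalg.norm(merged_coords) + eps
    return float(s @ merged_coords / denom)

# Two toy task vectors that disagree on the dominant direction.
task_a = np.diag([3.0, 1.0])
task_b = np.diag([-3.0, 1.0])
merged = task_a + task_b  # naive sum cancels the dominant component

print(directional_consistency(task_a, task_a))
print(directional_consistency(task_a, merged))
```

In the toy case the naive sum wipes out the dominant direction of `task_a`, so the score against the merged vector falls well below the score of `task_a` against itself. That is the kind of deviation the diagnostic is meant to surface.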

The paper identifies two specific failure modes. First, uneven singular value energy distribution lets a few dominant components drown out semantically important but weaker signals. Second, geometric structure misalignment across task vectors in parameter space distorts directions during direct merging. The fix: smooth the singular value distribution first, then project onto a shared orthogonal subspace to align geometric structure. The method reports state-of-the-art results on vision and vision-language benchmarks under both full fine-tuning and LoRA settings. Accepted at CVPR.
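
The two-step fix can be sketched as follows. Both steps here are stand-ins chosen for illustration, not the paper's procedure: smoothing is done with a power transform on the singular values (hypothetical `alpha`), and the shared orthogonal subspace is taken from SVDs of the stacked task vectors (hypothetical `rank`). The sketch assumes non-zero task vectors of identical shape.

```python
import numpy as np

def smooth_singular_values(task_vec, alpha=0.5):
    """Flatten the singular value spectrum while keeping the Frobenius norm.

    alpha=1 leaves the matrix unchanged; smaller alpha evens out the energy.
    """
    u, s, vt = np.linalg.svd(task_vec, full_matrices=False)
    s_smooth = s ** alpha
    s_smooth = s_smooth * (np.linalg.norm(s) / np.linalg.norm(s_smooth))
    return (u * s_smooth) @ vt  # scales column k of u by s_smooth[k]

def merge_in_shared_subspace(task_vecs, rank, alpha=0.5):
    """Smooth each task vector, then sum them inside one shared subspace."""
    smoothed = [smooth_singular_values(t, alpha) for t in task_vecs]
    # Shared orthonormal bases: left basis from column-stacked tasks,
    # right basis from row-stacked tasks (an assumed construction).
    u_shared, _, _ = np.linalg.svd(np.hstack(smoothed), full_matrices=False)
    _, _, vt_shared = np.linalg.svd(np.vstack(smoothed), full_matrices=False)
    u_shared, vt_shared = u_shared[:, :rank], vt_shared[:rank]
    # Express every task in shared coordinates, add there, then map back.
    core = sum(u_shared.T @ t @ vt_shared.T for t in smoothed)
    return u_shared @ core @ vt_shared

task_a = np.diag([9.0, 1.0])
task_b = np.array([[1.0, 2.0], [0.0, 3.0]])
print(np.linalg.svd(task_a, compute_uv=False))
print(np.linalg.svd(smooth_singular_values(task_a), compute_uv=False))
print(merge_in_shared_subspace([task_a, task_b], rank=1))
```

The printed spectra show the dominant singular value shrinking relative to the weaker one after smoothing, which is the effect the first step aims for. How the smoothed, aligned vectors are finally combined and rescaled is a design decision the sketch leaves at a plain sum.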

Key takeaways:

- Directional consistency in singular space is a quantifiable diagnostic for merge quality. High deviation means knowledge loss.

- Uneven singular value energy and geometric misalignment are two specific, fixable root causes of merge failure.

- Works for both full fine-tuning and LoRA. Directly applicable to multi-task deployment.

Source: DC-Merge: Improving Model Merging with Directional Consistency

04 Image Gen Diffusion Models Stop Wasting Compute on Empty Skies

DiT's patchify operation slices images into fixed-size token sequences. Background sky and complex textures get identical compute budgets. DC-DiT adds an encoder-router-decoder module to the DiT backbone that learns to allocate tokens by regional information density end-to-end: low-information areas compress into fewer tokens, high-detail regions keep more. The visual segmentation emerges unsupervised.
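
A hand-built toy makes the allocation idea tangible. DC-DiT learns its router end-to-end, so the sketch below is only an analogy under stated assumptions: per-patch pixel variance stands in for "information density," a fixed `var_threshold` stands in for the learned routing decision, and low-density 2x2 groups of patches are assumed to collapse into a single token. All names are hypothetical.

```python
import numpy as np

def route_patches(image, patch=4, var_threshold=1e-3):
    """Decide which 2x2 groups of patches stay fine-grained.

    image: (H, W) grayscale array; H and W must be multiples of 2 * patch.
    Returns (keep_fine, num_tokens): a boolean grid with one entry per 2x2
    group of patches, and the resulting token count.
    """
    h, w = image.shape
    gh, gw = h // patch, w // patch
    # Split into patches: result has shape (gh, gw, patch * patch).
    patches = (image.reshape(gh, patch, gw, patch)
                    .transpose(0, 2, 1, 3)
                    .reshape(gh, gw, -1))
    density = patches.var(axis=-1)  # variance as a crude density proxy
    # A group stays fine-grained if any of its four patches is detailed.
    group_density = density.reshape(gh // 2, 2, gw // 2, 2).max(axis=(1, 3))
    keep_fine = group_density > var_threshold
    # Fine groups keep 4 tokens; the rest are merged into 1 token each.
    num_tokens = int(4 * keep_fine.sum() + (~keep_fine).sum())
    return keep_fine, num_tokens

# Toy image: flat "sky" everywhere except a textured top-left corner.
image = np.zeros((16, 16))
rows, cols = np.indices((8, 8))
image[:8, :8] = (rows + cols) % 2  # checkerboard texture

keep_fine, num_tokens = route_patches(image)
print(keep_fine)
print("tokens:", num_tokens, "vs fixed patchify:", (16 // 4) ** 2)
```

Here the textured corner keeps its four tokens while each flat group collapses to one, giving 7 tokens instead of the 16 that fixed patchification would use.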

The allocation strategy also adapts across denoising stages. Early noisy stages use fewer tokens for coarse structure; later stages increase token counts for fine detail. On ImageNet 256x256, DC-DiT beats the DiT baseline on both FID and IS under parameter-matched and compute-matched comparisons. It warm-starts from pretrained DiT checkpoints, needing at most one-eighth of the original training steps, and composes with other dynamic compute methods for further inference savings.
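
The stage-dependent budget can be illustrated with a simple schedule. DC-DiT's allocation is learned, so the linear ramp, the `min_tokens` and `max_tokens` values, and both function names below are assumptions made for the sake of a worked example. The cost estimate further assumes per-step cost grows linearly with token count, which ignores the quadratic term in attention.

```python
def token_budget(step, num_steps, min_tokens=64, max_tokens=256):
    """Tokens to use at a denoising step (0 = noisiest, num_steps - 1 = final).

    A linear ramp: few tokens early for coarse structure, more later for detail.
    """
    progress = step / max(num_steps - 1, 1)
    return round(min_tokens + progress * (max_tokens - min_tokens))

def relative_token_compute(num_steps, min_tokens=64, max_tokens=256):
    """Total tokens processed, as a fraction of always using max_tokens."""
    total = sum(token_budget(s, num_steps, min_tokens, max_tokens)
                for s in range(num_steps))
    return total / (num_steps * max_tokens)

budgets = [token_budget(s, 5) for s in range(5)]
print(budgets)                    # [64, 112, 160, 208, 256]
print(relative_token_compute(5))  # 0.625
```

With these assumed numbers the schedule processes 62.5% of the tokens a fixed budget would. The figure illustrates the mechanism only and is not a result from the paper.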

Key takeaways:

- Dynamic token allocation by information density avoids wasting compute on low-information regions.

- Compression strategy adapts across denoising stages: fewer tokens early for coarse structure, more tokens later for fine detail.

- Warm-starts from existing DiT checkpoints. Low barrier to adoption.

Also Worth Noting

Today's Observation

CLIP vision encoders (2021), ViT fixed patches (2020), full-attention prefill (2017). These components were adopted not because they were optimal, but because they were reliable enough under the scaling-up paradigm. When compute is abundant, nobody risks architectural change to save 20% of redundant computation.

Efficiency constraints change the evaluation criteria. For a 2B model running on-device, a 256K context that must respond in real time, or image generation on a tight inference budget, "good enough" stops being good enough. Penguin-VL finds that CLIP's contrastive objective is mismatched with fine-grained understanding. FlashPrefill finds that attention sparsity patterns cost almost nothing to identify. DC-DiT finds that fixed-size patches spend as much compute on low-information regions as on detailed ones. Three independent findings, one conclusion: the assumptions under which these components were adopted no longer hold under current efficiency constraints. They are all being questioned at once because deployment-side hard constraints (on-device compute, latency budgets, inference costs) have tightened rapidly over the past two years, pushing redundancies that were "optimizable but not worth the effort" into "must fix" territory.

The second-order effect matters more. Once a "standard component" is disproved under specific constraints, the same questioning logic spreads quickly to other inherited designs. If you work on model optimization or system architecture, list every component in your pipeline that's there because "everyone uses it." Check each one against the assumptions from when it was adopted versus your current deployment constraints. The next default component to be replaced is probably already on your list.