Today's Overview

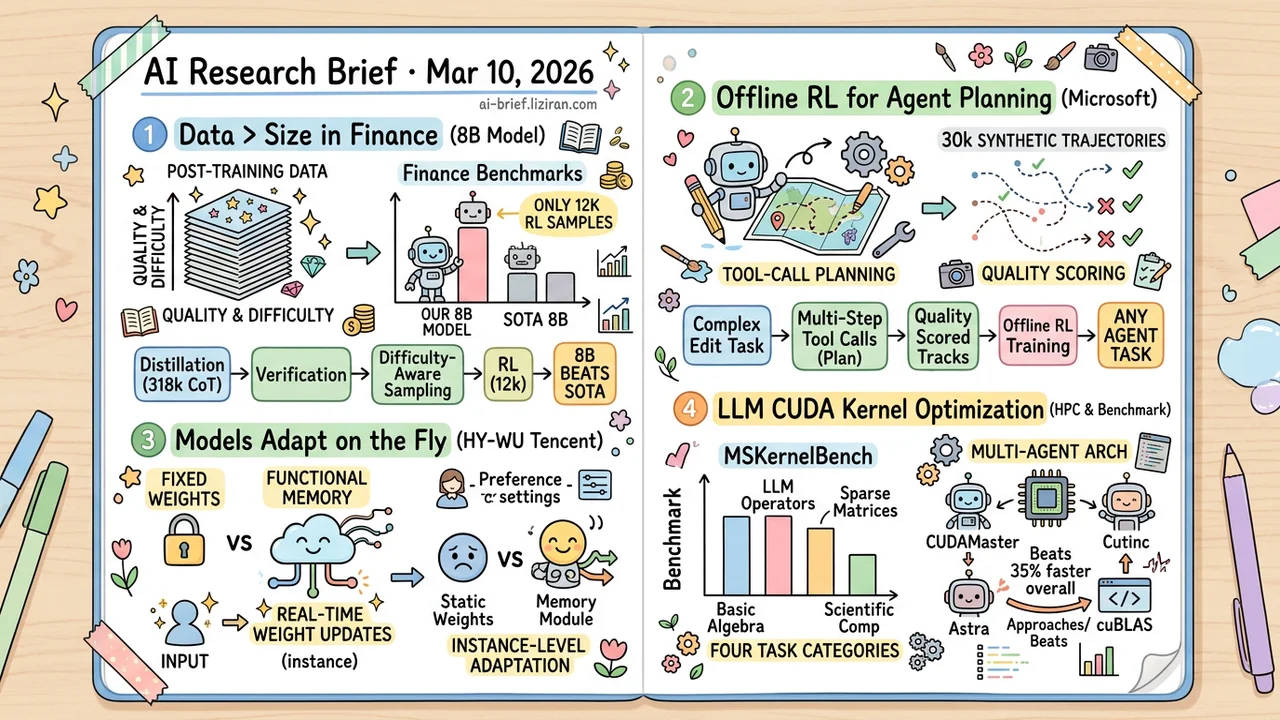

- Post-Training Data Matters More Than Model Size in Vertical Domains. A systematic ablation in finance shows that distillation quality control plus difficulty-aware sampling lets an 8B model beat same-scale SOTA with just 12k RL samples.

- Offline RL Turns Agent Planning From Guesswork Into Engineering. Microsoft trains tool-call planning on synthetic trajectories with quality scoring. The approach transfers to any multi-step agent task.

- Models Shouldn't Be Locked to Fixed Weights After Deployment. Tencent's HY-WU introduces a functional memory module that generates instance-level weight updates in real time, skipping test-time optimization overhead.

- LLM CUDA Kernel Optimization Expands to General HPC. A new benchmark, MSKernelBench, covers four task categories. A multi-agent architecture runs about 35% faster overall than the previous best method.

Featured

01 Training Post-Training Data Beats Model Scale in Vertical Domains

A systematic ablation study in finance delivers a clear verdict: post-training data quality and difficulty distribution matter more than model scale for vertical-domain performance. The team built two datasets: 318k multi-stage distilled and verified chain-of-thought examples for SFT, and 12k "hard but verifiable" tasks for RL. SFT's value lies in distillation source selection and CoT quality control, giving the model a solid reasoning foundation.

RL introduces difficulty-aware sampling that keeps only samples where the reward signal is precise and difficulty is moderate. Too easy means nothing learned. Unverifiable means noise. The 8B model consistently beat same-scale open-source SOTA across nine finance benchmarks spanning general financial tasks, sentiment analysis, and numerical reasoning.
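The filtering idea can be sketched in a few lines. This is a minimal illustration, not the paper's implementation: it assumes difficulty is approximated by a pass rate measured over sampled rollouts, and the `Task` fields, the function name, and the `low`/`high` thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    task_id: str
    verifiable: bool   # True if the answer can be checked automatically (assumed field)
    pass_rate: float   # fraction of sampled rollouts judged correct (assumed difficulty proxy)


def difficulty_aware_filter(tasks, low=0.1, high=0.7):
    """Keep verifiable tasks whose pass rate falls inside [low, high].

    The thresholds are illustrative, not values reported by the study.
    """
    return [t for t in tasks if t.verifiable and low <= t.pass_rate <= high]


tasks = [
    Task("easy", True, 1.0),           # always solved: nothing left to learn
    Task("moderate", True, 0.4),       # solvable but not trivial: kept
    Task("unverifiable", False, 0.4),  # reward cannot be checked: noise
    Task("impossible", True, 0.0),     # never solved: no positive signal
]

kept = difficulty_aware_filter(tasks)
print([t.task_id for t in kept])  # ['moderate']
```

Only the task that is both checkable and of intermediate difficulty survives, which is how a small RL set can stay dense in useful signal.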

The full data engineering pipeline (distillation, verification, difficulty filtering) carries no finance-specific design. It should transfer to other vertical domains, though real-world validation is still needed.

Key takeaways:

- Post-training data quality and difficulty distribution outweigh model scale for vertical-domain performance.
- Difficulty-aware sampling lets RL generalize effectively from just 12k samples.
- The distillation → verification → difficulty filtering pipeline is reusable, but cross-domain transfer still needs validation.

Source: Unlocking Data Value in Finance: A Study on Distillation and Difficulty-Aware Training

02 Agent Offline RL Turns Agent Planning Into Engineering

Decompose image style editing into tool-call sequences, then train planning with offline RL on quality-scored trajectories. Microsoft's framework matters because the pattern is transferable. The core idea: build an orthogonal library of primitive transformation tools, then let a vision-language model (Qwen3-VL) use chain-of-thought reasoning to plan each step's tool choice and parameters.

Training data is cleverly sourced: roughly 30k synthetic trajectories carrying reasoning chains, planning sequences, and quality scores. This addresses the supervision data gap for agent planning tasks. The 4B and 8B models outperform baselines on most compositional tasks, a result confirmed by human evaluation.

The real significance goes beyond image editing. Any agent task requiring multi-step tool calls can adopt this same "synthetic trajectories + quality scoring + offline RL" recipe to train planning systematically.
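One common way to use quality scores in offline training is to weight each trajectory's likelihood by its exponentiated, baseline-subtracted score, in the style of advantage-weighted regression. The sketch below shows that weighting only. It is an assumption about how such a recipe could look, not the method from the paper, and the function names, `temperature`, and `max_weight` are hypothetical.

```python
import math


def trajectory_weights(scores, temperature=1.0, max_weight=20.0):
    """Map quality scores to weights exp((score - mean) / temperature), clipped.

    Subtracting the mean score acts as a simple baseline, so above-average
    trajectories get weight > 1 and below-average ones get weight < 1.
    """
    baseline = sum(scores) / len(scores)
    return [min(math.exp((s - baseline) / temperature), max_weight) for s in scores]


def weighted_nll(log_probs, weights):
    """Weighted negative log-likelihood, normalized by the total weight.

    log_probs[i] stands in for the policy's log-probability of trajectory i.
    """
    total = sum(weights)
    return -sum(w * lp for w, lp in zip(weights, log_probs)) / total


scores = [0.9, 0.5, 0.1]          # illustrative quality scores
log_probs = [-1.0, -2.0, -3.0]    # illustrative policy log-probabilities

weights = trajectory_weights(scores)
loss = weighted_nll(log_probs, weights)
print(weights, loss)
```

Because the highest-scored trajectory carries the largest weight, minimizing this loss pulls the planner toward high-quality plans without any online interaction.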

Key takeaways:

- Complex editing reframed as agent tool-call planning, replacing trial-and-error prompt optimization with offline RL.
- 30k synthetic trajectories with reasoning chains solve the lack of supervised data for agent planning.
- The "tool library + trajectory scoring + offline RL" pattern transfers to any multi-step agent task.

Source: Agentic Planning with Reasoning for Image Styling via Offline RL

03 Architecture Models Shouldn't Stay Frozen After Deployment

Foundation models are becoming long-running deployed systems, but weight adaptation is stuck in the previous era. New task or shifted user preference? Either fine-tune and overwrite old knowledge, or force a single parameter set to handle everything. Tencent's HY-WU takes a different path: instead of repeatedly rewriting shared weights, it introduces a "functional memory" module. A neural network generator synthesizes weight updates conditioned on current input, producing instance-specific operator parameters in real time.

No retraining or test-time optimization is needed after deployment; the model keeps adapting on the fly. The paper validates on image editing, but the architectural pattern matters more: shifting adaptation from "overwriting a single weight point" to "navigating weight space on demand."
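The general pattern of generating a weight update from the input, rather than rewriting shared weights, can be shown with a toy hypernetwork. This is a sketch under strong assumptions: the rank-1 update, the linear generator, and every name here (`UpdateGenerator`, `adapted_forward`) are hypothetical and are not taken from HY-WU.

```python
import random


def matvec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


class UpdateGenerator:
    """Toy generator: maps a condition vector to a rank-1 weight update."""

    def __init__(self, cond_dim, out_dim, in_dim, seed=0):
        rng = random.Random(seed)
        self.to_u = [[rng.gauss(0, 0.1) for _ in range(cond_dim)] for _ in range(out_dim)]
        self.to_v = [[rng.gauss(0, 0.1) for _ in range(cond_dim)] for _ in range(in_dim)]

    def __call__(self, cond):
        u = matvec(self.to_u, cond)
        v = matvec(self.to_v, cond)
        # Outer product u v^T gives an out_dim x in_dim update.
        return [[ui * vj for vj in v] for ui in u]


def adapted_forward(base_w, generator, cond, x):
    """Apply (base_w + generated update) to x without modifying base_w."""
    delta = generator(cond)
    w = [[b + d for b, d in zip(b_row, d_row)] for b_row, d_row in zip(base_w, delta)]
    return matvec(w, x)


base_w = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]   # shared weights, never overwritten
gen = UpdateGenerator(cond_dim=2, out_dim=2, in_dim=3)
x = [1.0, 2.0, 3.0]

print(adapted_forward(base_w, gen, [1.0, 0.0], x))  # one instance-specific result
print(adapted_forward(base_w, gen, [0.0, 1.0], x))  # a different one, same base_w
```

Each call builds a fresh effective weight matrix for that input, so two conditions can diverge while the shared weights stay untouched.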

Key takeaways:

- Under static weights, continual learning and personalization fundamentally interfere with each other. A single parameter point cannot serve diverging objectives.
- HY-WU's memory module generates instance-level weight updates in real time, avoiding test-time optimization overhead.
- Worth following for systems requiring post-deployment adaptation: recommendation engines, personalized assistants, evolving user preferences.

04 Code Intelligence LLM CUDA Optimization Goes Beyond ML Operators

LLM-driven CUDA kernel optimization has mostly been validated on PyTorch operators, yet the bulk of GPU performance engineering lives in general HPC and scientific computing. CUDAMaster extends the optimization scope to sparse matrix operations, scientific computing routines, and more. It ships with MSKernelBench, a cross-domain evaluation benchmark covering basic algebra, LLM operators, sparse matrices, and scientific computing.

The system uses a multi-agent architecture with hardware profiling and automatic compilation/execution toolchains. Overall it runs about 35% faster than Astra, the previous best method. Some operators approach or beat cuBLAS. This moves "LLM as performance engineer" from demo toward practical use, though results are still primarily benchmark-level. Replacing hand-tuned kernels in real engineering workflows remains a ways off.

Key takeaways:

- MSKernelBench is the first multi-domain CUDA kernel optimization benchmark, covering ML through scientific computing.
- Multi-agent + hardware-aware architecture runs 35% faster overall; some kernels beat cuBLAS.
- Direction is right, but still at the benchmark stage. Real engineering deployment needs more work.

Source: Making LLMs Optimize Multi-Scenario CUDA Kernels Like Experts