Today's Overview

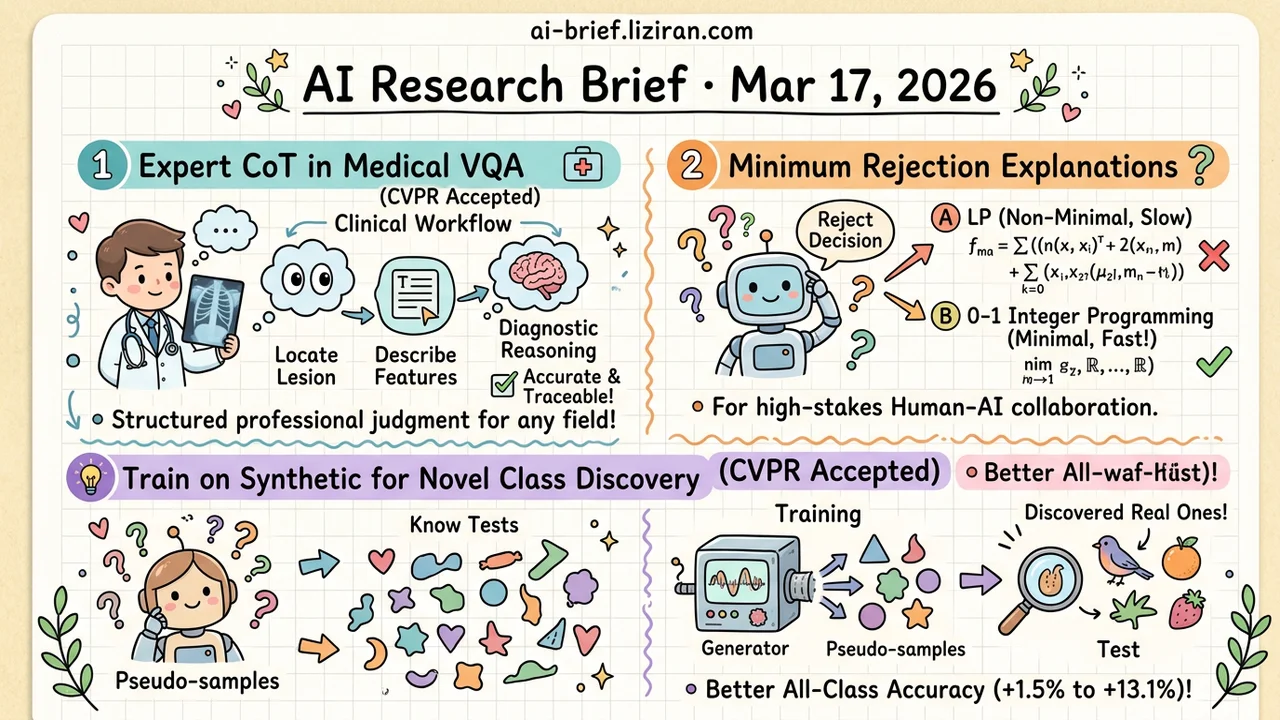

- Design CoT Supervision From Domain Experts' Actual Reasoning Process. In medical VQA, using structured clinical workflows as CoT steps improves both accuracy and traceability. The approach transfers to any vertical requiring structured professional judgment. CVPR accepted.

- How Few Features Reproduce a Model's Rejection Decision? Framing minimum abductive explanation as 0-1 integer programming yields solutions faster than methods that don't guarantee minimality. Limited to linear models, but the problem framing matters for high-stakes human-AI collaboration.

- Train on Synthetic Novel Classes to Discover Real Ones at Inference. Ditching hash encoding for a pure feature-space approach eliminates the train-inference objective mismatch. Up to +13.1% all-class accuracy across seven benchmarks. CVPR accepted.

Featured

01 Reasoning Expert Reasoning Structure Sets the Ceiling for CoT

Step-CoT uses the actual clinical diagnostic workflow — lesion localization, feature description, diagnostic reasoning — as CoT supervision steps. Every reasoning step has an explicit professional basis and audit trail, rather than letting the model free-form its way through a chain. The dataset covers 10K+ real clinical cases with 70K VQA pairs, each chain annotated with structured intermediate steps by domain experts.

The training framework introduces a dynamic graph-based focus mechanism that teaches the model which steps are diagnostically critical and which are noise. This beats naive equal-weighting of all intermediate steps. Accuracy and interpretability improve in lockstep. CVPR accepted.
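To make the contrast with equal weighting concrete, here is a minimal Python sketch. It is not Step-CoT's graph-based mechanism, whose details are not described here; it only shows how per-step losses could be aggregated with softmax weights instead of a plain mean. The function names, the loss values, and `focus_scores` are all hypothetical.

```python
import math

def softmax(scores):
    """Turn raw importance scores into weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def equal_weight_loss(step_losses):
    """Baseline: every intermediate step counts the same."""
    return sum(step_losses) / len(step_losses)

def focus_weighted_loss(step_losses, focus_scores):
    """Hypothetical alternative: steps with higher focus scores dominate."""
    weights = softmax(focus_scores)
    return sum(w * l for w, l in zip(weights, step_losses))

# Illustrative values only; the step names follow the workflow in the text.
steps = ["lesion localization", "feature description", "diagnostic reasoning"]
step_losses = [0.2, 0.4, 1.2]
focus_scores = [0.0, 0.0, 2.0]  # assumed: the last step is the critical one

baseline = equal_weight_loss(step_losses)
focused = focus_weighted_loss(step_losses, focus_scores)
print(baseline, focused)
```

With equal scores the weighted loss reduces to the plain mean. Raising one step's score shifts the training signal toward that step.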

The transfer value is clear: any vertical requiring professional judgment — legal reasoning, financial analysis, code review — can replace free-form CoT supervision with the domain expert's actual reasoning structure. Don't hope the model discovers a good reasoning path on its own.

Key takeaways:

- Structuring CoT supervision around domain experts' real reasoning workflows outperforms free-form chains on both accuracy and traceability

- A dynamic graph focus mechanism helps the model distinguish critical reasoning steps from noise

- Transferable to any vertical where structured professional judgment matters

Source: Step-CoT: Stepwise Visual Chain-of-Thought for Medical Visual Question Answering

02 Interpretability When a Model Says "I'm Not Sure," Users Need to Know Where

A medical diagnostic system refuses to give a judgment. The doctor doesn't want "insufficient confidence." They want to know which specific indicators caused the hesitation. This explainable AI (XAI) work defines the problem precisely: find the minimum set of features sufficient to reproduce the model's rejection decision, that is, a minimum abductive explanation.

For accepted samples, the authors improve an existing log-linear-time algorithm. For rejected samples, the problem becomes a 0-1 integer linear program. That is NP-hard in theory, but in practice their solver runs faster than LP-based methods that don't guarantee minimality.
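The following Python sketch illustrates why rejection is the harder case, under assumptions the summary does not state. It assumes a linear score `w·x + b`, features bounded in `[lo, hi]`, acceptance when the score is at or above a threshold, and rejection when the score falls inside a band `[t_lo, t_hi]`. An explanation fixes some features at their observed values and is valid if the decision holds for every value of the remaining features. The accepted case has one constraint, so a sort-based greedy pass solves it. The rejected case has two, which is what turns it into a 0-1 program. The brute-force search below stands in for an ILP solver and is only practical for a handful of features; none of this is the authors' code.

```python
from itertools import combinations

EPS = 1e-9  # tolerance for floating-point comparisons

def slacks(w, x, lo, hi):
    """How far each feature could push the score down / up if left free."""
    down, up = [], []
    for wi, xi, l, h in zip(w, x, lo, hi):
        contrib = wi * xi
        down.append(contrib - min(wi * l, wi * h))
        up.append(max(wi * l, wi * h) - contrib)
    return down, up

def score_of(w, b, x):
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def explain_accept(w, b, x, lo, hi, threshold):
    """Accepted sample (score >= threshold): greedy after sorting, O(n log n)."""
    down, _ = slacks(w, x, lo, hi)
    budget = score_of(w, b, x) - threshold
    free = set()
    # Free the cheapest features first; with one constraint this is optimal.
    for i in sorted(range(len(w)), key=lambda i: down[i]):
        if down[i] > budget + EPS:
            break
        budget -= down[i]
        free.add(i)
    return sorted(set(range(len(w))) - free)

def explain_reject(w, b, x, lo, hi, t_lo, t_hi):
    """Rejected sample (t_lo <= score <= t_hi): two constraints, brute force."""
    score = score_of(w, b, x)
    if not (t_lo - EPS <= score <= t_hi + EPS):
        raise ValueError("sample is not in the rejection band")
    down, up = slacks(w, x, lo, hi)
    n = len(w)
    # Try the largest free sets first, so the first hit fixes the fewest features.
    for k in range(n, -1, -1):
        for free in combinations(range(n), k):
            stays_above = sum(down[i] for i in free) <= score - t_lo + EPS
            stays_below = sum(up[i] for i in free) <= t_hi - score + EPS
            if stays_above and stays_below:
                return sorted(set(range(n)) - set(free))

# Toy instance (hypothetical numbers): score = 2 - 1 + 0.5 + 1 = 2.5
w, b = [2.0, -1.0, 0.5, 1.0], 0.0
x = [1.0, 1.0, 1.0, 1.0]
lo, hi = [0.0] * 4, [1.0] * 4

print(explain_accept(w, b, x, lo, hi, threshold=1.0))        # [0]
print(explain_reject(w, b, x, lo, hi, t_lo=1.0, t_hi=3.0))   # [0, 1]
```

On this toy instance, one feature is enough to explain acceptance, while rejection needs two: the score must be kept from leaving the band in either direction.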

The method is restricted to linear models — still far from neural network deployment. But "explain a rejection with the fewest possible features" as a problem definition has real value for human-AI collaboration interfaces in high-stakes domains.

Key takeaways:

- Minimum abductive explanations reproduce rejection decisions with the fewest features, which is more actionable than full feature attribution

- The 0-1 integer programming formulation, despite being NP-hard in theory, runs faster in practice than LP-based methods that don't guarantee minimality

- Limited to linear models, but the "explain the rejection" framing applies broadly to high-stakes human-AI interfaces

03 Architecture Create Instead of Memorize: Generate Fake Novel Classes During Training

LTC's idea is direct. Instead of training on known classes only and hoping the model discovers new ones at inference, generate pseudo-novel-class samples during training so the model practices discovery. A lightweight online generator (based on kernel energy minimization and entropy maximization) continuously synthesizes pseudo-samples and co-evolves with the model at near-zero cost.

The approach completely drops hash encoding — the standard tool in on-the-fly category discovery (OCD). Pure feature-space methods eliminate the mismatch between training and inference objectives. Results across seven benchmarks: +1.5% to +13.1% all-class accuracy. CVPR accepted.
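For readers unfamiliar with what "pure feature-space" discovery can look like at inference, here is a generic Python sketch. It is not LTC's procedure: it simply assigns each incoming feature to the nearest class prototype by cosine similarity and opens a new category when nothing is close enough. The class name `OnlineDiscovery`, the threshold value, and the two-dimensional toy features are assumptions made for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(p * q for p, q in zip(a, b))
    norm_a = math.sqrt(sum(p * p for p in a))
    norm_b = math.sqrt(sum(q * q for q in b))
    return dot / (norm_a * norm_b)

class OnlineDiscovery:
    """Hypothetical nearest-prototype classifier that can add categories."""

    def __init__(self, known_prototypes, threshold=0.8):
        self.prototypes = dict(known_prototypes)  # label -> feature vector
        self.threshold = threshold
        self.num_novel = 0

    def assign(self, feature):
        # Find the most similar existing prototype.
        best_label, best_sim = None, float("-inf")
        for label, proto in self.prototypes.items():
            sim = cosine(feature, proto)
            if sim > best_sim:
                best_label, best_sim = label, sim
        if best_sim >= self.threshold:
            return best_label
        # Nothing is close enough: register the sample as a new category.
        self.num_novel += 1
        label = f"novel_{self.num_novel}"
        self.prototypes[label] = list(feature)
        return label

model = OnlineDiscovery({"cat": [1.0, 0.0], "dog": [0.0, 1.0]})
first = model.assign([0.9, 0.1])     # close to the "cat" prototype
second = model.assign([-1.0, 0.0])   # far from both known classes
third = model.assign([-0.9, -0.1])   # close to the category just created
print(first, second, third)
```

Because the same similarity comparison is used for known and novel classes, there is no separate encoding step whose objective could diverge from the one used in training.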

For e-commerce classification, content moderation, or any deployment that must continuously adapt to new categories, this "create first, then recognize" path is worth tracking.

Key takeaways:

- Training on generated pseudo-novel samples in a pure feature space, with no hash encoding, eliminates the train-inference objective mismatch

- The lightweight generator co-evolves with the model, adding no inference overhead

- Practical reference for scenarios requiring continuous novel-category discovery: e-commerce, content moderation

Source: Learning through Creation: A Hash-Free Framework for On-the-Fly Category Discovery