Today's Overview

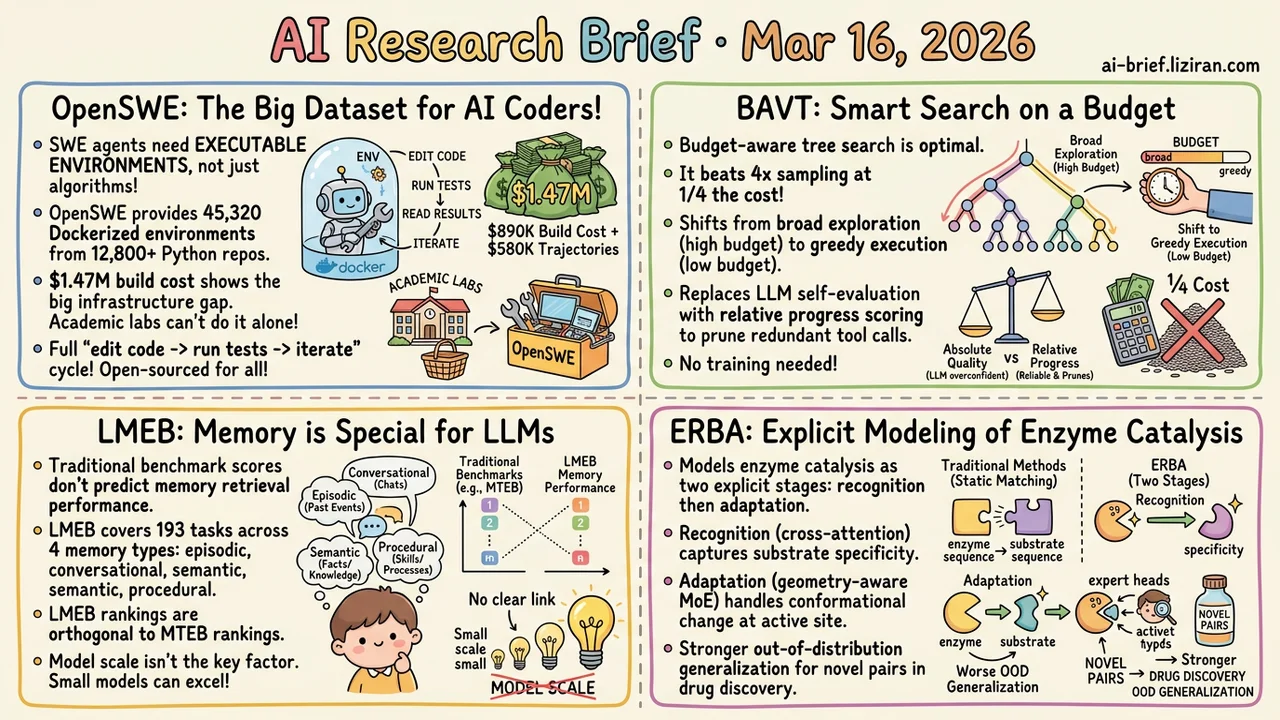

- SWE agent training is bottlenecked by executable environments, not algorithms. OpenSWE open-sources 45,320 Dockerized training environments across 12,800+ repos. The $1.47M build cost shows why academic labs can't fill this infrastructure gap alone.

- A budget-aware tree search beats 4x brute-force sampling at 1/4 the cost. It replaces LLM self-evaluation with relative progress scoring and needs no training to integrate into existing agent systems.

- Traditional embedding benchmark scores don't predict memory retrieval performance. LMEB covers 193 tasks across four memory types and finds results orthogonal to MTEB rankings. Model scale isn't the deciding factor either.

- Enzyme catalysis is modeled as explicit "recognition then adaptation" stages. An MoE architecture routes by active-site type, yielding better out-of-distribution generalization for novel enzyme-substrate pairs in drug discovery.

Featured

01 Code Intelligence SWE Agents Need Infrastructure, Not Better Algorithms

Publicly available SWE training environments are limited in scale and repo diversity. Most lack executable feedback loops — agents can't practice the full "edit code → run tests → read results → iterate" cycle. OpenSWE fills that gap with a synthetic approach: a multi-agent pipeline on a 64-node cluster auto-generates Docker environments at scale. The result is 45,320 executable environments covering 12,800+ Python repos, with all Dockerfiles, eval scripts, and infra fully open-sourced.

The project cost roughly $1.47M ($890K for environment construction, $580K for trajectory sampling). That price tag alone explains why academic groups struggle to build SWE agent training infrastructure independently. Quality filtering matters too: difficulty-based screening removes trivially easy or unsolvable instances, distilling ~13,000 training trajectories from ~9,000 quality-assured environments.
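One way to picture the difficulty screen is as a pass-rate filter over sampled attempts. The sketch below is an assumption about how such a filter could look, not OpenSWE's actual pipeline: the function name, the `pass_rates` input format, the thresholds, and the environment ids are all hypothetical.

```python
def filter_by_difficulty(pass_rates, min_rate=0.1, max_rate=0.9):
    """Keep environments that are neither unsolvable nor trivially easy.

    pass_rates: dict mapping environment id -> fraction of sampled
    attempts that passed the tests (hypothetical input format).
    The thresholds are illustrative assumptions, not values from the paper.
    """
    kept = {}
    for env_id, rate in pass_rates.items():
        # A rate near 0 suggests an unsolvable instance; near 1, a trivial one.
        if min_rate <= rate <= max_rate:
            kept[env_id] = rate
    return kept


# Toy, made-up pass rates for illustration only.
sample = {"env_a": 0.0, "env_b": 0.5, "env_c": 1.0, "env_d": 0.25}
kept = filter_by_difficulty(sample)
```

Here `env_a` (never solved) and `env_c` (always solved) are dropped, while the two mid-difficulty environments survive.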

OpenSWE-32B and 72B (trained on Qwen2.5) hit 62.4% and 66.0% on SWE-bench Verified, the best for that model family. One side finding worth flagging: SWE training boosted out-of-domain performance by +12 on math reasoning and +5 on science benchmarks. Iterative debugging in code environments may transfer positively to general reasoning. Model training teams should pay attention.

Key takeaways:

- The real bottleneck for SWE agent training is executable environments, not algorithms. OpenSWE's 45,000+ open-sourced environments bridge the gap from demo to production.

- $1.47M build cost reveals the infrastructure barrier. Full open-sourcing gives academic labs a real on-ramp.

- SWE training's positive transfer to math and science reasoning challenges the assumption that code training only improves code ability.

Source: daVinci-Env: Open SWE Environment Synthesis at Scale

02 Agent Budget-Aware Agents Outperform 4x Brute-Force Sampling

The binding constraint for production agents isn't model capability. It's token and tool-call budgets: one runaway session burns the quota. BAVT encodes remaining budget ratio directly into its search strategy. With budget to spare, it explores broadly. As budget runs low, it shifts to greedy execution. This transition is provably optimal and requires no extra training or tuning.
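The shift from broad exploration to greedy execution can be illustrated with a softmax whose temperature shrinks with the remaining budget ratio. This is a minimal sketch of the general idea only. The temperature schedule, the function name `selection_probs`, and the candidate values are assumptions for illustration, not BAVT's actual formulation.

```python
import math


def selection_probs(values, budget_left, budget_total, max_temp=1.0):
    """Softmax over candidate values with a budget-scaled temperature.

    With a full budget the distribution is spread out (exploration).
    As the remaining ratio approaches zero it collapses onto the
    best candidate (greedy execution). Assumes budget_total > 0.
    """
    ratio = max(0.0, min(1.0, budget_left / budget_total))
    temp = max_temp * ratio
    if temp < 1e-6:
        # Budget exhausted: put all probability on the best candidate.
        best = max(range(len(values)), key=lambda i: values[i])
        return [1.0 if i == best else 0.0 for i in range(len(values))]
    top = max(values)
    exps = [math.exp((v - top) / temp) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical value estimates for three candidate next steps.
candidates = [0.2, 0.5, 0.4]
explore = selection_probs(candidates, budget_left=100, budget_total=100)
nearly_out = selection_probs(candidates, budget_left=5, budget_total=100)
exhausted = selection_probs(candidates, budget_left=0, budget_total=100)
```

With these toy values, the best candidate receives a modest share of the probability at full budget, most of it when 5% of the budget remains, and all of it once the budget is gone.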

A second smart design choice: evaluating each step by "relative progress" rather than "absolute quality." LLM self-evaluation is notoriously overconfident. Relative scoring sidesteps that problem and reliably prunes redundant tool calls. On four multi-hop QA benchmarks, BAVT under strict low budgets outperformed baselines given 4x the resources.
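Relative scoring can be sketched as keeping only the steps judged to have moved the search forward compared with their parent. In the sketch below, the `delta` values stand in for a judge's relative-progress rating; the scale, the `min_delta` threshold, and the example actions are hypothetical, and the paper's actual scoring rule may differ.

```python
def expand(parent_value, candidates, min_delta=0.0):
    """Keep candidate steps that make progress relative to the parent.

    candidates: list of (action, delta) pairs, where delta is the judged
    progress of the step compared with the parent state (hypothetical
    scale: positive = closer to an answer, zero or negative = no gain).
    Returns a list of (action, value) pairs for the surviving children.
    """
    kept = []
    for action, delta in candidates:
        if delta <= min_delta:
            # No gain over the parent: prune instead of spending budget on it.
            continue
        kept.append((action, parent_value + delta))
    return kept


# Made-up candidate tool calls for illustration only.
steps = [
    ("search: new sub-question", 0.3),
    ("search: same query again", 0.0),   # redundant call, no new information
    ("open unrelated page", -0.2),
]
children = expand(parent_value=0.5, candidates=steps)
```

Only the first action survives; the repeated query and the off-topic page are pruned because they add nothing relative to the parent state.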

Key takeaways:

- Budget-aware tree search beats brute-force sampling at 1/4 the resource cost.

- Relative progress scoring replaces absolute scoring, solving the overconfident self-evaluation problem that wastes exploration.

- Pure inference-time framework, no training needed. Drop it into existing agent systems.

Source: Spend Less, Reason Better: Budget-Aware Value Tree Search for LLM Agents

03 Retrieval Embedding Benchmarks Don't Predict Memory Retrieval

MTEB and similar benchmarks test "given a query, find the relevant passage." Memory-augmented systems face a different problem: information is fragmented, spans long time windows, and depends heavily on context. LMEB targets this gap with 193 zero-shot retrieval tasks across four memory types — episodic, conversational, semantic, and procedural — evaluating 15 mainstream embedding models on 22 datasets.

The headline finding: LMEB and MTEB performance are orthogonal. Models that rank high on traditional passage retrieval don't necessarily lead on long-horizon memory retrieval. Scale doesn't settle it either. 10B-parameter models don't consistently beat sub-billion ones.
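One way to read "orthogonal" is a rank correlation near zero between the two leaderboards. The sketch below computes Spearman's rank correlation in plain Python, assuming no tied scores. The model names and scores are placeholder numbers chosen for illustration, not results from LMEB or MTEB, and the summary does not state which statistic the authors actually report.

```python
def ranks(scores):
    """Map each model to its rank (1 = highest score). Assumes no ties."""
    order = sorted(scores, key=scores.get, reverse=True)
    return {name: i + 1 for i, name in enumerate(order)}


def spearman(scores_x, scores_y):
    """Spearman rank correlation for two score dicts over the same models."""
    rx, ry = ranks(scores_x), ranks(scores_y)
    n = len(rx)
    d2 = sum((rx[m] - ry[m]) ** 2 for m in rx)
    return 1 - 6 * d2 / (n * (n * n - 1))


# Placeholder scores for four hypothetical models on two benchmarks.
bench_a = {"m1": 70, "m2": 65, "m3": 60, "m4": 55}
bench_b = {"m1": 40, "m2": 52, "m3": 38, "m4": 50}
rho = spearman(bench_a, bench_b)
```

A value of `rho` close to 1 would mean the two benchmarks rank models the same way; a value close to 0 means that knowing a model's rank on one tells you little about its rank on the other.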

Key takeaways:

- Traditional retrieval benchmark scores can't predict memory retrieval ability. Evaluate them separately when choosing models.

- Episodic, conversational, semantic, and procedural memory each place different demands on embeddings.

- Model scale shows no stable positive correlation with memory retrieval performance.

Source: LMEB: Long-horizon Memory Embedding Benchmark

04 AI for Science Modeling Enzyme Catalysis as Two Distinct Stages

Predicting enzyme kinetic parameters (kcat, Km, Ki) is hard because catalysis unfolds in stages: the enzyme first recognizes the substrate, then adapts through conformational change. Most existing methods collapse this into a static sequence-matching problem. ERBA models the two stages explicitly. Cross-attention captures substrate recognition specificity. A geometry-aware MoE then handles conformational adaptation at the active site, routing different pocket structures to different experts.
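The routing step can be illustrated with a generic top-1 mixture-of-experts gate: a gating layer scores each expert for a given pocket representation, and the sample goes to the highest-scoring one. This is a sketch of standard MoE gating under stated assumptions, not ERBA's architecture. The two-dimensional pocket features and the gate weights are hypothetical, and in a real model the weights would be learned.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    top = max(xs)
    exps = [math.exp(x - top) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route(pocket_features, gate_weights):
    """Pick an expert for one sample with top-1 gating.

    pocket_features: feature vector describing the active-site pocket
    (hypothetical representation).
    gate_weights: one weight vector per expert (illustrative values).
    Returns (expert_index, gate_probabilities).
    """
    logits = [
        sum(w * x for w, x in zip(weights, pocket_features))
        for weights in gate_weights
    ]
    probs = softmax(logits)
    expert = max(range(len(probs)), key=probs.__getitem__)
    return expert, probs


# Two hypothetical experts, each sensitive to one pocket feature.
gate = [[1.0, 0.0], [0.0, 1.0]]
expert_a, probs_a = route([2.0, 0.5], gate)
expert_b, probs_b = route([0.1, 1.5], gate)
```

The two toy pockets land on different experts, which is the behavior the paragraph above describes: structurally different active sites get specialized handling.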

The design tracks biological intuition: recognition and adaptation are fundamentally different mechanisms. Forcing them into a single representation loses information. Performance improves consistently across all three kinetic endpoints. Out-of-distribution generalization shows the most pronounced gains, making this more practical for drug discovery scenarios involving novel enzyme-substrate combinations.

Key takeaways:

- The "recognition → adaptation" two-stage nature of catalysis is explicitly modeled, better matching biological mechanism than static matching.

- MoE routes samples by pocket structure, giving the model specialized handling per active-site type.

- Stronger out-of-distribution generalization matters most for drug discovery, where novel enzyme-substrate pairs are the norm.