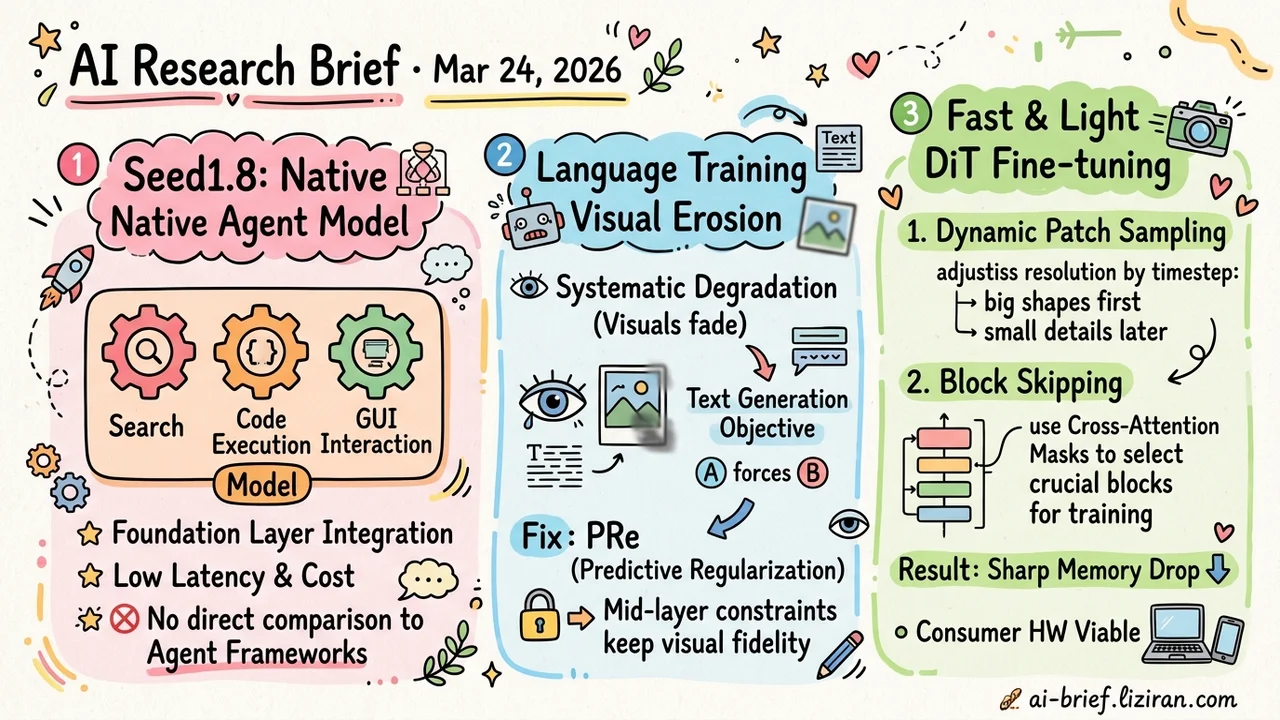

Today's Overview

- Seed1.8 unifies search, code execution, and GUI interaction at the foundation layer. ByteDance's agent-native model optimizes for latency and cost in production, but the model card lacks a direct comparison against general-purpose model + framework setups.

- Language training systematically erodes visual representations in multimodal models. Cross-architecture, cross-scale diagnostics trace the problem to a single text generation objective that forces models to sacrifice visual fidelity. PRe mitigates degradation through mid-layer prediction constraints.

- DiT fine-tuning memory drops sharply while matching full fine-tuning quality. Dynamic patch sampling adjusts resolution by timestep; cross-attention masks select critical blocks for fine-tuning. Combined, they make consumer hardware viable for personalized image generation.

Featured

01 Agent Agent-Native Foundation or General Model + Framework?

Seed1.8's thesis is clear: instead of layering agent frameworks on top of general-purpose models, build multi-turn interaction, tool use, and multi-step execution as first-class citizens at the foundation layer. This isn't just a chat model with function calling bolted on. Search, code generation and execution, and GUI interaction share a single interface. The model natively understands how these capabilities coordinate.

Deployment got real attention too. Configurable thinking modes and optimized visual encoding for images and video show the team thought hard about latency and cost in agent workflows. Evaluation goes beyond standard benchmarks with application-aligned workflow tests covering base capabilities, multimodal understanding, and agent behavior.

The model card doesn't compare against the "general model + agent framework" baseline. That's exactly the comparison practitioners want. How much quantifiable advantage does first-class architectural integration deliver? Community benchmarks will have to answer that.

Key takeaways:

- Search, code execution, and GUI interaction are unified at the foundation layer rather than bolted on, an architecture direction worth tracking.

- Latency and cost optimizations signal production intent, not demo-ware.

- No direct comparison against general model + framework setups. Real-world advantage needs independent validation.

Source: Seed1.8 Model Card: Towards Generalized Real-World Agency

02 Multimodal Language Training Erodes Visual Representations

When multimodal LLMs train on language data, their internal visual representations degrade systematically. This CVPR paper diagnoses the problem across architectures and scales. Visual features in LLM middle layers show clear decay in both global structure and patch-level detail compared to initial inputs. The root cause: a single text generation objective forces models to sacrifice visual fidelity for better answer output.

The proposed fix, PRe (Predictive Regularization), is straightforward: force the degraded mid-layer features to predict the initial visual features. This adds a "don't lose this" constraint on visual representations. Experiments confirm the constraint improves vision-language task performance. How large the gains are, and how well they generalize across tasks, can only be judged from the full paper's data.
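To make the "mid-layer features predict the initial visual features" idea concrete, here is a minimal sketch of such a regularizer in plain Python. It is not the paper's implementation: the linear prediction head, the cosine-distance loss, the weight `lam`, and all function names are assumptions chosen for illustration.

```python
import math

def linear(x, weight, bias):
    """Apply a linear map to one feature vector; weight is a list of rows [out][in]."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]

def cosine_distance(a, b, eps=1e-8):
    """Return 1 - cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b + eps)

def predictive_regularizer(mid_feats, init_feats, weight, bias):
    """Mean per-patch distance between predicted and initial visual features.

    mid_feats:  per-patch features taken from a middle LLM layer (assumed input)
    init_feats: per-patch features as they entered the LLM (treated as a fixed target)
    weight, bias: parameters of a hypothetical linear prediction head
    """
    preds = [linear(h, weight, bias) for h in mid_feats]
    dists = [cosine_distance(p, v) for p, v in zip(preds, init_feats)]
    return sum(dists) / len(dists)

def total_loss(text_loss, reg_loss, lam=0.1):
    """Text generation loss plus the weighted regularizer (lam is an assumed hyperparameter)."""
    return text_loss + lam * reg_loss
```

In a real training loop the same term would be computed on tensors with autograd, and the initial features would typically be detached so that only the mid-layer path receives gradient. That detail is also an assumption here, not something the summary states.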

Key takeaways:

- Visual degradation in MLLMs is systematic, not anecdotal. Teams training multimodal models should build this into their diagnostics.

- The text generation objective is the root cause. Training objective design must balance language and vision.

- PRe maintains visual capability through mid-layer prediction constraints. The approach is reusable beyond this specific implementation.

03 Training DiT Fine-Tuning Memory Drops, Quality Holds

Fine-tuning Diffusion Transformers for personalized image generation has a hard memory ceiling. DiT-BlockSkip attacks it with two cuts. First: dynamic patch sampling adjusts patch size by diffusion timestep. Large patches capture global structure early; small patches refine details late. Both get scaled to low resolution before entering the model. Second: block skipping uses cross-attention masks to identify which transformer blocks matter most for personalization, fine-tunes only those, and precomputes residual features for the rest.
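The first cut, timestep-dependent patch sampling, can be sketched as follows. This is an illustration under stated assumptions, not DiT-BlockSkip's code: the linear size schedule, the nearest-neighbor downscaling, the square-image restriction, and every function and parameter name are hypothetical. It also assumes the common convention that a larger `t` means more noise, so the largest patches go to the noisiest (earliest-in-sampling) timesteps.

```python
import random

def patch_size_for_timestep(t, num_timesteps, min_size, max_size):
    """Linearly map timestep t (0 = least noise) to a patch side length."""
    frac = t / (num_timesteps - 1)
    return round(min_size + frac * (max_size - min_size))

def crop(image, top, left, size):
    """Cut a size x size window out of a 2D list-of-lists image."""
    return [row[left:left + size] for row in image[top:top + size]]

def resize_nearest(patch, out_size):
    """Nearest-neighbor resize of a square patch to out_size x out_size."""
    n = len(patch)
    return [
        [patch[(i * n) // out_size][(j * n) // out_size] for j in range(out_size)]
        for i in range(out_size)
    ]

def sample_training_patch(image, t, num_timesteps, min_size, max_size, out_size, rng):
    """Pick a random patch whose size depends on t, then scale it to a fixed low resolution.

    Assumes a square image whose side is at least max_size.
    """
    size = patch_size_for_timestep(t, num_timesteps, min_size, max_size)
    limit = len(image) - size
    top = rng.randint(0, limit)
    left = rng.randint(0, limit)
    return resize_nearest(crop(image, top, left, size), out_size)
```

Because every patch is scaled to the same `out_size` before it reaches the model, the token count per training step stays fixed regardless of how much of the image the patch covers, which is where the memory saving in this sketch comes from.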

Memory usage drops substantially, while output quality stays close to full fine-tuning in both quantitative and qualitative evaluation. The paper mentions on-device deployment (phones, IoT), but actual feasibility needs hardware-specific benchmarks.

Key takeaways:

- Dynamic patch sampling allocates resolution by timestep, balancing global structure and fine detail.

- Cross-attention masks select critical blocks for fine-tuning, avoiding quality loss from blind pruning.

- Accepted at CVPR. On-device deployment potential needs real hardware benchmarks to confirm.

Source: Memory-Efficient Fine-Tuning Diffusion Transformers via Dynamic Patch Sampling and Block Skipping