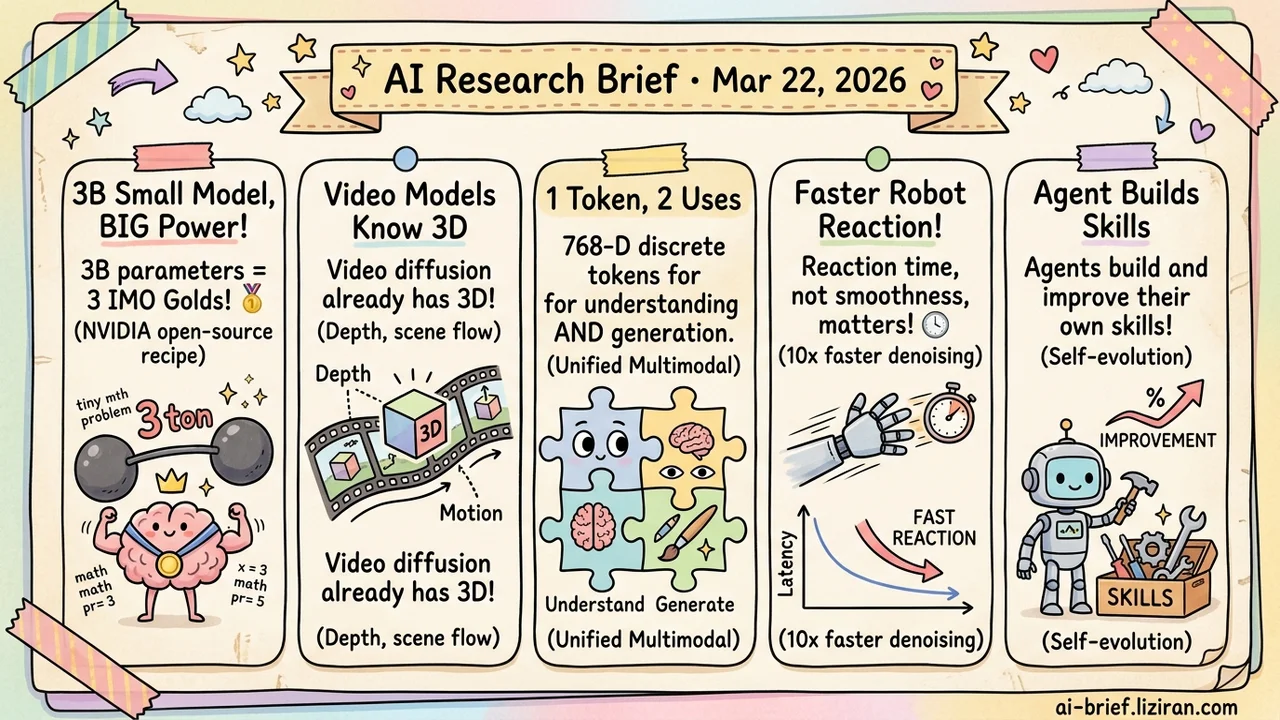

Today's Overview

- Cascade RL plus multi-domain distillation lets 3B active parameters win three olympiad golds. NVIDIA open-sourced the full training recipe. Small-model reasoning ceilings just moved.

- Video diffusion models already encode full 3D spatial priors internally. No 3D annotations or geometry modules needed. Extract intermediate features and you get depth and scene flow prediction.

- 768-dimensional discrete tokens serve both understanding and generation. CubiD uses fine-grained masked diffusion to sidestep high-dimensional combinatorial explosion. One fewer barrier to unified multimodal architectures.

- Reaction latency, not trajectory smoothness, is the real VLA deployment bottleneck. FASTER provides an explicit formula and compresses reactive denoising by roughly 10x.

- Agents that build and iterate their own skills outperform external skill injection. But percentage gains on extremely low baselines deserve a sober second look.

Featured

01 Training 3B Active Params, Three Olympiad Golds. The Secret Is the Recipe.

NVIDIA's Nemotron-Cascade 2 uses just 3B active parameters (30B total in a MoE) to reach gold-medal performance on IMO 2025, IOI, and ICPC finals. That's roughly 20x fewer parameters than DeepSeek V3.2 Speciale at a comparable level. The architecture isn't the breakthrough. The training recipe is.

After SFT, the model enters cascade RL: reinforcement learning that progressively expands across reasoning, coding, and agentic domains. Each domain gets on-policy distillation from the strongest available teacher model. This addresses a classic RL problem: push performance in one domain and the others regress. Multi-domain distillation sets a floor for each domain, so RL gains are kept while regression is prevented.
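A minimal sketch of what a per-domain on-policy distillation term could look like. It assumes a reverse-KL objective, KL(student || teacher), evaluated on tokens the student itself sampled, which is one common formulation of on-policy distillation. The summary above does not state the paper's exact loss, so the function names, the toy logits, and the `teachers` dictionary are all hypothetical.

```python
import math

def log_softmax(logits):
    # Numerically stable log-softmax over one vocabulary distribution.
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]

def reverse_kl(student_logits, teacher_logits):
    """KL(student || teacher) over the vocabulary at one token position."""
    s = log_softmax(student_logits)
    t = log_softmax(teacher_logits)
    return sum(math.exp(ls) * (ls - lt) for ls, lt in zip(s, t))

def on_policy_distill_loss(student_steps, teacher_steps):
    """Mean reverse KL over the positions of a student-sampled sequence."""
    kls = [reverse_kl(s, t) for s, t in zip(student_steps, teacher_steps)]
    return sum(kls) / len(kls)

# Hypothetical toy logits: a 2-token student rollout over a 3-token vocabulary.
student = [[2.0, 0.5, -1.0], [0.0, 1.0, 0.0]]

# Assumption: one teacher per domain, each scoring the same student rollout.
teachers = {
    "math": [[2.5, 0.0, -1.0], [0.0, 2.0, -0.5]],
    "code": [[2.0, 0.5, -1.0], [0.0, 1.0, 0.0]],  # identical to the student
}

losses = {domain: on_policy_distill_loss(student, t) for domain, t in teachers.items()}
print(losses)
```

The term is zero where the student already matches the domain teacher and positive where it has drifted, which is the sense in which distillation can act as a per-domain floor.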

This is the second open-source model to hit three olympiad golds after DeepSeek, but at an order-of-magnitude higher intelligence density per parameter. NVIDIA released both weights and training data. For teams wanting to self-deploy reasoning models, this is the most complete and reproducible small-model training reference available.

Key takeaways:

- Cascade RL with multi-domain on-policy distillation solves the "improve one domain, regress another" problem in RL training.

- 3B active parameters match models 20x larger on reasoning tasks. Small-model reasoning ceilings have been severely underestimated.

- Weights and training data are fully open-source. Self-deployment teams can reproduce the full pipeline.

Source: Nemotron-Cascade 2: Post-Training LLMs with Cascade RL and Multi-Domain On-Policy Distillation

02 Multimodal Video Generation Models Already Understand 3D Space

The default path to 3D understanding: feed massive 3D datasets, add depth sensors, bolt on geometry modules. Explicit 3D input, one way or another. VEGA-3D found a shortcut. Video diffusion models, in learning to generate temporally coherent frames, have already internalized complete 3D structure priors and physical regularities. Extract those intermediate-layer features, inject them into an MLLM through a gated fusion mechanism, and you get depth estimation, normal estimation, and scene flow prediction. No 3D annotations required. The approach outperforms existing methods across 3D scene understanding, spatial reasoning, and embodied manipulation benchmarks.
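As a rough illustration of gated fusion, the sketch below adds a video-model feature to an MLLM token through a learned scalar gate. This is a generic gating pattern, not VEGA-3D's actual module: the scalar gate, the function names, and the assumption that the video feature has already been projected to the MLLM's hidden size are all hypothetical.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def gated_fusion(mllm_token, video_feat, gate_weights, gate_bias=0.0):
    """Return (fused, gate) where fused = mllm_token + gate * video_feat.

    The gate is a scalar in (0, 1) computed from both inputs, so the model
    can learn how much of the video-derived feature to let through.
    """
    concat = mllm_token + video_feat  # list concatenation: [mllm; video]
    gate = sigmoid(sum(w * x for w, x in zip(gate_weights, concat)) + gate_bias)
    fused = [h + gate * f for h, f in zip(mllm_token, video_feat)]
    return fused, gate

# Hypothetical 2-dimensional features and untrained (all-zero) gate weights.
fused, gate = gated_fusion([1.0, 2.0], [2.0, 4.0], [0.0, 0.0, 0.0, 0.0])
print(fused, gate)

# With a strongly negative bias the gate closes and the MLLM token passes
# through almost unchanged.
closed, closed_gate = gated_fusion([1.0, 2.0], [2.0, 4.0], [0.0] * 4, gate_bias=-50.0)
print(closed, closed_gate)
```

A gate that can close is what makes this kind of fusion safe to bolt onto an existing model: where the injected feature is unhelpful, the original token is left close to untouched.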

The data bottleneck for 3D understanding may not require more 3D data. The priors already exist inside video models, waiting to be mined.

Key takeaways:

- Video generation models contain rich 3D spatial priors extractable without explicit 3D supervision.

- Plug-and-play architecture gives existing MLLMs spatial understanding without retraining.

- 3D scene understanding's data bottleneck may be solvable by reusing generative models rather than collecting more 3D data.

Source: Generation Models Know Space: Unleashing Implicit 3D Priors for Scene Understanding

03 Image Gen 768-D Discrete Tokens Can Serve Both Understanding and Generation

Discrete visual tokens for generation and high-dimensional representations for understanding have been separate systems. Generation tokens live in 8–32 dimensions, understanding features in 768, and a combinatorial explosion wall sits between the two. CubiD (CVPR) runs fine-grained masked diffusion directly on 768-D discrete representations: any dimension at any position can be independently masked and predicted. Generation steps stay fixed at T, regardless of feature dimensionality. From 900M to 3.7B parameters the model shows clean scaling behavior, reaching discrete generation SOTA on ImageNet-256.
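The claim that step count is independent of dimensionality can be seen in a toy unmasking loop. The sketch below is an assumption-heavy stand-in, not CubiD's sampler: it uses a cosine schedule (borrowed from MaskGIT-style decoders), reveals entries in random order rather than by model confidence, and replaces the network with a hypothetical `predict` callback.

```python
import math
import random

def unmask_schedule(total_entries, num_steps):
    """Entries still masked after each step, following a cosine schedule."""
    return [int(total_entries * math.cos(math.pi / 2 * t / num_steps))
            for t in range(1, num_steps + 1)]

def generate(num_positions, dim, num_steps, predict, seed=0):
    rng = random.Random(seed)
    # Every (position, dimension) entry starts masked (None).
    tokens = [[None] * dim for _ in range(num_positions)]
    masked = [(p, d) for p in range(num_positions) for d in range(dim)]
    rng.shuffle(masked)
    steps_run = 0
    for remaining in unmask_schedule(len(masked), num_steps):
        # One step reveals many entries at once. A real model would predict
        # them in a single forward pass and choose which to keep by
        # confidence; here the order is random and predict() is a stub.
        while len(masked) > remaining:
            p, d = masked.pop()
            tokens[p][d] = predict(tokens, p, d)
        steps_run += 1
    return tokens, steps_run

def dummy_predict(tokens, position, dimension):
    return 0  # placeholder code index, stands in for the network's output

results = {}
for dim in (8, 768):
    tokens, steps = generate(num_positions=16, dim=dim, num_steps=12, predict=dummy_predict)
    fully_unmasked = all(v is not None for row in tokens for v in row)
    results[dim] = (steps, fully_unmasked)
    print(dim, steps, fully_unmasked)
```

Going from 8 to 768 dimensions changes how many entries each step reveals, not how many steps are run.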

The more important validation: discretized tokens retain their original representation capacity on understanding tasks. One set of tokens genuinely serves both purposes. Teams building vision-language unified architectures should track this direction, though high-dimensional discrete representations still need more generalization evidence on complex scenes.

Key takeaways:

- Fine-grained masked diffusion decouples generation steps from feature dimensionality, bypassing high-dimensional combinatorial explosion.

- The same 768-D discrete tokens validate on both understanding and generation. Shared representation is no longer theoretical.

- Worth following for unified multimodal architectures, but generalization to complex scenes remains unproven.

Source: Cubic Discrete Diffusion: Discrete Visual Generation on High-Dimensional Representation Tokens

04 Robotics Reaction Latency Is the VLA Metric That Actually Matters

Work on async inference for VLA (Vision-Language-Action) models has focused on trajectory smoothness. FASTER points to a neglected dimension: how fast the model reacts after the environment changes. The paper delivers a clean mathematical result. Under action chunking, reaction time follows a uniform distribution determined by the first action's inference latency and the number of steps executed per chunk. This formula directly guides deployment parameter selection.
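One plausible reading of that result, sketched as a small simulation. The model here is an assumption, not the paper's derivation: an environment change lands at a uniformly random moment inside an execution window of `exec_steps * control_dt` seconds, the robot cannot react until the next inference starts, and the first new action then takes `first_action_latency` seconds to arrive. Under those assumptions reaction time is uniform on [latency, latency + window]. The paper's exact bounds may differ, and all parameter names and values are hypothetical.

```python
import random

def reaction_time_bounds(first_action_latency, exec_steps, control_dt):
    """Closed-form support of the reaction-time distribution under the assumed model."""
    lo = first_action_latency
    hi = first_action_latency + exec_steps * control_dt
    return lo, hi

def simulate_reaction_times(first_action_latency, exec_steps, control_dt, n=20_000, seed=0):
    rng = random.Random(seed)
    window = exec_steps * control_dt
    samples = []
    for _ in range(n):
        event_time = rng.uniform(0.0, window)  # change lands anywhere in the window
        wait = window - event_time             # time until the next inference starts
        samples.append(wait + first_action_latency)
    return samples

# Hypothetical deployment: 80 ms to the first action, 10 executed steps at 50 Hz.
lo, hi = reaction_time_bounds(0.08, 10, 0.02)
samples = simulate_reaction_times(0.08, 10, 0.02)
mean = sum(samples) / len(samples)
print(f"bounds=({lo:.3f}, {hi:.3f}) s, simulated mean={mean:.3f} s")
```

Under this model the expected reaction time is the latency plus half the execution window, so both a shorter first-action latency and fewer executed steps per chunk reduce it directly.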

Building on this analysis, FASTER proposes Horizon-Aware Scheduling. Flow-based VLAs prioritize converging near-term actions during sampling, compressing reactive denoising by roughly 10x (validated on pi-0.5 and X-VLA) while preserving long-horizon trajectory quality. Real-robot experiments, including a high-dynamics ping-pong task, show significantly reduced effective reaction latency on consumer GPUs.

Key takeaways:

- Reaction time under action chunking has an explicit formula. It directly guides chunk size and inference step selection.

- Prioritizing near-term action convergence compresses reactive denoising by roughly 10x.

- For VLA real-time deployment, reaction latency deserves more optimization attention than trajectory smoothness.

Source: FASTER: Rethinking Real-Time Flow VLAs

05 Agent Self-Built Skills Beat Injected Ones. But Check the Baselines.

Instead of hand-designing skills and injecting them, let agents build and iterate their own through task execution. That's Memento-Skills. Structured markdown files serve as evolvable skill memory, continuously self-restructured through read-write reflection loops within an RL framework. No LLM parameter updates at any point.

The direction is sound. The numbers need context. A 116.2% "relative improvement" on Humanity's Last Exam comes from more than doubling an extremely low baseline; absolute accuracy remains low. The 26.2% gain on GAIA is more informative, but needs comparison against current SOTA absolute levels. The harder question is scale: will routing efficiency degrade as the skill library grows? How do you handle inter-skill conflicts? These only surface in real-world deployment, not benchmarks.
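The arithmetic behind that caution is easy to check. The accuracies below are hypothetical, chosen only to reproduce the two relative gains quoted above; they are not the paper's reported baselines.

```python
def describe_gain(baseline_acc, improved_acc):
    """Return (absolute gain in points, relative gain in percent)."""
    absolute = improved_acc - baseline_acc
    relative = absolute / baseline_acc * 100.0
    return absolute, relative

# Hypothetical accuracies (percent), NOT taken from the paper.
low_abs, low_rel = describe_gain(5.0, 10.81)    # very low baseline
high_abs, high_rel = describe_gain(50.0, 63.1)  # moderate baseline

print(f"low baseline:  +{low_abs:.2f} points, {low_rel:.1f}% relative")
print(f"high baseline: +{high_abs:.2f} points, {high_rel:.1f}% relative")
```

In this made-up example the 116.2% relative gain corresponds to fewer absolute points than the 26.2% one, which is why the absolute numbers need to be read alongside the percentage.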

Key takeaways:

- Agent-constructed evolvable skills fit the continuous learning paradigm better than externally designed injection.

- Percentage improvements need baseline context. A 116% relative gain can mean single-digit absolute accuracy.

- Skill library scaling, routing efficiency, and conflict management are the real deployment challenges.

Today's Observation

Video generation models harbor unused 3D priors. The barrier to 768-D discrete generation was the transformation method, not the dimensionality itself. What these findings share matters more than the technical details: in many AI systems, the missing piece isn't the capability. It's the right way to extract it.

This has direct implications for technical decisions. When facing a capability gap, the default move is more data, more modules, more training stages. But if the capability is already encoded in intermediate representations of existing components, that incremental investment is redundant. The more efficient path: audit first. Use probe tasks to check whether your existing models already encode the information you need. If they do, the engineering focus shifts from "acquiring capability" to "extracting capability." Concrete action: before greenlighting a new capability project, spend a day running linear probes on your existing models' intermediate layers. That day might deliver more than a month of training a new module from scratch.
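A linear-probe audit can be prototyped in a few lines. The sketch below uses synthetic activations in place of real intermediate-layer features, and a plain gradient-descent least-squares fit in place of a library solver; the data, dimensions, and function names are all hypothetical. It probes for one property that is linearly encoded in the activations and one that is not.

```python
import random

def fit_linear_probe(features, targets, lr=0.1, epochs=300):
    """Fit weights w minimizing mean squared error of (features . w) vs targets."""
    dim = len(features[0])
    n = len(features)
    w = [0.0] * dim
    for _ in range(epochs):
        grad = [0.0] * dim
        for x, y in zip(features, targets):
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j in range(dim):
                grad[j] += err * x[j] / n
        w = [wi - lr * g for wi, g in zip(w, grad)]
    return w

def r_squared(w, features, targets):
    """Held-out coefficient of determination for a fitted probe."""
    preds = [sum(wi * xi for wi, xi in zip(w, x)) for x in features]
    mean_y = sum(targets) / len(targets)
    ss_res = sum((p - y) ** 2 for p, y in zip(preds, targets))
    ss_tot = sum((y - mean_y) ** 2 for y in targets)
    return 1.0 - ss_res / ss_tot

rng = random.Random(0)
DIM = 8
# Synthetic stand-in for intermediate-layer activations (hypothetical data).
acts = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(400)]
# A property that IS linearly encoded in the activations, plus small noise.
true_w = [1.0, -2.0, 0.5, 0.0, 0.0, 0.0, 0.0, 0.0]
encoded = [sum(a * b for a, b in zip(true_w, x)) + rng.gauss(0, 0.1) for x in acts]
# A property that is NOT encoded: independent of the activations.
unrelated = [rng.gauss(0, 1) for _ in acts]

train, test = slice(0, 300), slice(300, 400)
r2_encoded = r_squared(fit_linear_probe(acts[train], encoded[train]), acts[test], encoded[test])
r2_unrelated = r_squared(fit_linear_probe(acts[train], unrelated[train]), acts[test], unrelated[test])
print(f"encoded property R^2:   {r2_encoded:.3f}")
print(f"unrelated property R^2: {r2_unrelated:.3f}")
```

A high held-out R² suggests the information is already linearly readable from that layer; a value near zero suggests the capability has to come from somewhere else. With real models, the activations would be extracted layer by layer and the same comparison repeated for each.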