Today's Overview

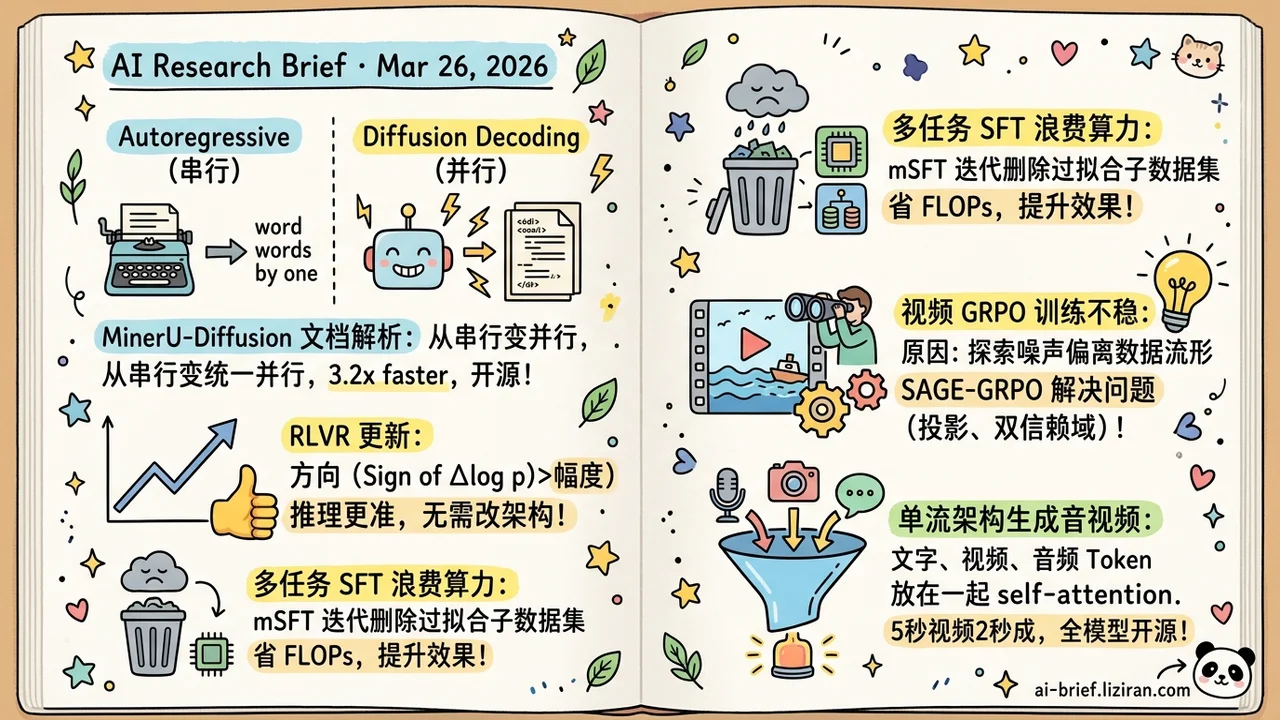

- Diffusion Decoding Replaces Autoregressive OCR, Going From Serial to Parallel. MinerU-Diffusion reframes document parsing as inverse rendering, using block-wise diffusion to generate structured source in parallel. 3.2x faster decoding, open-source.

- RLVR Update Direction Matters More Than Magnitude. The sign of token-level Δlog p pinpoints sparse, reasoning-critical updates more precisely than magnitude metrics. Two resulting methods need no architecture changes.

- Multi-Task SFT Wastes Compute You Don't See. Sub-datasets overfit at wildly different rates. mSFT iteratively drops the earliest overfitters, cutting FLOPs and improving results under low budgets.

- Video GRPO Instability Traced to Off-Manifold Exploration Noise. The ODE-to-SDE switch pushes sampling trajectories off the pretrained data manifold. SAGE-GRPO fixes this with manifold-projected exploration and dual trust regions, validated on HunyuanVideo 1.5.

- Joint Audio-Video Generation Doesn't Need Multi-Stream Architecture. Text, video, and audio tokens in a single sequence with plain self-attention. 5-second video in 2 seconds on one H100, full model stack open-sourced.

Featured

01 Multimodal What if OCR Isn't Reading, but Reverse-Rendering?

A rendering engine turns Markdown into a laid-out PDF. MinerU-Diffusion inverts the process: given the rendered result, a diffusion model recovers structured source code in parallel. Once you accept this inverse-rendering frame, left-to-right autoregressive decoding stops being a requirement and starts looking like historical baggage from sequential formats.

The method replaces token-by-token generation with a block-wise diffusion decoder, stabilized by uncertainty-driven curriculum learning for long sequences. Decoding runs 3.2x faster than autoregressive baselines, with consistent reliability gains. The team also designed a Semantic Shuffle benchmark testing whether the model actually reads document layout rather than relying on language priors. The diffusion approach wins clearly on that test.
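To make the serial-versus-parallel contrast concrete, here is a toy sketch of block-wise parallel decoding. It is not MinerU-Diffusion's decoder: `toy_denoiser` is a hypothetical stand-in that reads its predictions from the target and assigns random confidences, and the `block_size` and `commits_per_step` schedule is an assumption made for illustration. The only point is that several positions in a block are committed per model call, instead of one token per call.

```python
import random

MASK = None  # placeholder for a position that has not been decoded yet


def toy_denoiser(tokens, target, rng):
    """Hypothetical stand-in for a diffusion decoder.

    Returns {position: (predicted_token, confidence)} for every masked
    position. The prediction is read from `target` and the confidence is
    random; a real model would condition on the page image instead.
    """
    return {
        i: (target[i], rng.random())
        for i, tok in enumerate(tokens)
        if tok is MASK
    }


def blockwise_decode(target, block_size=4, commits_per_step=2, seed=0):
    """Decode block by block, committing several tokens per model call."""
    rng = random.Random(seed)
    tokens = [MASK] * len(target)
    model_calls = 0
    for start in range(0, len(target), block_size):
        end = min(start + block_size, len(target))
        while any(tokens[i] is MASK for i in range(start, end)):
            preds = toy_denoiser(tokens, target, rng)
            model_calls += 1
            # Keep predictions inside the active block, most confident first.
            in_block = sorted(
                ((conf, i, tok) for i, (tok, conf) in preds.items()
                 if start <= i < end),
                reverse=True,
            )
            for _, i, tok in in_block[:commits_per_step]:
                tokens[i] = tok
    return tokens, model_calls


source = ["#", "Title", "|", "a", "|", "b", "|", "\n"]
decoded, calls = blockwise_decode(source)
```

With eight tokens, two blocks, and two commits per step, the toy needs four model calls where a token-by-token decoder would need eight. The ratio here is an artifact of the chosen schedule and says nothing about the paper's measured speedup.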

The paper has 110 Hugging Face upvotes and open-source code, and that reception suggests the approach is more than theoretically elegant. Teams running document processing pipelines can evaluate it now.

Key takeaways:

- Inverse rendering reframes document OCR from serial decoding to parallel diffusion: an architecture-level shift.
- 3.2x decoding speedup has direct value for long-document production pipelines.
- Open-source and ready to evaluate as an autoregressive replacement for document parsing.

Source: MinerU-Diffusion: Rethinking Document OCR as Inverse Rendering via Diffusion Decoding

02 Reasoning RLVR Updates: Direction Over Magnitude

The sign of token-level log-probability changes may be a better lens for understanding RLVR (reinforcement learning from verifiable rewards). Through statistical analysis and token replacement experiments, this work shows that the direction of Δlog p locates sparse, reasoning-critical updates more precisely than magnitude metrics like divergence or entropy.

Two practical methods follow. At inference time, extrapolating along the Δlog p direction improves accuracy. At training time, upweighting low-probability tokens accelerates learning. Neither requires architecture changes, keeping the adoption barrier low.
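A minimal sketch of both ideas on a toy three-token vocabulary. The specific forms are assumptions, not the paper's: Δlog p is taken as the RL model's log-probability minus the base model's, extrapolation shifts the RL log-probabilities further along that difference by a hypothetical strength `alpha` and renormalizes, and the training weight `(1 - p) ** gamma` is one plausible way to upweight low-probability tokens.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over a list of floats."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def delta_log_p(logp_base, logp_rl):
    """Per-token change in log-probability from base to RL-tuned model."""
    return [r - b for b, r in zip(logp_base, logp_rl)]


def extrapolate(logp_base, logp_rl, alpha=0.5):
    """Push the RL distribution further along the base -> RL direction."""
    delta = delta_log_p(logp_base, logp_rl)
    shifted = [r + alpha * d for r, d in zip(logp_rl, delta)]
    return log_softmax(shifted)  # renormalize so probabilities sum to 1


def low_prob_weight(logp_token, gamma=1.0):
    """Hypothetical training weight that grows as token probability shrinks."""
    return (1.0 - math.exp(logp_token)) ** gamma


# Toy next-token distributions over a 3-token vocabulary.
base = log_softmax([2.0, 1.0, 0.0])
rl = log_softmax([2.0, 1.5, 0.0])  # RL raised token 1 relative to the others
signs = [1 if d > 0 else -1 for d in delta_log_p(base, rl)]
boosted = extrapolate(base, rl)
```

In this toy, only token 1 has a positive sign, and extrapolation raises its probability further while the other two shrink. Whether that helps on a real task depends on the direction being reasoning-critical, which is the paper's empirical claim rather than something this sketch demonstrates.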

The intuition is clean and the validation solid. Generalization across more model scales and task types still needs confirmation.

Key takeaways:

- Δlog p direction outperforms magnitude metrics for locating reasoning-critical sparse updates in RLVR.
- Inference extrapolation and training reweighting apply without architecture changes.
- Teams tuning RLVR should add direction analysis to their diagnostic toolkit.

Source: On the Direction of RLVR Updates for LLM Reasoning: Identification and Exploitation

03 Training Multi-Task SFT's Hidden Waste

Fine-tune multiple tasks together and their sub-datasets learn at wildly different speeds. Some overfit by epoch 3; others are still converging at epoch 10. The training loop doesn't care: it allocates compute to all of them equally.

mSFT does the obvious thing: monitor each sub-dataset, drop the first one to overfit, roll back to that dataset's best checkpoint, and continue training the rest. Across 10 benchmarks and 6 base models, it consistently beats 4 baselines. Sensitivity to its single new hyperparameter is low.
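The drop-and-roll-back loop can be sketched with a simulated trainer. Everything here is an illustrative assumption: the sub-dataset names, the U-shaped toy validation loss, and the overfitting test (a single evaluation that fails to improve on the best loss so far). The `state` dict of per-dataset epoch counts stands in for model weights, so "rolling back" means restoring an earlier copy of it.

```python
def val_loss(dataset, epochs_seen, optimum):
    """Toy validation loss, U-shaped in epochs trained on `dataset`."""
    return (epochs_seen - optimum[dataset]) ** 2


def msft_schedule(optimum, max_epochs=20):
    """Iteratively drop the first sub-dataset to overfit and roll back.

    `optimum` maps each hypothetical sub-dataset to the epoch count at
    which its toy validation loss bottoms out.
    """
    active = set(optimum)
    state = {d: 0 for d in optimum}  # stands in for model weights
    best = {d: (val_loss(d, 0, optimum), dict(state)) for d in active}
    dropped = []  # (dataset, epochs trained at its restored checkpoint)
    for _ in range(max_epochs):
        if not active:
            break
        for d in active:  # one epoch over the remaining mixture
            state[d] += 1
        overfit = None
        for d in sorted(active):
            loss = val_loss(d, state[d], optimum)
            if loss < best[d][0]:
                best[d] = (loss, dict(state))  # new best checkpoint for d
            elif overfit is None:
                overfit = d  # first dataset whose loss stopped improving
        if overfit is not None:
            state = dict(best[overfit][1])  # roll back to its best checkpoint
            dropped.append((overfit, state[overfit]))
            active.remove(overfit)
            # Earlier "best" records for the rest may postdate the restored
            # checkpoint, so re-baseline them at the rolled-back state.
            best = {d: (val_loss(d, state[d], optimum), dict(state))
                    for d in active}
    return dropped, state


dropped, final_state = msft_schedule({"code": 3, "math": 6, "chat": 10})
```

In this simulation each sub-dataset ends at its own optimum (3, 6, and 10 epochs) rather than at one shared stopping point, which is the effect the method is after. A real run would evaluate on held-out data and reload actual checkpoints.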

The more useful finding: under low compute budgets, mSFT improves results while reducing FLOPs. For resource-constrained teams, that matters more than absolute performance numbers.

Key takeaways:

- Heterogeneous overfitting across sub-datasets is a widely overlooked efficiency loss in multi-task SFT.
- mSFT iteratively drops overfitting sub-datasets to rebalance the mixture. Simple, but stable across models.
- Simultaneous quality gains and FLOPs reduction under low budgets make this especially relevant for constrained settings.

Source: mSFT: Addressing Dataset Mixtures Overfitting Heterogeneously in Multi-task SFT

04 Video Gen Why Video GRPO Keeps Crashing: Off-Manifold Noise

Training instability with FlowGRPO for video generation is nearly universal. SAGE-GRPO identifies a specific structural cause: converting ODE (deterministic sampling) to SDE (stochastic sampling) during exploration injects noise that pushes trajectories off the data manifold defined by the pretrained model. Rollout quality collapses, reward estimates become unreliable, and training diverges.

The fix constrains exploration to stay on-manifold. At the micro level, log-curvature correction derives a manifold-aware SDE. At the macro level, dual trust regions prevent excessive policy drift. Experiments on HunyuanVideo 1.5 show consistent improvements in video quality, text alignment, and visual metrics over prior methods.

Key takeaways:

- Video GRPO instability stems from exploration noise pushing samples off the pretrained data manifold, making reward estimates unreliable.
- Manifold-projected exploration plus dual trust regions fix this cleanly.
- Validated on HunyuanVideo 1.5; generalization to other video architectures still needs testing.

Source: Manifold-Aware Exploration for Reinforcement Learning in Video Generation

05 Architecture Single-Stream AV Generation Beats Multi-Stream

The trend in joint audio-video generation has been stacking specialized modules: multi-stream encoders, cross-attention alignment layers, modality-specific training strategies. daVinci-MagiHuman strips all of that away. Text, video, and audio tokens go into one sequence with standard self-attention.

The engineering payoff is immediate: no multi-stream synchronization logic to maintain. Inference optimizations (distillation, latent super-resolution, Turbo VAE) apply directly to a standard Transformer. One H100 generates a 5-second video in 2 seconds. Quality holds up: 80% human eval win rate against Ovi 1.1, speech WER at 14.6% (lowest among open-source models).

The full stack is open-sourced: base model, distilled variant, super-resolution model, and inference code.

Key takeaways:

- Single token sequence for text, video, and audio eliminates cross-attention and multi-stream alignment overhead.
- 2-second generation for 5-second video on one H100; distillation and Turbo VAE land easily on standard Transformer architecture.
- Full model stack open-sourced including base, distilled, and super-resolution models.

Source: Speed by Simplicity: A Single-Stream Architecture for Fast Audio-Video Generative Foundation Model

Today's Observation

Today's two RL papers look unrelated. One analyzes token-level update mechanics in RLVR for language reasoning. The other diagnoses training collapse in GRPO for video generation. Their findings point to the same conclusion: we don't understand how RL signals propagate inside generative models well enough, and that gap blocks RL recipes from transferring across modalities.

The RLVR direction analysis shows that the sign of token-level updates explains reasoning gains better than magnitude. The prior frame, "how much did the output distribution change," may have been measuring the wrong thing. The manifold-aware video RL work shows GRPO collapses on video because exploration noise pushes samples off the pretrained manifold. Stochastic exploration that works in language fails when it is injected as SDE noise into continuous flow-matching models.

The signal is clear: RL for generation is still in its diagnostic phase. Recipes validated on language and images cannot be assumed to transfer to video, audio, or other modalities. Each modality's generative model has different internal structure (autoregressive vs. flow matching vs. diffusion), and RL signals propagate differently through each. Diagnose first, then design.

If your team is porting GRPO or RLVR to a new setting, invest in a diagnostic round first. Run small-scale training. Monitor update direction and magnitude distributions at the token or timestep level. Confirm RL signals reach the positions that matter before committing to a recipe tuned for language models.
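One way such a diagnostic round could start is a per-position summary of how log-probabilities moved between two checkpoints. This is a sketch under assumptions: the function name, the three statistics, and the `top_frac` cutoff are illustrative choices, and the inputs are taken to be log-probabilities of the same sampled tokens (or timesteps) before and after an update.

```python
def update_diagnostics(logp_before, logp_after, top_frac=0.1):
    """Summarize direction and sparsity of per-position log-prob changes."""
    if not logp_before or len(logp_before) != len(logp_after):
        raise ValueError("inputs must be non-empty and of equal length")
    deltas = [a - b for b, a in zip(logp_before, logp_after)]
    n = len(deltas)
    magnitudes = sorted((abs(d) for d in deltas), reverse=True)
    k = max(1, int(n * top_frac))  # number of positions in the top slice
    total = sum(magnitudes)
    return {
        # Share of positions whose probability went up.
        "frac_positive": sum(d > 0 for d in deltas) / n,
        # Average size of the change, ignoring direction.
        "mean_abs": total / n,
        # Share of total change carried by the top positions (sparsity).
        "top_share": sum(magnitudes[:k]) / total if total > 0 else 0.0,
    }


before = [-1.0] * 10
after = [-1.0] * 8 + [-0.2, -1.5]  # only two positions moved
report = update_diagnostics(before, after)
```

A high `top_share` alongside a small `mean_abs` would indicate that the update is concentrated in a few positions; the next step is checking whether those positions are the ones that matter for the task.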