Today's Overview

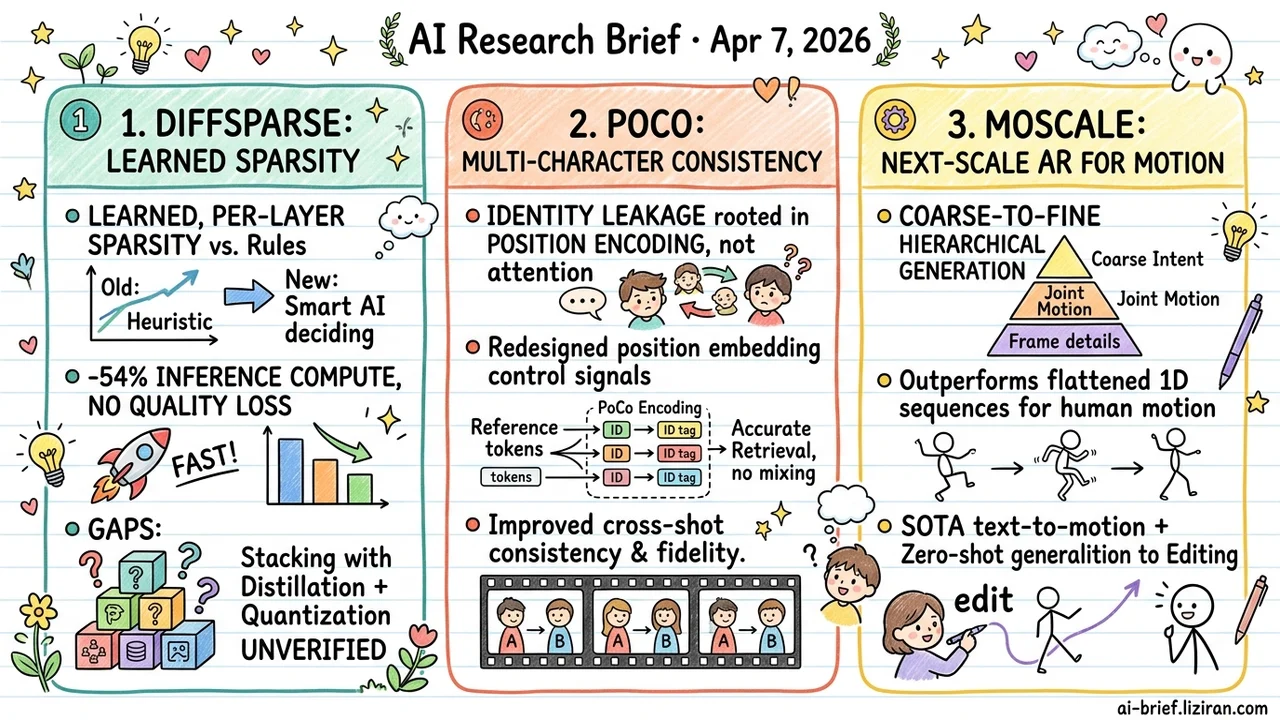

- Learned sparsity cuts diffusion transformer inference compute by 54% (PixArt-α, 20-step sampling) with no quality loss. DiffSparse trains a lightweight predictor to decide per-layer, per-step token sparsity rates. Whether it stacks with distillation and quantization remains unverified.

- Multi-character video identity leakage traces back to position encoding, not attention. PoCo redesigns control signals at the position embedding level, improving cross-shot consistency and reference fidelity. Sora2 is attacking the same problem.

- Next-scale autoregressive (AR) generation extends from images to motion. Coarse-to-fine hierarchical generation outperforms flattened 1D sequences. MoScale, accepted at CVPR, hits SOTA on text-to-motion and generalizes zero-shot to editing tasks.

Featured

01 Efficiency Learned Sparsity for Diffusion Inference

Token caching dominates diffusion transformer acceleration today, but existing methods assign sparsity with hand-designed rules. How many tokens each layer skips is fixed in advance, regardless of how strongly different denoising steps and layers actually depend on those tokens. DiffSparse takes a different route: it trains a lightweight predictor that decides, at every denoising step and every layer, which tokens can be safely dropped. Sparsity becomes an end-to-end learned decision instead of a heuristic.
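
To make the token-caching idea concrete, here is a minimal sketch of one cached layer call. It assumes a token-wise layer (such as an MLP; attention mixes tokens and would need more care) and takes the sparsity rate as an input, standing in for whatever the learned predictor outputs. The drift-based score and the name `cached_layer_forward` are hypothetical illustrations, not DiffSparse's actual selection rule.

```python
import numpy as np


def cached_layer_forward(layer_fn, x, cache_in, cache_out, sparsity):
    """Recompute only the most-changed tokens; reuse cached outputs for the rest.

    x, cache_in, cache_out: (N, D) arrays; cache_* come from the previous denoising step.
    sparsity: fraction of tokens to skip (hypothetically supplied by a learned predictor).
    The caller should store `out` as the next cache_out and refresh cache_in at `idx`.
    """
    n_tokens = x.shape[0]
    n_keep = max(1, int(round((1.0 - sparsity) * n_tokens)))
    # Hypothetical importance score: how far each token's input drifted since it was cached.
    drift = np.linalg.norm(x - cache_in, axis=1)
    idx = np.argsort(-drift, kind="stable")[:n_keep]
    out = cache_out.copy()
    # Token-wise layer, so running it on a subset of rows is equivalent to the full pass for them.
    out[idx] = layer_fn(x[idx])
    return out, idx
```

With `sparsity=0.5`, only half the tokens pass through `layer_fn`; the compute saved scales with the skipped fraction, which is exactly the quantity a learned policy would tune per layer and per step.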

The method jointly optimizes per-layer sparsity rates through a differentiable framework, then uses a dynamic programming solver to allocate a global compute budget. A two-stage training strategy removes the need, present in some prior methods, to keep full forward passes at certain steps. On PixArt-α with 20-step sampling, DiffSparse cuts compute by 54% while generation quality metrics actually improve over the unmodified model. The efficiency gains hold consistently on FLUX and Wan2.1 as well.
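
The summary does not describe the dynamic programming solver. One standard way to pose budget allocation is a multiple-choice knapsack: each unit (a layer, or a layer at a given step) picks exactly one candidate sparsity rate, each with an integer compute cost and an estimated quality loss, and the solver minimizes total loss under the budget. The sketch below assumes that formulation; the costs and losses are hypothetical inputs, not values from the paper.

```python
def allocate_sparsity(options, budget):
    """Multiple-choice knapsack by dynamic programming.

    options[l] is a list of (cost, loss) pairs, one per candidate sparsity rate for unit l.
    Picks exactly one option per unit, minimizing total loss with total cost <= budget.
    Returns (total_loss, choice_indices), or None if no assignment fits the budget.
    """
    inf = float("inf")
    best = [inf] * (budget + 1)  # best[b]: min loss so far with total cost exactly b
    best[0] = 0.0
    history = []  # per unit: (chosen option index, previous cost) for each reachable cost
    for unit_opts in options:
        new = [inf] * (budget + 1)
        choice = [-1] * (budget + 1)
        prev = [-1] * (budget + 1)
        for b in range(budget + 1):
            if best[b] == inf:
                continue
            for i, (cost, loss) in enumerate(unit_opts):
                nb = b + cost
                if nb <= budget and best[b] + loss < new[nb]:
                    new[nb], choice[nb], prev[nb] = best[b] + loss, i, b
        history.append((choice, prev))
        best = new
    end = min(range(budget + 1), key=lambda b: best[b])
    if best[end] == inf:
        return None
    # Walk back through the table to recover which option each unit chose.
    picks, b = [], end
    for choice, prev in reversed(history):
        picks.append(choice[b])
        b = prev[b]
    picks.reverse()
    return best[end], picks
```

For example, with two units whose options are `[(4, 0.0), (2, 0.1), (1, 0.5)]` and `[(4, 0.0), (2, 0.3), (1, 0.4)]` and a budget of 6, the solver spends the budget on the second unit (where sparsity hurts more) and sparsifies the first, returning a total loss of 0.1 with choices `[1, 0]`.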

Whether this learned sparsity stacks with step distillation and quantization, the other two acceleration levers teams already use, is not addressed. That is the gap to watch before production deployment.
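
A quick back-of-the-envelope calculation shows why the stacking question matters. Assuming inference is compute-bound and the levers compose multiplicatively (an assumption the source does not test), a 54% cut alone is roughly a 2.2x speedup, and pairing it with a hypothetical 4-step distilled sampler would in the ideal case approach 11x. The real product is plausibly lower: token caching exploits similarity between adjacent denoising steps, and a sampler with fewer, larger steps likely leaves less of that redundancy.

```python
def remaining_fraction(*fractions):
    """Idealized fraction of baseline compute left if levers compose multiplicatively.

    Multiplicative composition is an assumption; no stacking results are reported.
    """
    total = 1.0
    for f in fractions:
        total *= f
    return total


def ideal_speedup(*fractions):
    """Compute-bound speedup implied by the remaining fraction (ignores memory and overheads)."""
    return 1.0 / remaining_fraction(*fractions)


# DiffSparse alone: 54% of compute removed, so 46% remains.
sparsity_only = ideal_speedup(0.46)
# Hypothetical: a 4-step distilled sampler replacing 20 steps (0.2 of the steps) plus the same sparsity.
stacked_upper_bound = ideal_speedup(0.2, 0.46)
```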

Key takeaways:
- Sparsity allocation upgrades from hand-crafted rules to learned per-layer, per-step decisions, adapting to actual token dependencies
- Cutting roughly half the compute while quality metrics improve points to substantial redundant token computation in the baseline models
- Stacking with distillation and quantization is the open question for real deployment

Source: DiffSparse: Accelerating Diffusion Transformers with Learned Token Sparsity

02 Video Gen Identity Confusion Starts at Position Encoding

Multi-shot video generation has a persistent problem: when multiple similar-looking characters appear, models swap identities, grafting A's motion onto B. The common fix targets the attention mechanism. PoCo's team found that the root cause sits deeper: reference image tokens for different characters share the same positional encoding space. Semantically similar tokens then interfere during retrieval, so the model cannot tell who is who from the start.

Their fix lets the position encoding carry additional context control: auxiliary information attached to each token enables precise reference matching while preserving implicit modeling of semantic consistency. Cross-shot consistency and reference fidelity both improve. Sora2 is pursuing the same direction, suggesting this bottleneck is a real barrier to production video generation.
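
The collision is easy to see in a toy setup. The sketch below assumes a multi-axis sinusoidal position embedding over `(t, h, w, ref)` and places every reference image at the same slot; tokens at the same spatial location in two different references then get identical position embeddings, leaving only content (which is similar for look-alike characters) to tell them apart. The `tag_reference` option, which writes the reference index into an extra axis, is a hypothetical illustration of "position encoding carrying extra context", not PoCo's actual design.

```python
import numpy as np


def sinusoidal(pos, dim):
    """Standard sinusoidal embedding of a scalar position into `dim` channels (dim even)."""
    freqs = 1.0 / (10000 ** (np.arange(0, dim, 2) / dim))
    angles = pos * freqs
    return np.concatenate([np.sin(angles), np.cos(angles)])


def position_embedding(pos_ids, dim_per_axis=8):
    """Embed a multi-axis position id by concatenating one sinusoidal embedding per axis."""
    return np.concatenate([sinusoidal(p, dim_per_axis) for p in pos_ids])


def reference_position_ids(ref_index, h, w, tag_reference):
    """Position id (t, h, w, ref) for a token at (h, w) in reference image `ref_index`.

    Shared scheme: all references sit at t = 0 with ref axis 0, so their ids collide.
    Tagged scheme (hypothetical): the ref axis records which character the token belongs to.
    """
    ref_axis = ref_index + 1 if tag_reference else 0
    return (0, h, w, ref_axis)
```

Under the shared scheme, `position_embedding(reference_position_ids(0, 2, 3, False))` and the same call with reference index 1 produce identical vectors; with `tag_reference=True` they differ, so retrieval has a positional cue for which character a token came from.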

Key takeaways:
- Identity leakage originates in position encoding, not attention: multiple similar references sharing positional space cause token retrieval confusion
- Redesigning control signals at the position embedding level is an overlooked but effective angle
- Multi-character, multi-shot consistency is a core bottleneck for commercial video generation; CVPR acceptance signals the field is formally engaging with it

03 Architecture Motion Generation Shouldn't Be Flattened to 1D

Next-token prediction has underperformed on motion generation. The core issue: human motion has a natural temporal hierarchy. Global intent comes first, then joint-level movement. Flattening that into a 1D token sequence destroys the structure. MoScale borrows the next-scale paradigm already proven in image generation: it first produces a coarse semantic outline of the motion, then refines it scale by scale down to individual frames.
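
A coarse-to-fine representation can be sketched as a temporal residual pyramid, in the spirit of next-scale image models: each scale stores only what coarser scales have not yet explained, and an AR model would predict scale k conditioned on scales 0..k-1. This sketch uses continuous linear interpolation over a `(frames, features)` array and skips tokenization entirely; it is an assumed illustration, not MoScale's actual tokenizer.

```python
import numpy as np


def resize_time(x, length):
    """Linearly interpolate a (T, D) motion array to `length` frames."""
    t_src = np.linspace(0.0, 1.0, x.shape[0])
    t_dst = np.linspace(0.0, 1.0, length)
    return np.stack([np.interp(t_dst, t_src, x[:, d]) for d in range(x.shape[1])], axis=1)


def encode_scales(motion, scales):
    """Residual pyramid: each scale captures what the coarser scales missed.

    If the last scale equals the frame count, the pyramid reconstructs the motion exactly.
    """
    residuals = []
    approx = np.zeros_like(motion)
    for s in scales:
        r = resize_time(motion - approx, s)  # coarse summary of the remaining error
        residuals.append(r)
        approx = approx + resize_time(r, motion.shape[0])
    return residuals


def decode_scales(residuals, n_frames):
    """Coarse-to-fine reconstruction: upsample each scale's residual and accumulate."""
    out = np.zeros((n_frames, residuals[0].shape[1]))
    for r in residuals:
        out += resize_time(r, n_frames)
    return out
```

With `scales = [2, 4, 8, 16]` on a 16-frame clip, decoding only the first residual gives the global trajectory, and adding each finer scale restores more frame-level detail until the full pyramid reproduces the input.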

To compensate for limited paired text-motion data, the method adds cross-scale hierarchical correction and intra-scale bidirectional re-prediction. Accepted at CVPR, it achieves SOTA on text-to-motion and generalizes zero-shot to motion editing. What transfers is the hierarchical representation itself, not just generation quality.
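
The summary names intra-scale bidirectional re-prediction without describing it. One generic form of the idea is confidence-based re-masking, as in MaskGIT-style decoders: fill every token of a scale in parallel, re-mask the least confident ones, and predict them again with the whole scale visible as context. The sketch below shows that generic loop; `predict` is a hypothetical model interface (in a next-scale model it would also see the coarser scales), and this is not MoScale's actual procedure.

```python
from typing import Callable, List, Tuple

MASK = -1  # placeholder id for a not-yet-predicted token


def repredict_scale(
    predict: Callable[[List[int]], List[Tuple[int, float]]],
    n_tokens: int,
    rounds: int = 3,
    remask_frac: float = 0.5,
) -> List[int]:
    """Fill one scale in parallel, then repeatedly re-mask low-confidence tokens and re-predict.

    `predict` receives the whole partially masked scale and returns (token, confidence)
    per position, so each re-prediction can use context on both sides.
    """
    tokens = [MASK] * n_tokens
    conf = [0.0] * n_tokens
    for r in range(rounds):
        preds = predict(tokens)
        for i, (tok, c) in enumerate(preds):
            if tokens[i] == MASK:  # keep confident tokens from earlier rounds
                tokens[i], conf[i] = tok, c
        if r == rounds - 1:
            break
        n_remask = int(remask_frac * n_tokens)
        for i in sorted(range(n_tokens), key=lambda j: conf[j])[:n_remask]:
            tokens[i] = MASK
    return tokens
```

With a toy predictor that guesses poorly when it sees no context and correctly once any token is visible, a single round leaves every token wrong, while three rounds recover the correct sequence, illustrating why a second bidirectional pass can repair early mistakes.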

Beyond motion itself, the signal here is that next-scale AR is spreading from images to other sequential data types. Teams working on temporal generation tasks should track this paradigm migration.

Key takeaways:
- Coarse-to-fine hierarchical generation captures long-range motion structure better than flattened 1D sequences
- Next-scale AR extending from images to motion validates the paradigm's applicability to broader temporal data
- Zero-shot generalization to editing tasks shows the hierarchical representation has utility beyond generation alone

Source: Next-Scale Autoregressive Models for Text-to-Motion Generation