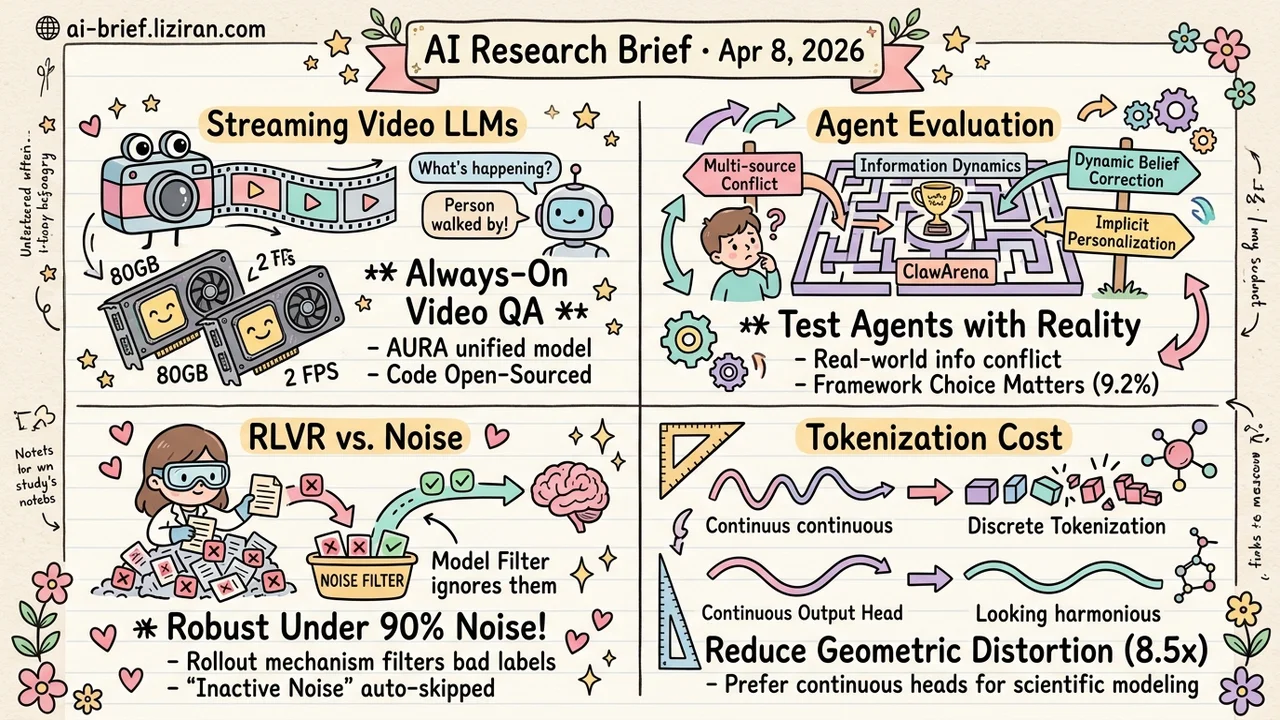

Today's Overview

- VideoLLM achieves 2 FPS streaming video QA. AURA unifies continuous perception and proactive response in one end-to-end architecture, with ASR+TTS integrated into a working interactive prototype.

- Agent belief maintenance gets its first systematic benchmark. ClawArena covers 64 scenarios with dynamic information updates and finds that the performance gap from framework design is roughly 60% as large as the gap between models.

- RLVR's rollout mechanism naturally filters noisy labels. A wrong label only affects training when the model independently reproduces the wrong answer. The proposed OLR method stays robust at a noise ratio of 0.9.

- Breaking the circular dependency in test-based code selection. ACES uses leave-one-out AUC on a pass/fail matrix to weight tests by ranking consistency. Zero extra model calls required.

Featured

01 Multimodal Video QA Goes From "Upload and Wait" to Always-On

Video surveillance, AR glasses, live-streaming interaction: these scenarios don't need "upload a clip and get an answer." They need a model watching continuously and speaking up when appropriate. AURA is an end-to-end streaming framework that lets VideoLLMs process live video, answer open-ended questions, and initiate responses proactively — all at once.

The core design challenge is twofold. First, video streams grow without bound, but context windows don't. AURA manages this through dedicated context strategies and data construction that maintain stable understanding over long streams without naive truncation or periodic memory resets. Second, the training objectives pull in opposite directions: continuous perception demands uninterrupted low-latency visual processing, while open-ended QA and proactive responses require high-quality text generation at specific moments. AURA's unified training framework balances both, preventing either capability from cannibalizing the other.

This contrasts with existing decoupled approaches that use separate modules to decide when to trigger responses. Pipeline architectures suffer from information bottlenecks in the trigger module during open-ended long-horizon interaction. The working demo runs at 2 FPS on two 80 GB GPUs with speech recognition and synthesis integrated. It achieves SOTA on streaming video understanding benchmarks, but the real significance is architectural: VideoLLMs moving from offline tools to always-on assistants.
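To make the context-window pressure concrete, here is a back-of-envelope sketch. Only the 2 FPS rate comes from the summary above. The tokens-per-frame and context-window figures are hypothetical placeholders, not numbers from the AURA paper.

```python
def seconds_until_full(context_tokens: int, tokens_per_frame: int, fps: float) -> float:
    """Seconds of video that fit in the context if every frame is kept."""
    return context_tokens / (tokens_per_frame * fps)


FPS = 2.0                 # frame rate of the demo, as reported above
TOKENS_PER_FRAME = 64     # ASSUMPTION: visual tokens per frame (hypothetical)
CONTEXT_TOKENS = 32_768   # ASSUMPTION: context window size (hypothetical)

budget_s = seconds_until_full(CONTEXT_TOKENS, TOKENS_PER_FRAME, FPS)
print(f"Context fills after {budget_s:.0f} s ({budget_s / 60:.1f} min)")
```

Under these assumed numbers the window fills in a few minutes, which is why an always-on model needs some context strategy beyond simply appending frames.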

Key takeaways:

- End-to-end streaming architecture unifies continuous perception and proactive response, eliminating the decoupled trigger-response pipeline

- 2 FPS with ASR+TTS integration shows this direction is ready for product prototyping

- Teams working on video surveillance, AR assistance, or live-streaming should track this framework

Source: AURA: Always-On Understanding and Real-Time Assistance via Video Streams

02 Agent What Your AI Assistant Said Yesterday Might Already Be Wrong

Anyone who has deployed a persistent agent knows this scenario: two data sources contradict each other, users reveal preferences through corrections rather than explicit statements, and yesterday's correct answer becomes obsolete. ClawArena turns these real deployment pain points into a benchmark: 64 scenarios across 8 professional domains, 1,879 evaluation turns including 365 dynamic updates, specifically testing whether agents maintain correct beliefs as information evolves.

Two practical findings stand out. First, both model capability (a 15.4% gap) and framework design (a 9.2% gap) significantly affect performance. Second, belief revision difficulty depends on the update's design strategy, not the mere fact of change: what determines difficulty is how the information changed, not that it changed. A self-evolving skill framework also partially closes the model capability gap, which is good news for teams with limited model selection budgets.

Key takeaways:

- First systematic benchmark for agent belief maintenance under dynamic information, covering multi-source conflict, belief revision, and implicit personalization

- The framework design gap is roughly 60% the size of the model capability gap, so choosing the right framework may beat upgrading to a larger model

- Belief revision difficulty is driven by update strategy, providing concrete optimization directions for agent system design
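The "roughly 60%" figure is the ratio of the two reported gaps, not a share of total performance. A quick check of that arithmetic, along with the fraction of evaluation turns that are dynamic updates, using only the numbers quoted above:

```python
# Figures as reported in the summary above.
model_gap = 15.4        # performance gap attributed to model capability (%)
framework_gap = 9.2     # performance gap attributed to framework design (%)
total_turns = 1879      # evaluation turns in the benchmark
dynamic_updates = 365   # turns that are dynamic updates

gap_ratio = framework_gap / model_gap
update_share = dynamic_updates / total_turns

print(f"framework gap / model gap   = {gap_ratio:.1%}")
print(f"dynamic updates / all turns = {update_share:.1%}")
```

The first ratio comes out just under 60%, and about one turn in five is a dynamic update.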

Source: ClawArena: Benchmarking AI Agents in Evolving Information Environments

03 Training RLVR Has Built-In Noise Immunity

RLVR (reinforcement learning with verifiable rewards) has a structural advantage over supervised learning that has gone largely unnoticed: a label only produces a training signal when the model independently reproduces the corresponding answer through rollout. Wrong labels must be "rediscovered" by the model to cause harm. This acts as a natural noise filter that supervised learning simply doesn't have.

This paper systematically analyzes the mechanism, splitting noisy labels into two categories. "Inactive" labels are wrong answers the model can't reproduce, so they only waste data. "Active" labels are wrong answers the model happens to land on, and these cause real damage. An interesting dynamic emerges during training: clean and noisy sample accuracy rise almost in sync early on, with divergence appearing only later. The proposed OLR method exploits this pattern and maintains robustness at noise ratios up to 0.9, with 3.6%–3.9% average improvement on in-distribution tasks.
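The active/inactive split can be illustrated with a toy sketch. This is not the paper's OLR method. It assumes a binary exact-match reward and mean-centered advantages within a rollout group (a common setup, chosen here for illustration), and the helper names are hypothetical.

```python
def rewards(rollout_answers, label):
    """Binary verifiable reward: 1.0 only when a rollout matches the label."""
    return [1.0 if a == label else 0.0 for a in rollout_answers]


def centered_advantages(rs):
    """ASSUMPTION: advantages are rewards minus the group mean."""
    mean = sum(rs) / len(rs)
    return [r - mean for r in rs]


def label_status(rollout_answers, label, true_answer):
    """Classify a label as clean, active noise, or inactive noise."""
    if label == true_answer:
        return "clean"
    # A wrong label is "active" only if some rollout reproduces it.
    return "active" if label in rollout_answers else "inactive"


rollouts = ["4", "4", "5", "4"]   # sampled answers; the true answer is "4"
for label in ("4", "7", "5"):
    adv = centered_advantages(rewards(rollouts, label))
    print(label, label_status(rollouts, label, "4"), adv)
```

With the wrong label "7", no rollout matches, every advantage is zero, and the example contributes no learning signal. With the wrong label "5", one rollout matches and gets a positive advantage, which is the harmful case.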

Key takeaways:

- RLVR's rollout condition naturally filters noisy labels, a structural advantage over supervised learning

- Noisy labels split into active vs. inactive categories; only errors the model can independently reproduce cause real harm

- For teams with limited annotation resources, RLVR tolerates label noise far better than expected

Source: Can LLMs Learn to Reason Robustly under Noisy Supervision?

04 Code Intelligence Breaking the Circular Dependency in LLM Test Selection

Using LLM-generated tests to filter LLM-generated code has an inescapable problem: the tests themselves might be wrong, and judging test correctness requires knowing which code is correct first. Classic circular dependency. ACES solves this cleanly by sidestepping correctness entirely. The shift: from counting test votes to ranking consistency.

Leave-one-out evaluation holds out one test, uses the remaining tests to rank code solutions, then checks whether the held-out test's pass/fail pattern aligns with that ranking. AUC quantifies this alignment and becomes the test weight. The closed-form variant (ACES-C) provably approaches the optimal solution under mild assumptions. The iterative variant (ACES-O) relaxes those assumptions. Both need only a binary pass/fail matrix. Computational overhead is negligible. They achieve best-in-class Pass@k across multiple code generation benchmarks.
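The leave-one-out step can be sketched in a few lines of plain Python. This is a simplified illustration rather than ACES-C or ACES-O themselves: it assumes codes are ranked by raw pass counts on the remaining tests and that the AUC is used directly as the test weight. The function names and the toy matrix are hypothetical.

```python
def auc(scores, labels):
    """Chance that a passing code outranks a failing one (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        return 0.5  # test passed by all or none: carries no ranking signal
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def loo_auc_weights(matrix):
    """matrix[i][j] = 1 if code i passes test j. Returns one weight per test."""
    weights = []
    for j in range(len(matrix[0])):
        # Score each code using every test except the held-out test j.
        loo_scores = [sum(row) - row[j] for row in matrix]
        held_out = [row[j] for row in matrix]
        weights.append(auc(loo_scores, held_out))
    return weights


def select_code(matrix):
    """Pick the code with the highest weighted pass total."""
    weights = loo_auc_weights(matrix)
    totals = [sum(w * p for w, p in zip(weights, row)) for row in matrix]
    best = max(range(len(matrix)), key=totals.__getitem__)
    return best, weights


# Toy data: tests 0-3 agree with each other, test 4 contradicts them.
matrix = [
    [1, 1, 1, 1, 0],
    [1, 1, 1, 0, 1],
    [1, 1, 0, 0, 1],
    [1, 0, 0, 0, 1],
    [0, 0, 0, 0, 1],
]
best, weights = select_code(matrix)
print(best, [round(w, 3) for w in weights])
```

In this toy matrix, codes 0 and 1 tie under plain vote counting with four passes each. The contradicting test gets a weight of zero, so the weighted totals break the tie in favor of code 0.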

Key takeaways:

- The circular dependency in test quality assessment breaks through ranking consistency rather than correctness judgments

- The method requires only a pass/fail matrix with no extra model calls, making integration cost minimal

- Teams building code generation pipelines can drop in ACES as a direct replacement for majority-vote filtering

Source: ACES: Who Tests the Tests? Leave-One-Out AUC Consistency for Code Generation

Today's Observation

Three papers from different subfields independently dismantle the same hidden assumption: "input signals are clean." Each replaces it with a different mechanism.

ClawArena writes contradiction into the evaluation itself. Those 365 dynamic updates aren't noise — they're by design. The benchmark deliberately manufactures stale information and multi-source conflict. It tests not whether an agent can get the right answer, but whether it can maintain reasonable beliefs when no answer stays right for long. A difficulty gradient over update strategies replaces the assumption of stable ground truth.

The RLVR noise study surfaces a mechanism that was always there but nobody had examined. Rollout requires the model to independently reproduce an answer before the label takes effect. Most wrong labels simply can't activate. This isn't filtering noise — it's noise failing to enter the training loop in the first place. Supervised learning lacks this gate, which explains the large performance gap under identical noise ratios.

ACES performs a clean problem transformation: don't ask "is this test correct?" — ask "does this test's ranking agree with the others?" Leave-one-out AUC converts a correctness judgment into a consistency measure, bypassing the "you need ground truth to evaluate your tools" loop.

The shared conclusion isn't "we need cleaner data." It's that system structure itself must handle dirty signals gracefully. If you're building a pipeline that depends on external signals — labeled data, test cases, or real-time information streams — ask whether your system collapses when those signals are wrong, or whether it has structural tolerance built in.