Today's Overview

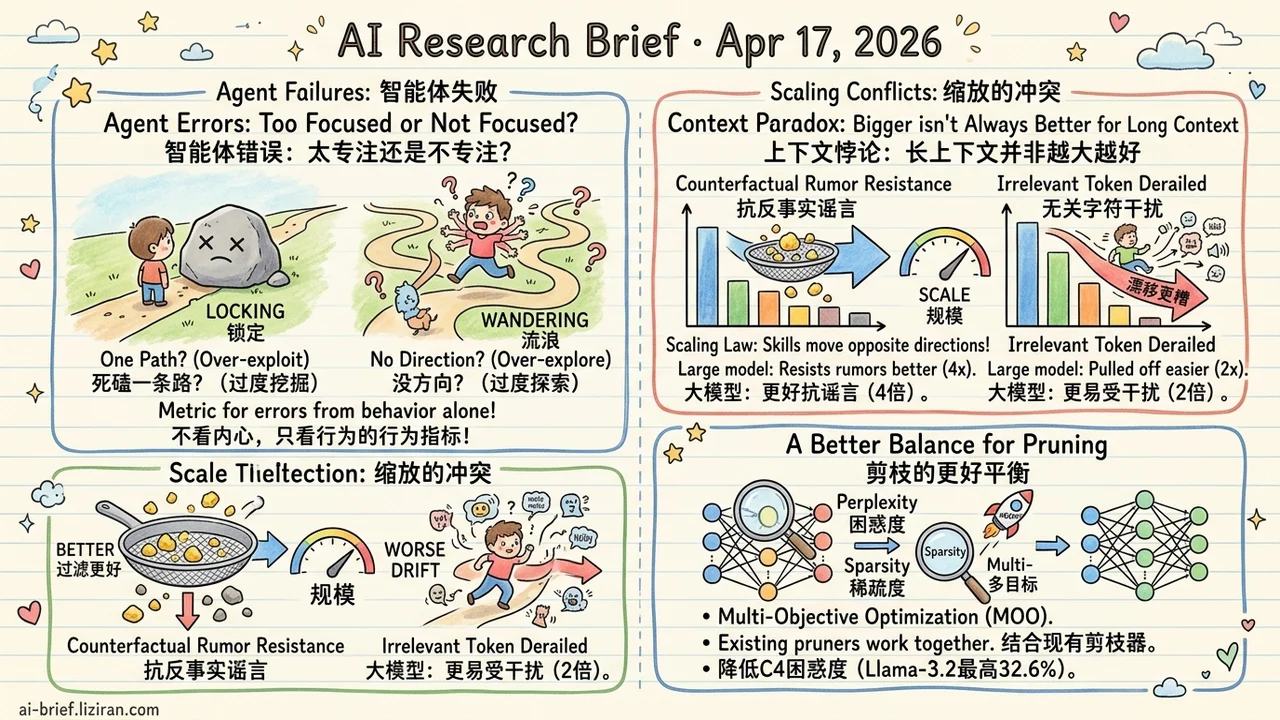

- Agent failures split into two measurable error modes: locking onto one path (over-exploit) and wandering without direction (over-explore). Black-box metrics can separate the two with no access to model internals, and frontier models differ clearly in failure profile.

- Scaling splits "reading context" into two subskills moving in opposite directions. Google gives the first scaling law for contextual entrainment across two model families: the largest model resists counterfactual rumors 4x better than the smallest, but gets derailed by irrelevant tokens 2x more often.

- Pruning for a single objective leaves the better solution on the table: Google's MOONSHOT reframes post-training one-shot pruning as multi-objective optimization and wraps existing pruners, cutting C4 perplexity by up to 32.6% on Llama-3.2 at 2:4 sparsity.

Featured

01 Splitting "My Agent Doesn't Work" Into Two Measurable Errors

When someone says "my agent doesn't work," the real question is usually left vague. Is it stuck grinding one dead path (over-exploit), or drifting without converging (over-explore)? Most teams can't say.

The authors build a controlled 2D grid environment with tasks expressed as DAGs, and tune map parameters to isolate either exploration or exploitation difficulty. The contribution is a policy-agnostic metric: you score each error type from behavior traces alone, without touching the model's internal policy. They run this on a batch of frontier models and find failure profiles differ sharply across vendors, with reasoning models generally more stable. Simple harness tweaks can improve both axes at once.
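As a rough illustration of what scoring from behavior traces alone can look like, the sketch below computes two proxy scores from nothing but the list of grid cells an agent visited. The function name `trace_error_proxies` and both proxies (`revisit_rate` for over-exploit, `drift_rate` for over-explore) are assumptions made for this example; they are not the paper's metric definitions.

```python
from typing import Dict, List, Set, Tuple

Cell = Tuple[int, int]


def trace_error_proxies(trace: List[Cell], goal_cells: Set[Cell]) -> Dict[str, float]:
    """Score one episode from its visited cells only (no model internals).

    revisit_rate: share of steps landing on an already-visited cell
                  (hypothetical proxy for over-exploit).
    drift_rate:   share of steps reaching a new cell that is not a goal
                  (hypothetical proxy for over-explore).
    """
    if not trace:
        return {"revisit_rate": 0.0, "drift_rate": 0.0}

    seen: Set[Cell] = set()
    revisits = 0
    drift = 0
    for cell in trace:
        if cell in seen:
            revisits += 1          # went back to known ground
        elif cell not in goal_cells:
            drift += 1             # new ground, but no task progress
        seen.add(cell)

    n = len(trace)
    return {"revisit_rate": revisits / n, "drift_rate": drift / n}


# Two toy traces: one oscillates between two cells, one never turns back.
looping = [(0, 0), (0, 1), (0, 0), (0, 1), (0, 0), (0, 1)]
wandering = [(0, 0), (1, 0), (2, 0), (3, 0), (4, 0), (5, 0)]
goal = {(9, 9)}

print(trace_error_proxies(looping, goal))
print(trace_error_proxies(wandering, goal))
```

The looping trace scores high on the revisit proxy and the wandering trace scores high on the drift proxy, which is the kind of separation a policy-agnostic metric aims for. A real metric would also need to account for task structure such as the DAG of subgoals.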

The caveat is obvious. The environment is synthetic, and in real tasks exploration and exploitation tangle together. Still, for anyone currently tuning an agent and wanting to make "doesn't work" mean something specific, the framework and open-source code are worth running against your own baseline.

Key takeaways:
- Explore errors and exploit errors can be measured separately with black-box metrics, no policy access needed.
- Reasoning models are generally more stable, and simple harness tweaks can improve both dimensions at once.
- The findings come from a synthetic environment, so extrapolate to real tasks with care.

Source: Exploration and Exploitation Errors Are Measurable for Language Model Agents

02 Bigger Models Resist Rumors Better, Resist Noise Worse

"Bigger model" usually reads as one kind of progress. Google's scaling law for contextual entrainment breaks that lump into two.

On Cerebras-GPT (111M–13B) and Pythia (410M–12B), the largest model resists counterfactual rumors 4x better than the smallest, while getting pulled off by irrelevant tokens 2x more often. Both curves are clean power laws, pointing in opposite directions. The authors read this as two functionally independent mechanisms that both strengthen with scale: semantic filtering, which helps the model reject rumors, and mechanical copying, which makes it echo tokens that merely appear in context. Their effects on robustness therefore diverge.
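A power law `y = a * N**b` is a straight line in log-log space, so the sign of the fitted slope `b` tells you which way a curve points. The sketch below fits that slope with ordinary least squares using only the standard library. The helper name `fit_power_law` and the input values are assumptions: the numbers are placeholders generated from exact power laws, not the paper's measurements.

```python
import math
from typing import Sequence, Tuple


def fit_power_law(sizes: Sequence[float], values: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of values ~ a * sizes**b in log-log space; returns (a, b)."""
    if len(sizes) != len(values) or len(sizes) < 2:
        raise ValueError("need at least two (size, value) pairs of equal length")

    xs = [math.log(s) for s in sizes]
    ys = [math.log(v) for v in values]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)

    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("sizes must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))

    b = sxy / sxx                        # slope in log-log space = exponent
    a = math.exp(mean_y - b * mean_x)    # intercept in log space = log(a)
    return a, b


# Placeholder inputs generated from exact power laws, NOT the paper's data.
sizes = [1e8, 1e9, 1e10]
rumor_rate = [0.4 * (n / 1e8) ** -0.3 for n in sizes]   # falls with scale
noise_rate = [0.1 * (n / 1e8) ** 0.15 for n in sizes]   # rises with scale

_, b_rumor = fit_power_law(sizes, rumor_rate)
_, b_noise = fit_power_law(sizes, noise_rate)
print(f"rumor exponent: {b_rumor:.3f}, noise exponent: {b_noise:.3f}")
```

Running the same fit on your own per-size error rates for each failure type is a quick way to check whether the two exponents have opposite signs on your models.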

"Reading context" isn't one capability. It's two, modulated by the same dial in opposite directions. For RAG and long-context setups, upgrading to a larger model may fix one hallucination class while opening another.

Key takeaways:
- Scaling doesn't monotonically make a model "understand" context better; it bifurcates two subskills.
- RAG teams shouldn't assume a bigger model is safer. Test rumor resistance and noise resistance separately.
- The pattern reproduces across two independent model families, so it is more likely a general effect of scale than one lab's training artifact.

Source: Better and Worse with Scale: How Contextual Entrainment Diverges with Model Size

03 Why Does Pruning Chase Only One Objective?

Post-training one-shot pruning is among the most cost-effective paths to deployment compression. Take a pretrained model, prune it, skip retraining. Existing methods almost all optimize a single objective: either layer-wise reconstruction error (preserve each layer's local outputs) or a second-order Taylor approximation (preserve training loss).
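To make the terms concrete: 2:4 sparsity means that in every contiguous group of four weights, at most two are nonzero, and layer-wise reconstruction error measures how far the pruned layer's outputs move from the dense layer's outputs on calibration inputs. The sketch below uses plain magnitude pruning as the mask rule, which is a simple baseline chosen for illustration, not what SparseGPT, Wanda, or MOONSHOT do. All function names and the toy weights are assumptions.

```python
from typing import List

Matrix = List[List[float]]


def prune_2_4(row: List[float]) -> List[float]:
    """Zero the two smallest-magnitude weights in each group of four."""
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    pruned = list(row)
    for start in range(0, len(row), 4):
        group = range(start, start + 4)
        # Indices of the two smallest |w| in this group get dropped.
        for i in sorted(group, key=lambda idx: abs(row[idx]))[:2]:
            pruned[i] = 0.0
    return pruned


def layer_output(weights: Matrix, x: List[float]) -> List[float]:
    """Linear layer without bias: one dot product per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def reconstruction_error(dense: Matrix, sparse: Matrix, inputs: Matrix) -> float:
    """Sum of squared output differences over all calibration inputs."""
    total = 0.0
    for x in inputs:
        y_dense = layer_output(dense, x)
        y_sparse = layer_output(sparse, x)
        total += sum((a - b) ** 2 for a, b in zip(y_dense, y_sparse))
    return total


# Toy 2x4 layer and two calibration inputs (made-up values).
dense = [[0.9, -0.1, 0.05, -0.7], [0.2, 0.3, -0.8, 0.01]]
sparse = [prune_2_4(row) for row in dense]
calib = [[1.0, 1.0, 1.0, 1.0], [1.0, 0.0, -1.0, 0.0]]

print(sparse)
print(reconstruction_error(dense, sparse, calib))
```

A Taylor-style objective would instead score each mask by its estimated effect on the training loss, which is why the two objectives can prefer different masks for the same layer.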

MOONSHOT's observation is that neither objective wins consistently across architectures and sparsity levels. Google reformulates pruning as joint multi-objective optimization, wrapped around existing pruners rather than replacing them. At 2:4 sparsity, C4 perplexity drops by up to 32.6% on Llama-3.2 and Llama-2. ViT at 70% sparsity gains 5+ points on ImageNet-1k. The inverse-Hessian computation was re-engineered to stay tractable at billion-parameter scale, so the efficiency of state-of-the-art pruners is preserved.

Key takeaways:
- The best single-objective choice itself depends on architecture and sparsity, so multi-objective is a reasonable engineering step.
- The wrapper form means SparseGPT, Wanda, and similar methods benefit directly.
- For teams compressing LLMs or ViTs onto edge devices, this is a shippable increment, not a paradigm-level break.

Source: MOONSHOT: A Framework for Multi-Objective Pruning of Vision and Large Language Models