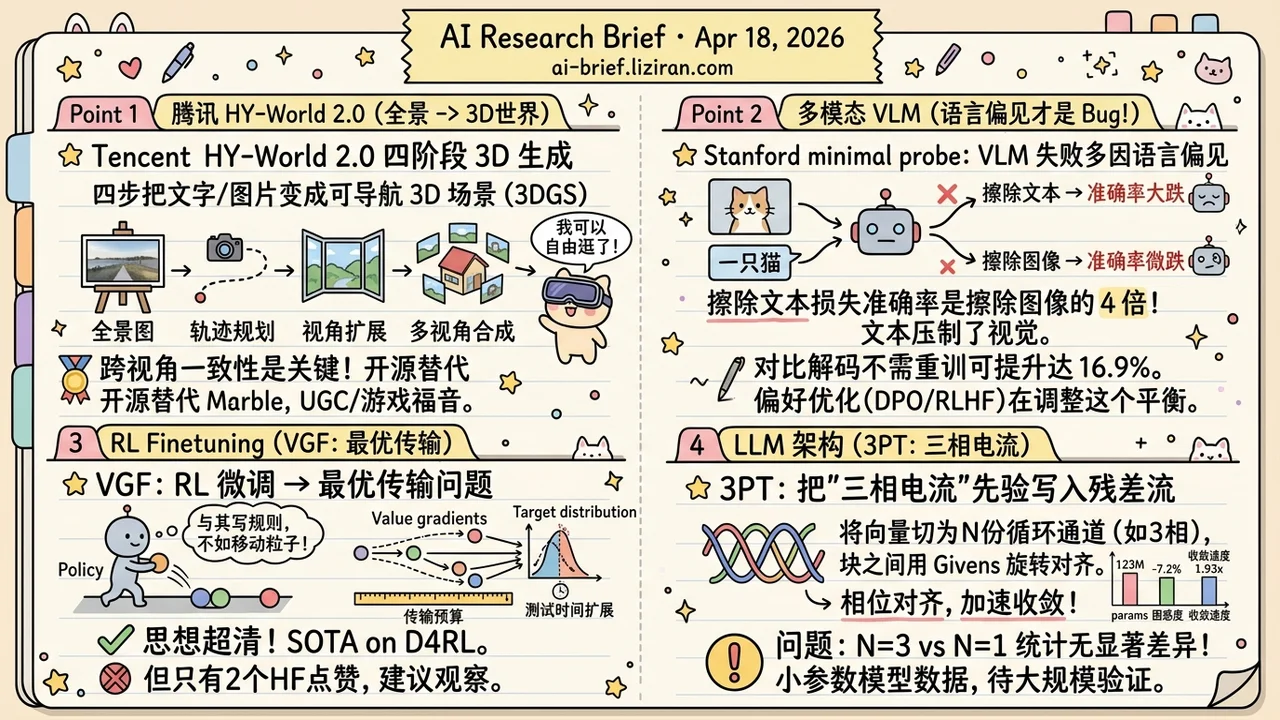

Today's Overview

- Tencent HY-World 2.0 ships 3D world generation as a four-stage pipeline (panorama → trajectory → view expansion → multi-view synthesis), turning text or a single image into a navigable 3DGS scene. It's the open-source answer to closed Marble.

- The bug in visual tasks is actually the language side. Stanford's centroid-replacement probe shows that erasing text information costs 4x more accuracy than erasing visual information across 7 VLMs. A contrastive decode built on that asymmetry gains up to 16.9% per task, no retraining.

- VGF reframes RL fine-tuning as optimal transport. Instead of parameterizing the policy, it moves reference-distribution particles along the value gradient, with a transport budget that maps cleanly onto test-time scaling. Clean idea, but only 2 HF upvotes — keep on the watchlist.

- 3PT bakes a "three-phase current" prior into the residual stream. Hidden vectors are sliced into cyclic channels and aligned block-to-block via Givens rotations. At 123M params, perplexity drops 7.2% over RoPE-only, but N=3 and N=1 are statistically indistinguishable.

Featured

01 Image Gen Tencent Breaks 3D World Gen Into Four Stages, Rivals Closed Marble

Tencent's HY-World 2.0 is a full engineering stack, not a single module. Feed it text or an image, get a scene you can freely navigate inside a 3D Gaussian Splatting engine. Four steps get you there: HY-Pano 2.0 generates a panoramic base, WorldNav plans camera trajectories, WorldStereo 2.0 extends views from keyframes while holding cross-frame consistency, and WorldMirror 2.0 does multi-view reconstruction.

The kit includes WorldLens, a render platform with IBL auto-lighting, collision detection, and character integration. The reported benchmarks put HY-World 2.0 at SOTA among open-source models, with quality comparable to the closed-source Marble.

WorldStereo 2.0 is the critical stage. The perennial problem in 3D world generation has been geometric drift and texture jumps when the view moves. Get cross-frame consistency right at stage three and multi-view reconstruction has clean input to work with. That's how HY-World supports long-range free navigation where single-image or short-video approaches don't. The 68 HF upvotes aren't hype — open-source 3D world models have been undersupplied, and a "panorama → trajectory → expansion → synthesis" pipeline is more useful to product teams than any single new architecture.

Key takeaways:

- 3D world generation crossed from research demo to usable engineering. Text or a single image produces a navigable 3DGS scene.
- The four-stage pipeline (panorama base → trajectory planning → view expansion → multi-view synthesis) is the real lesson, not any single module.
- Teams working on UGC, games, spatial computing, or embodied simulation should evaluate this stack first. Comparable to Marble without the license.

Source: HY-World 2.0: A Multi-Modal World Model for Reconstructing, Generating, and Simulating 3D Worlds

02 Multimodal The Bug in Visual Tasks Is Actually the Language Side

If a VLM fails a visual task, the visual module is the natural suspect. Stanford's minimal probe says otherwise. Replace each token in one modality with its nearest K-means centroid, which effectively erases that modality's structural content. Across 7 mainstream VLMs spanning three architectures, erasing the text side costs 4x more accuracy than erasing the visual side. This holds even for tasks that require visual reasoning. Language representations are suppressing vision.
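The centroid-replacement step is easy to picture in code. The sketch below is a minimal illustration, not the authors' implementation: the function names, the tiny pure-Python K-means, and the made-up 2-D "embeddings" are all assumptions, and a real probe would run on a model's actual token representations.

```python
import random

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(points, k, iters=20, seed=0):
    """Plain Lloyd's algorithm; returns k centroids as lists of floats."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda j: sq_dist(p, centroids[j]))
            clusters[nearest].append(p)
        for j, members in enumerate(clusters):
            if members:  # an empty cluster keeps its previous centroid
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return centroids

def replace_with_centroids(tokens, centroids):
    """Swap every token vector for a copy of its nearest centroid."""
    return [list(min(centroids, key=lambda c: sq_dist(t, c))) for t in tokens]

# Toy stand-in for one modality's token embeddings (made-up 2-D vectors).
text_tokens = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0],
               [10.0, 10.0], [10.0, 11.0], [11.0, 10.0]]
centroids = kmeans(text_tokens, k=2)
probed_tokens = replace_with_centroids(text_tokens, centroids)
```

After replacement the sequence keeps its length, but at most `k` distinct vectors remain, so the fine-grained structure of that modality is gone. Running the same task with and without this replacement, once per modality, gives the accuracy gap the probe measures.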

Build a text-centroid contrastive decode on that asymmetry and you get up to 16.9% accuracy gains per task, with no retraining. The split between fine-tuning strategies is more interesting: standard fine-tuned models gain +5.6% on average, preference-optimized models only +1.5%. That suggests preference optimization is already adjusting modal balance, perhaps without meaning to.
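The summary does not spell out the decoding rule, so the following is only a sketch of a generic contrastive-decoding score, under stated assumptions: `contrast_logits` stands for a second forward pass on centroid-replaced text tokens, and the `(1 + alpha) * base - alpha * contrast` form and the value of `alpha` are borrowed from common contrastive-decoding practice rather than taken from the paper. The logits are invented for illustration.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax over a list of floats."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - lse for x in logits]

def contrastive_scores(base_logits, contrast_logits, alpha=1.0):
    """Boost tokens the base pass prefers more than the contrast pass does."""
    base = log_softmax(base_logits)
    contrast = log_softmax(contrast_logits)
    return [(1 + alpha) * b - alpha * c for b, c in zip(base, contrast)]

def argmax(values):
    return max(range(len(values)), key=lambda i: values[i])

# Invented next-token logits over a 3-token vocabulary.
base_logits = [2.0, 1.8, 0.1]      # ordinary forward pass
contrast_logits = [2.0, 0.5, 0.1]  # assumed pass with centroid-replaced text

greedy_choice = argmax(base_logits)
contrastive_choice = argmax(contrastive_scores(base_logits, contrast_logits))
```

In this toy case greedy decoding picks token 0, while the contrastive score picks token 1, the token whose probability depends most on the information the contrast pass lacks. The adjustment happens purely at inference time, which is why no retraining is involved.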

The probe's real value isn't the numbers. It's that you can drop it onto any VLM as a diagnostic.

Key takeaways:

- VLM failures on visual tasks aren't noise. They're structural language bias, measurable and correctable.
- The centroid-replacement probe plugs into any VLM for diagnosis, no training needed.
- The gap between SFT and preference optimization suggests RLHF/DPO is already shaping modal competition. Factor this into VLM training choices.

03 Training Moving Particles Instead of Parameterizing Policies

Behavior-regularized RL has to balance "don't drift too far" against "actually improve on the reference." The two mainstream paths at LLM scale — reparameterized policy gradients and rejection sampling — are hard to scale and too conservative, respectively. VGF reframes the problem as optimal transport: don't parameterize the policy at all, just move the reference distribution's particles along the value gradient, as far as the transport budget allows. That budget also maps naturally to test-time scaling.
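A toy version of the particle picture may help. The sketch below is not the VGF algorithm: the 1-D value function, the mean squared displacement used as the transport cost, and every name in it are assumptions chosen to show the shape of the idea, namely that samples from a reference distribution are pushed along the value gradient until a budget is spent.

```python
import random

TARGET = 3.0  # peak of the toy value function (hypothetical)

def value(x):
    return -(x - TARGET) ** 2

def value_grad(x):
    """Gradient of the toy value function with respect to x."""
    return -2.0 * (x - TARGET)

def transport(particles, budget, step=0.05, max_steps=1000):
    """Move particles uphill on value until the next step would exceed budget."""
    start = list(particles)
    current = list(particles)
    for _ in range(max_steps):
        proposal = [x + step * value_grad(x) for x in current]
        # Assumed cost: mean squared displacement from the reference samples.
        cost = sum((p - s) ** 2 for p, s in zip(proposal, start)) / len(start)
        if cost > budget:
            break
        current = proposal
    return current

def mean_value(particles):
    return sum(value(x) for x in particles) / len(particles)

rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(200)]  # reference "policy" samples
small_budget = transport(reference, budget=0.5)
large_budget = transport(reference, budget=4.0)
```

No policy parameters are updated here; only the samples move. A larger budget lets the particles travel further from the reference and reach higher value, which is the sense in which the budget behaves like a test-time compute dial.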

The paper reports SOTA on D4RL, OGBench, and LLM RL tasks. The idea is clean, but community attention on HF is thin: 2 upvotes. Stability and engineering cost at large-scale LLM RL fine-tuning need more reproductions before anyone should swap out a production pipeline.

Key takeaways:

- Frames behavior-regularized RL as optimal transport, skipping explicit policy parameterization.
- Transport budget maps directly onto test-time scaling, which is conceptually neat.
- Low community attention, unreplicated benchmarks. Add to the watchlist, don't replace your RLHF stack yet.

Source: Reinforcement Learning via Value Gradient Flow

04 Architecture Three-Phase Current as a Residual-Stream Prior

3PT slices the hidden vector into N equal cyclic channels and uses Givens rotations between each attention and FFN block to keep phase alignment. The authors cite three-phase AC as the analogy — at N=3 the three phases cancel, leaving no anti-correlated pairs — and inject a fixed r(p)=1/(p+1) position profile into a 1D DC subspace orthogonal to the channels, composed with RoPE.
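The three ingredients named above can each be checked numerically. This is a sketch of the underlying math only, with hypothetical function names; how 3PT actually wires the rotations into the residual stream is not described here and is not reproduced.

```python
import math

def three_phase(theta, n=3):
    """n cosine phases spaced evenly around the circle, starting at theta."""
    return [math.cos(theta + 2 * math.pi * k / n) for k in range(n)]

def givens_rotate(vec, i, j, angle):
    """Rotate coordinates i and j of vec by angle; other coordinates are untouched."""
    c, s = math.cos(angle), math.sin(angle)
    out = list(vec)
    out[i] = c * vec[i] - s * vec[j]
    out[j] = s * vec[i] + c * vec[j]
    return out

def position_profile(p):
    """The fixed profile r(p) = 1 / (p + 1) quoted in the text."""
    return 1.0 / (p + 1)

phases = three_phase(0.7)             # the three phases sum to ~0 for any theta
hidden = [3.0, 4.0, 1.0]
rotated = givens_rotate(hidden, 0, 1, 0.3)
profile = [position_profile(p) for p in range(4)]
```

The phases summing to zero is the "three-phase cancellation" in the analogy. A Givens rotation changes direction within one coordinate plane while preserving the vector's norm, so it can realign channels without rescaling them. The position profile decays from 1 at position 0 to 0.25 at position 3.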

At 123M parameters on WikiText-103, perplexity drops 7.2% over a matched RoPE-only baseline. The extra cost is 1,536 parameters, roughly 0.001% of the model. Convergence is 1.93x faster.

Validation scale is the catch. 123M params, one dataset, three seeds. N=3 and N=1 are statistically indistinguishable, and the authors concede N looks more like a parameter-sharing knob than an optimum. Architecture priors tend to look clean at small scale and dilute as you scale up. RoPE, Mamba, and MoE each needed two or three years of reproduction before anyone trusted them.

Key takeaways:

- The mechanism is geometric: slice residual stream by phase, rotate between blocks. No new modules added.
- Small-scale numbers look good, but N=3 vs N=1 being indistinguishable leaves the load-bearing claim unsettled.
- File under "worth tracking." Wait for larger-scale and multi-dataset reproductions before adoption.

Source: Three-Phase Transformer