Today's Overview

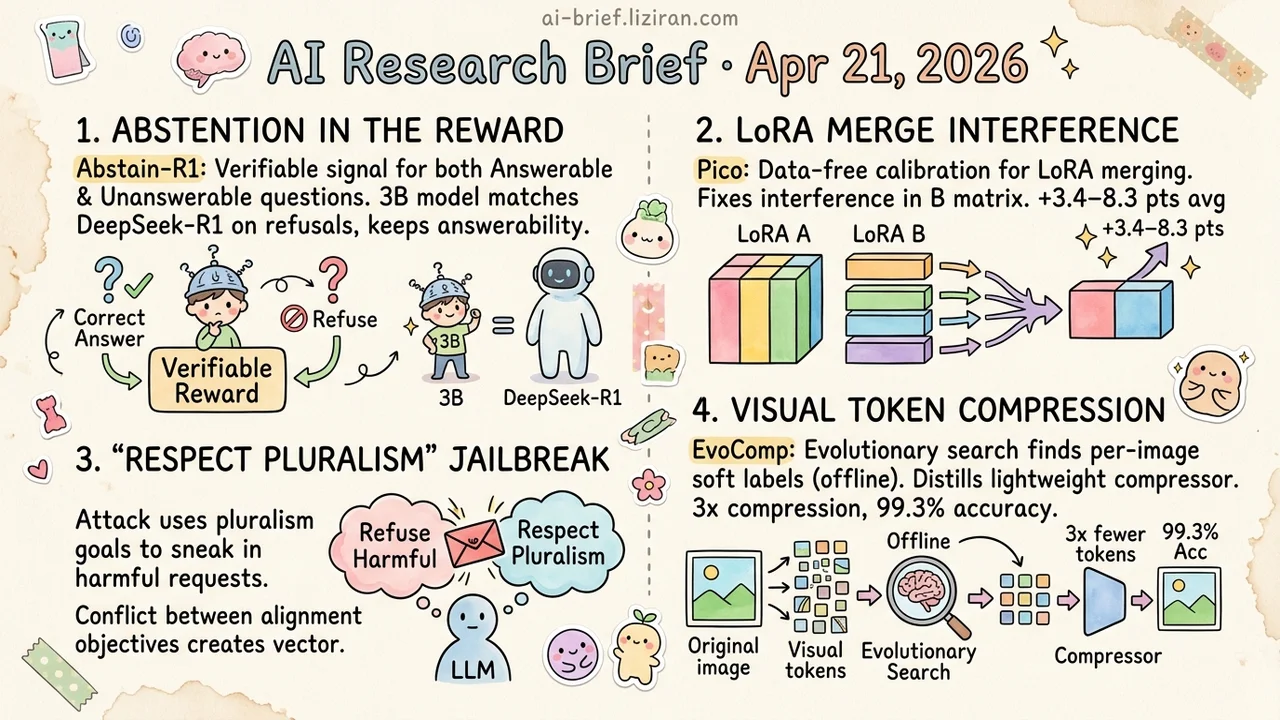

- Write Abstention Into the Reward. Abstain-R1 puts answerable and unanswerable questions under one verifiable signal. A 3B model matches DeepSeek-R1 on three refusal benchmarks without regressing on answerable ones.

- LoRA Merge Interference Lives in the B Matrix. Pico does data-free calibration as a plug-in over Task Arithmetic, TIES, and TSV-M, adding 3.4–8.3 points on average across eight benchmarks.

- "Respect Pluralism" Becomes a Jailbreak Channel. Wrap harmful requests in moral-gray-zone framing and jailbreak success rates climb across mainstream LLMs and guardrails. This is the first time a collision between alignment objectives has been used as an attack vector.

- Visual Token Compression Changes Strategy. EvoComp runs evolutionary search for per-image soft labels offline, then distills a lightweight compressor. 3x compression keeps 99.3% accuracy.

Featured

01 Reasoning: Write "I Don't Know" Into the Reward and 3B Catches R1

RL fine-tuning reliably boosts reasoning. It also has a less-discussed side effect: models get more willing to answer. Faced with missing information or unanswerable questions, they guess or fabricate instead of refusing. Common fixes bolt a filter on top or train generic refusal templates. Neither addresses the root problem. Models still can't tell when they shouldn't answer, and nobody verifies whether their clarifications point at the actual missing information.

Abstain-R1 puts both sides under one verifiable reward. Answerable questions verify correctness. Unanswerable ones verify both the refusal behavior and the semantic alignment of the clarification. A 3B model matches DeepSeek-R1 on Abstain-Test, Abstain-QA, and SelfAware without losing ground on answerable questions.
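The shape of such a reward can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's reward: the function name `unified_reward`, the `"i don't know"` refusal marker, the token-overlap check standing in for "semantic alignment", and the 0.5 partial credit are all hypothetical choices.

```python
import re
from typing import Optional, Set


def _tokens(text: str) -> Set[str]:
    """Lowercased word tokens; a crude stand-in for a real semantic matcher."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def unified_reward(
    answerable: bool,
    response: str,
    gold_answer: Optional[str] = None,
    missing_info: Optional[str] = None,
    refusal_marker: str = "i don't know",  # hypothetical refusal convention
    overlap_threshold: float = 0.5,        # hypothetical cutoff
) -> float:
    """Return 1.0 for the desired behavior, 0.5 for a bare refusal, else 0.0."""
    text = response.lower()
    refused = refusal_marker in text
    if answerable:
        # Answerable: reward correctness only; refusing a solvable question earns nothing.
        if refused or gold_answer is None:
            return 0.0
        return 1.0 if gold_answer.lower() in text else 0.0
    # Unanswerable: the model must refuse, and the clarification must name what is missing.
    if not refused:
        return 0.0
    wanted = _tokens(missing_info or "")
    if not wanted:
        return 0.5
    overlap = len(wanted & _tokens(response)) / len(wanted)
    return 1.0 if overlap >= overlap_threshold else 0.5
```

Both branches return a value from the same scale, so a single RL objective can see them together. A production version would presumably replace the token overlap with a stronger verifier.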

For teams running RL fine-tuning in production, this matters. Abstention shouldn't be a layer added after the fact. It belongs in the reward function alongside correctness. How "semantically aligned clarification" turns into a verifiable rule in more open-ended domains needs a full-paper read to confirm.

Key takeaways:

- RL fine-tuning makes models over-eager to answer, and external filters or generic refusal templates only treat symptoms.
- Abstention and clarification fit into the same verifiable reward as correctness, optimizing jointly without conflict.
- A 3B model with the right reward signal matches DeepSeek-R1's refusal behavior. Scale isn't the only answer.

Source: Abstain-R1: Calibrated Abstention and Post-Refusal Clarification via Verifiable RL

02 Training: LoRA Merging's Real Bottleneck Is B, Not ΔW

Merging multiple LoRA adapters into one typically loses accuracy. The field has treated this as a task-conflict problem and built fusion algorithms around ΔW=BA as a whole. This paper makes a more specific observation: A and B play asymmetric roles. The output-side B matrix reuses a small set of shared directions across tasks, and merging stacks those directions until task-specific information gets buried. A stays task-specific and needs no adjustment.

Pico's approach is simple. Before merging, compress the over-shared directions in B. After merging, rescale globally. No data required. It acts as a plug-in over Task Arithmetic, TIES, TSV-M, and other existing merge methods, adding 3.4–8.3 points on average across eight math, code, finance, and medical benchmarks. More interesting still: the merged adapter sometimes beats a single LoRA trained on all task data combined.
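The two steps (calibrate B before merging, rescale after) can be sketched with NumPy. This is an illustrative sketch, not Pico's actual procedure: estimating shared directions from an SVD of the stacked B matrices, the `top_k` and `shrink` parameters, and the norm-matching rescale are all assumptions made here for the example.

```python
import numpy as np


def calibrate_and_merge(adapters, top_k=1, shrink=0.5):
    """adapters: list of (B, A) pairs, B of shape (d_out, r), A of shape (r, d_in).

    Returns a merged update of shape (d_out, d_in).
    """
    # 1) Estimate output-side directions reused across tasks: the top
    #    left-singular vectors of all B matrices stacked side by side.
    stacked = np.concatenate([B for B, _ in adapters], axis=1)
    U, _, _ = np.linalg.svd(stacked, full_matrices=False)
    shared = U[:, :top_k]  # (d_out, top_k)

    # 2) Shrink each B along the shared directions; A is left untouched.
    d_out, d_in = adapters[0][0].shape[0], adapters[0][1].shape[1]
    merged = np.zeros((d_out, d_in))
    for B, A in adapters:
        B_shared = shared @ (shared.T @ B)     # part of B inside the shared subspace
        B_cal = B - (1.0 - shrink) * B_shared  # keep only a `shrink` fraction of it
        merged += B_cal @ A                    # Task Arithmetic-style sum of updates

    # 3) Global rescale: match the mean Frobenius norm of the individual updates.
    target = np.mean([np.linalg.norm(B @ A) for B, A in adapters])
    norm = np.linalg.norm(merged)
    return merged * (target / norm) if norm > 0 else merged
```

No training data appears anywhere in the function, which is what "data-free" means in practice. With `shrink=1.0` the calibration is a no-op and the result is a rescaled plain sum.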

Key takeaways:

- The main interference in LoRA merging comes from B, not ΔW. A and B should be handled separately.
- Pico is a data-free plug-in calibration that stacks on existing merge methods instead of replacing them.
- Teams maintaining multiple LoRA adapters can try it directly: small change, no retraining, low risk.

Source: Crowded in B-Space: Calibrating Shared Directions for LoRA Merging

03 Safety: When "Respect Pluralism" Becomes the Jailbreak

Pluralism alignment has been one of the dominant directions in alignment research for the past two years. The goal is to teach models to stay neutral in moral gray zones and respect differing positions. This ACL paper flips that objective into an attack surface. The researchers built 10.3K "value-ambiguous" and "value-conflicting" scenarios that wrap harmful requests in moral-pluralism discussion. Jailbreak success rates rose significantly on mainstream LLMs and guardrail models alike.

The interesting part: the problem isn't insufficient safety training. "Stay open to moral pluralism" and "refuse harmful output" are two alignment goals in natural tension. The more seriously a model executes the first, the more exposed the second becomes. The paper explicitly weaponizes this internal friction between alignment objectives as an attack vector. This is the first time jailbreak research has framed it this way.

Key takeaways:

- Alignment isn't a single axis. Conflicts between objectives are themselves an attack surface.
- The more "responsible" a pluralism-aligned model is, the thinner its traditional jailbreak defenses become.
- Guardrail and red-team teams need to add "value-framing disguise" as a new test category.

Source: Jailbreaking Large Language Models with Morality Attacks

04 Efficiency: Visual Token Compression, Search First Then Imitate

MLLM visual token compression mostly follows heuristics: prune by attention weight or similarity, and set the compression ratio by experience. EvoComp splits the problem into two stages. First, an offline evolutionary search finds per-image soft labels that mark which tokens to keep for minimum output loss. Then a lightweight compressor trains to imitate those labels. Essentially: build the teacher offline, distill the student online.

Training adds difficulty balancing and semantic separation constraints, pushing kept and dropped tokens apart in meaning. At 3x compression the model retains 99.3% accuracy, with a 1.6x inference speedup on mobile. The paper was accepted at CVPR.
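The offline search stage can be illustrated with a toy evolutionary loop. This is a sketch under heavy assumptions, not EvoComp's algorithm: the swap mutation, the elitist selection, the `loss_fn` callback, and the choice to define a soft label as "fraction of elite masks that keep the token" are all hypothetical.

```python
import random


def evolve_soft_labels(n_tokens, keep, loss_fn, pop_size=20, generations=30, seed=0):
    """Search keep-masks with low loss; return per-token keep frequencies in [0, 1].

    Requires 0 < keep < n_tokens. loss_fn takes a sorted tuple of kept indices.
    """
    rng = random.Random(seed)
    # Each individual is a sorted tuple of kept token indices.
    population = [tuple(sorted(rng.sample(range(n_tokens), keep))) for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=loss_fn)
        elite = population[: pop_size // 2]
        children = []
        for parent in elite:
            kept = set(parent)
            dropped = [i for i in range(n_tokens) if i not in kept]
            # Mutation: swap one kept token for one dropped token.
            kept.remove(rng.choice(sorted(kept)))
            kept.add(rng.choice(dropped))
            children.append(tuple(sorted(kept)))
        population = elite + children
    population.sort(key=loss_fn)
    elite = population[: pop_size // 2]
    # Soft label = fraction of elite masks that keep each token.
    return [sum(i in mask for mask in elite) / len(elite) for i in range(n_tokens)]
```

With 12 tokens and `keep=4`, this corresponds to 3x compression. In a real pipeline `loss_fn` would measure the change in model output, and the resulting soft labels would become training targets for the lightweight compressor.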

Key takeaways:

- Offline search plus online distillation is worth evaluating for high-resolution and multi-image deployment.
- Losing only 0.7% of accuracy at 3x compression is a solid number for token compression.
- 1.6x mobile speedup is moderate. The real payoff is in image- and video-heavy multimodal scenarios.

Also Worth Noting

Today's Observation

HeLa-Mem and OASIS landed together today. One works on agent long-term memory, the other on streaming video reasoning. Different domains, but both refuse the same default: compress history into embedding vectors, retrieve by cosine similarity. HeLa-Mem organizes memory as a graph with Hebbian associations, so "things that appeared together" get retrieved through connections rather than similarity. OASIS organizes the stream as a hierarchy of events, pulling evidence from an event tree on demand instead of expanding context.
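Retrieval through co-occurrence links can be sketched as a small weighted graph. This is a toy illustration of the Hebbian idea ("items seen together get a stronger link"), not HeLa-Mem's implementation; the `AssociativeMemory` class, its method names, and the additive update rule are assumptions.

```python
from collections import defaultdict
from itertools import combinations


class AssociativeMemory:
    """Toy memory graph: items that appear together get a stronger link."""

    def __init__(self, learning_rate=1.0):
        self.learning_rate = learning_rate
        self.weights = defaultdict(lambda: defaultdict(float))

    def observe(self, items):
        """Strengthen the edge between every pair of items seen in one episode."""
        for a, b in combinations(sorted(set(items)), 2):
            self.weights[a][b] += self.learning_rate
            self.weights[b][a] += self.learning_rate

    def recall(self, cue, top_k=3):
        """Return the items most strongly linked to the cue, strongest first."""
        neighbors = self.weights.get(cue, {})
        ranked = sorted(neighbors.items(), key=lambda kv: (-kv[1], kv[0]))
        return [item for item, _ in ranked[:top_k]]
```

Recall here never compares content, only link strength, so an item can be retrieved even when it looks nothing like the cue.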

The techniques don't resemble each other, but they share a judgment. As input scale keeps growing (longer conversations, longer videos), vector similarity retrieval as the only recall mechanism isn't enough anymore. Embeddings themselves aren't the problem. Rather, when information is sparse, redundancy is unbounded, and relevant evidence scatters across time, similarity stops being the right basis for recall.

For teams building long-conversation agents and long-video understanding, a practical suggestion: add a layer of structured retrieval on top of vector retrieval. That could be an association graph, an event index, or even simple time-segment tags. Let structure narrow the candidate set first, then let vector retrieval do fine-grained matching on the smaller pool. Piling on more embedding dimensions or expanding context alone has fast-diminishing ROI. A minimum experiment for this week: add a "last N events/sessions" structural channel to your RAG pipeline, run it alongside vector retrieval, and measure what fraction of the queries that vector top-K misses the structural channel rescues.
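A rough harness for that experiment might look like the following. It is a sketch with assumed names and data shapes: `memory` is a chronological list of `(doc_id, embedding)` pairs, each query carries a known gold `doc_id`, and "rescue rate" is defined here as rescued queries divided by queries that vector top-K missed.

```python
import math


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def vector_top_k(query_vec, memory, k):
    """memory: list of (doc_id, embedding) in chronological order."""
    ranked = sorted(memory, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc_id for doc_id, _ in ranked[:k]]


def recent_n(memory, n):
    """Structural channel: the last n items, regardless of similarity."""
    return [doc_id for doc_id, _ in memory[-n:]] if n > 0 else []


def rescue_rate(queries, memory, k=2, n=2):
    """Among queries that vector top-k misses, the fraction the recency channel finds."""
    missed = rescued = 0
    recent = recent_n(memory, n)
    for query_vec, gold_id in queries:
        if gold_id in vector_top_k(query_vec, memory, k):
            continue
        missed += 1
        if gold_id in recent:
            rescued += 1
    return rescued / missed if missed else 0.0
```

A rate near zero suggests recency adds little for your workload. A high rate suggests the structural channel is recovering evidence that similarity alone cannot reach.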