Today's Overview

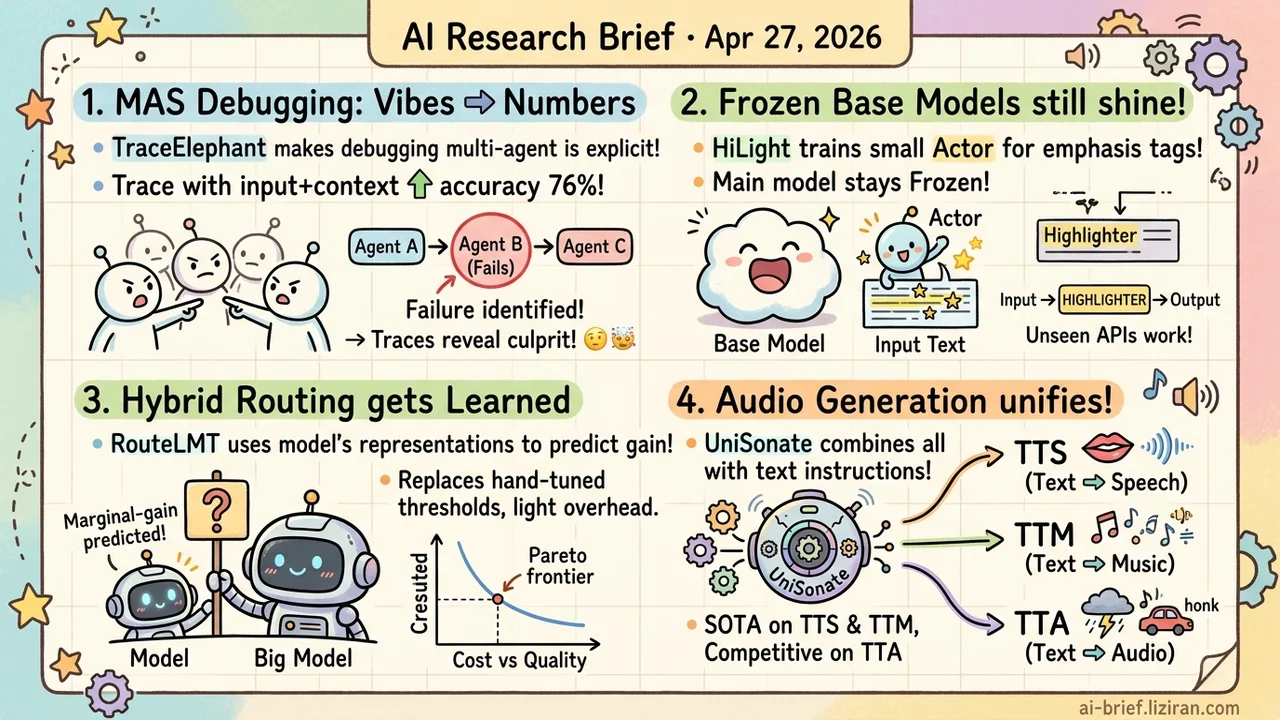

- Multi-Agent Debugging Moves from Vibes to Numbers. TraceElephant turns failure attribution into an explicit benchmark, with full execution traces lifting attribution accuracy 76% over agent-output-only views.

- Frozen Base Models Can Still Surface Key Evidence. HiLight trains a side Actor that adds emphasis tags to the input; the main model stays frozen, and the learned policy zero-shots to closed-source APIs.

- Hybrid Routing Becomes Something the Model Learns. RouteLMT replaces hand-tuned escalation thresholds with marginal-gain prediction read off the small model's own token representations, though so far it is validated only on translation.

- Audio Generation Catches Up on the Unified-Architecture Playbook. UniSonate packs text-to-speech (TTS), text-to-music (TTM), and text-to-audio (TTA) into one text-instruction model, hitting SOTA on the first two but only "competitive" on TTA, the typical fault line for unification.

Featured

01 Agent: A Measuring Stick for Multi-Agent Debugging

The hardest part of running a multi-agent system isn't task failure itself. It's not knowing which agent, at which step, derailed the run. Past MAS evaluation tracked only task-level success, treating the whole system as a black box. When something broke, debugging meant reading traces by hand.

TraceElephant makes attribution explicit. Given a failed multi-agent interaction, can a system pinpoint the responsible agent and the decisive step? A key finding: providing the full input-plus-context trace lifts attribution accuracy 76% over agent-output-only views. That gap means existing benchmarks feed evaluators much less than developers actually rely on to diagnose problems.
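To make "attribution accuracy" concrete, here is a minimal sketch of how agent-level and step-level accuracy could be scored. The `Attribution` type, the function name, and the toy data are all hypothetical and not taken from the benchmark; the paper's exact metric definitions may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attribution:
    """A (responsible agent, decisive step) pair for one failed run (hypothetical type)."""
    agent: str
    step: int


def attribution_accuracy(predictions, labels):
    """Return (agent-level accuracy, step-level accuracy).

    Step-level credit requires both the agent and the step index to match.
    """
    if len(predictions) != len(labels) or not labels:
        raise ValueError("predictions and labels must be non-empty and aligned")
    n = len(labels)
    agent_hits = sum(p.agent == g.agent for p, g in zip(predictions, labels))
    step_hits = sum(p == g for p, g in zip(predictions, labels))
    return agent_hits / n, step_hits / n


# Toy data for illustration only, not benchmark results.
labels = [
    Attribution("planner", 3),
    Attribution("coder", 7),
    Attribution("critic", 2),
    Attribution("coder", 5),
]
predictions = [
    Attribution("planner", 3),  # right agent, right step
    Attribution("coder", 6),    # right agent, wrong step
    Attribution("planner", 2),  # wrong agent
    Attribution("coder", 5),    # right agent, right step
]

agent_acc, step_acc = attribution_accuracy(predictions, labels)
print(f"agent-level: {agent_acc:.2f}, step-level: {step_acc:.2f}")
```

Scoring the two levels separately shows whether a method finds the right agent but misses the decisive step, which a single combined number would hide.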

Benchmark coverage stays limited, and real-world failures will be messier. But moving failure attribution from "feel" to a quantifiable metric clears the way for systematic comparison of attribution methods.

Key takeaways:

- Multi-agent debugging shifts from black box toward measurable; for teams shipping MAS products, this is infrastructure-level tooling.

- Full execution traces beat output-only views by 76% on attribution accuracy, exposing what current benchmarks miss versus what developers actually need.

- The benchmark reflects debug scenarios, not production environments; method-level usability still has to be checked in your own setting.

Source: Seeing the Whole Elephant: A Benchmark for Failure Attribution in LLM-based Multi-Agent Systems

02 Reasoning: Highlight the Evidence, Leave the Base Model Alone

HiLight separates evidence selection from reasoning. A lightweight Emphasis Actor adds emphasis tags to key spans in the raw input, and the main model stays fully frozen, running inference only on the annotated version. The Actor trains with RL, with the reward signal coming straight from the Solver's task result. No evidence-level labels, no access to base-model weights.
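The split between tagging and solving can be sketched as follows. This is an illustration under assumptions, not the paper's implementation: the `<em>` tag format, the character-span interface, the exact-match reward, and the `toy_solver` stand-in for a frozen LLM are all hypothetical.

```python
def add_emphasis(text, spans, open_tag="<em>", close_tag="</em>"):
    """Wrap each half-open (start, end) character span of `text` in emphasis tags.

    Spans must be non-empty, in range, and non-overlapping.
    Text outside the spans is left unchanged. The tag format is an assumption.
    """
    out = []
    cursor = 0
    for start, end in sorted(spans):
        if start < cursor or end > len(text) or start >= end:
            raise ValueError(f"invalid or overlapping span: {(start, end)}")
        out.append(text[cursor:start])
        out.append(open_tag + text[start:end] + close_tag)
        cursor = end
    out.append(text[cursor:])
    return "".join(out)


def task_reward(solver, annotated_input, gold_answer):
    """Return 1.0 if the frozen solver's answer matches the gold answer, else 0.0."""
    return 1.0 if solver(annotated_input).strip() == gold_answer else 0.0


def toy_solver(prompt):
    """Stand-in for a frozen LLM: answers with the first emphasized span, if any."""
    if "<em>" in prompt:
        return prompt.split("<em>", 1)[1].split("</em>", 1)[0]
    return ""


text = "The meeting moved to Friday. Lunch is at noon."
start = text.index("Friday")
annotated = add_emphasis(text, [(start, start + len("Friday"))])

reward_with_tags = task_reward(toy_solver, annotated, "Friday")
reward_without_tags = task_reward(toy_solver, text, "Friday")
print(annotated)
print(reward_with_tags, reward_without_tags)
```

In an RL setup, a reward like this would be the only training signal for the Actor's span choices; the solver is only ever called, never updated.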

On long-context QA and sequential recommendation, the method consistently beats prompt-based and automated prompt-optimization baselines. The bigger result: the learned policy zero-shots to unseen Solvers, including closed-source API models. For engineering teams, that means it can be stacked as a preprocessor in front of GPT or Claude APIs, with the main pipeline untouched. Actor training cost and added inference latency still need to be checked against the full paper.

Key takeaways:

- Highlighting key spans loses less information than compressing or rewriting input, worth evaluating for long-context use cases.

- Training depends only on Solver task rewards, removing the need for evidence-level annotations and lowering the data bar.

- The policy zero-shots to unseen base models, so it can sit in front of commercial APIs without replacing the underlying model.

Source: Learning Evidence Highlighting for Frozen LLMs

03 Efficiency: Learn the Escalation Threshold Instead of Tuning It

Translation services often run hybrid architectures: small model handles the bulk, a fraction of requests escalate to the big one. Routing rules typically come from hand-tuned thresholds on language, length, and user tier. RouteLMT reframes the choice as budget allocation. The right thing to predict isn't "how hard is this request to translate." It's "how much extra quality does the big model deliver over the small one." Marginal gain is the signal to use.

The approach probes the small model's own internal representations on prompt tokens to estimate that gain. No external quality predictor, no need to run the big model first. The paper reports beating heuristic and absolute-quality-estimation baselines on the quality-budget Pareto frontier. The authors also flag regression risk, where some misrouted requests come out worse than the small model alone, and add a guarded variant as a fallback.
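The budget-allocation framing can be sketched as a ranking problem: escalate the requests with the largest predicted gain until the budget runs out. The function below is a rough illustration, not RouteLMT's method. It assumes the per-request gain estimates already exist (the probe that produces them is out of scope here), and the `min_gain` guard is a hypothetical stand-in for the paper's guarded variant, whose details are not given above.

```python
def route_by_marginal_gain(predicted_gains, budget_fraction, min_gain=0.0):
    """Decide which requests to escalate to the large model.

    predicted_gains: per-request estimate of (large-model quality minus
        small-model quality); assumed to come from some upstream predictor.
    budget_fraction: maximum share of requests that may be escalated, in [0, 1].
    min_gain: hypothetical guard; a request is escalated only if its predicted
        gain exceeds this value, even when budget remains.
    Returns a list of booleans aligned with the input, True meaning "escalate".
    """
    if not 0.0 <= budget_fraction <= 1.0:
        raise ValueError("budget_fraction must be between 0 and 1")
    max_escalations = int(len(predicted_gains) * budget_fraction)
    # Rank request indices by predicted gain, largest first.
    ranked = sorted(
        range(len(predicted_gains)),
        key=lambda i: predicted_gains[i],
        reverse=True,
    )
    escalate = [False] * len(predicted_gains)
    for i in ranked[:max_escalations]:
        if predicted_gains[i] > min_gain:
            escalate[i] = True
    return escalate


# Toy gain estimates for six requests; values are made up for illustration.
gains = [0.30, -0.05, 0.02, 0.12, 0.00, -0.20]

plain = route_by_marginal_gain(gains, budget_fraction=0.5)
guarded = route_by_marginal_gain(gains, budget_fraction=0.5, min_gain=0.05)
print(plain)
print(guarded)
```

With the stricter guard, the request with a predicted gain of 0.02 stays on the small model even though budget is available, trading a little potential quality for lower regression risk.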

All experiments stay on translation, a relatively structured task. Whether this transfers to code generation, dialogue, or open long-form text is an open question.

Key takeaways:

- Recasting "what to escalate" from hand-tuned thresholds to learned marginal-gain prediction is directly applicable for teams already running hybrid serving.

- The signal comes only from the small model's token representations, with no extra predictor network and no need to query the big model first; deployment overhead is light.

- Open-domain validation outside translation is missing; whether the signal generalizes is the next thing to watch.

Source: RouteLMT: Learned Sample Routing for Hybrid LLM Translation Deployment

04 Multimodal: Speech, Music, and Sound Effects in One Model

Audio generation has long been split. TTS, TTM, and TTA each carry their own control style and data format. UniSonate folds all three into one flow-matching framework driven by text instructions. The playbook tracks what image and video models did with unified architectures the past two years: a dynamic token-injection mechanism projects unstructured environmental audio into a structured temporal latent space, and multi-stage curriculum learning eases optimization conflict across tasks.

Results land where you'd guess. SOTA on TTS and TTM (WER 1.47%, SongEval Coherence 3.18), but only "competitive fidelity" on TTA. That's the typical sacrifice spot for unified models, and the real test of whether this path can ship. The paper also reports positive transfer from joint training, with prosody and structural coherence improving over single-task baselines. That may matter more in the long run than any single-point metric.

Audio quality isn't really a metrics question. Teams working on this should listen to the demo page before deciding whether the loss is acceptable.

Key takeaways:

- Audio generation is replaying the unified-architecture move from image and video, with text instructions as the universal control interface.

- TTS and TTM hit SOTA, but TTA stays only "competitive," the typical sacrifice spot for unified models. Test on your own use case before adoption.

- Joint-training positive transfer across tasks may matter more long-term than the single-point metrics.

Source: UniSonate: A Unified Model for Speech, Music, and Sound Effect Generation with Text Instructions

Also Worth Noting

Today's Observation

HiLight, CFB (2604.22335), and RouteLMT share no technical overlap. One adds emphasis to key spans on the input side. One imposes watermark-style constraints during decoding. One handles request routing between small and large models. They share one engineering assumption: the base LLM is off-limits. Three teams picked three different layers to intervene at, all defaulting to frozen weights.

This isn't taste convergence among researchers. It's a deployment reality. Base-model migration costs too much, and fine-tuning still risks degradation without controllable fixes. "Don't touch the base" has become the default for many teams. Research output is starting to cluster around the periphery: the input layer, the decoding layer, and the routing layer of an essentially API-like main model.

Engineering teams have to read papers through this lens. When evaluating whether a method can ship, first check whether it assumes you can modify the base. If it does, every benchmark number presumes a fine-tuning loop that you would still have to build and close yourself. If the base stays frozen, estimate the added cost and latency of stacking the method in front of an existing commercial API. Next time you're reviewing external research, put "is the base frozen" at the top of the checklist.