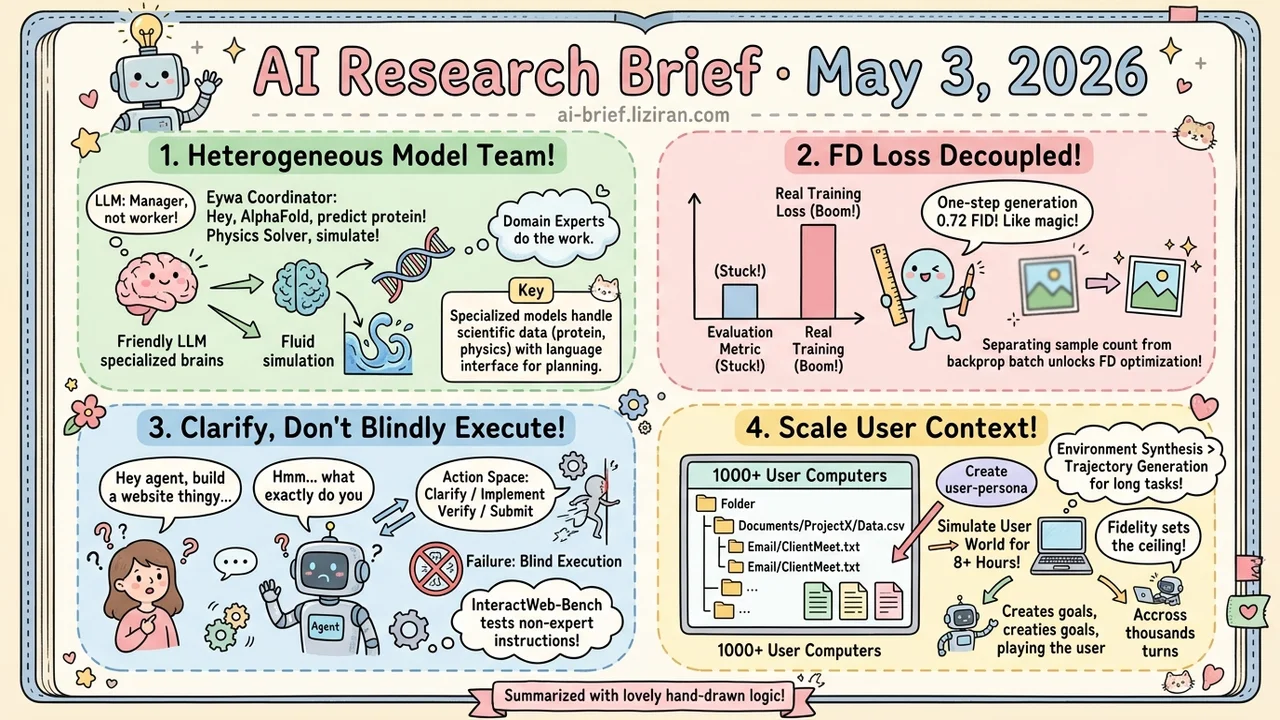

Today's Overview

- Heterogeneous scientific foundation model collaboration: Eywa pulls LLMs back from "general solver" to coordinator, handing protein structure and physics simulation tasks to domain-specialized predictors.

- FD estimation decoupled from gradient batches: Fréchet Distance, stuck as an evaluation metric for years, becomes a real training loss. With FD-loss added in post-training, a one-step generator hits 0.72 FID on ImageNet 256×256.

- Ambiguous instructions plus an interactive action space: InteractWeb-Bench makes "actively clarify intent" a required capability, and frontier multimodal agents fall into blind execution under it.

- Production-grade "world as the agent sees it": Synthetic Computers at Scale builds 1000 user-specific computers with 8+ hour simulations, shifting the long-horizon training bottleneck from trajectory generation to environment synthesis.

Featured

01 LLMs Step Aside When Domain Models Know Better

AlphaFold predicts protein structures, neural operators handle physics simulations, GNNs compute molecular properties. Domain-specific foundation models exist beyond LLMs, but most agent systems still assume everything has to fit through natural language. Eywa flips this. Rather than forcing LLMs to chew structured scientific data, it adds a "language reasoning interface" to predictors already trained on specialized data, leaving planning and decision-making to the LLM while domain models do the actual prediction.

The framework offers three integration modes: single-agent replacement (EywaAgent), drop-in for existing multi-agent systems (EywaMAS), and a planner-orchestrated version (EywaOrchestra). That covers a migration path from light retrofit to full orchestration. Experiments span physics, life sciences, and social sciences, with gains on structured-data tasks and reduced reliance on pure language reasoning.
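The coordinator pattern described above can be sketched in a few lines. This is a minimal illustration, not Eywa's API: the `Coordinator`, `Task`, `fold_protein`, and `llm_answer` names are hypothetical, and the predictors are stubs that return dummy values. The idea it shows is that structured payloads reach a domain predictor unchanged, and the LLM only handles what no registered predictor covers.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical types for illustration; none of these names come from the paper.
Predictor = Callable[[dict], dict]


@dataclass
class Task:
    domain: str    # e.g. "protein_structure" or "physics_sim"
    payload: dict  # structured input, passed through without text serialization


class Coordinator:
    """Routes structured tasks to domain predictors, falling back to the LLM."""

    def __init__(self, llm_fallback: Predictor) -> None:
        self._predictors: Dict[str, Predictor] = {}
        self._llm_fallback = llm_fallback

    def register(self, domain: str, predictor: Predictor) -> None:
        self._predictors[domain] = predictor

    def run(self, task: Task) -> dict:
        predictor = self._predictors.get(task.domain, self._llm_fallback)
        result = predictor(task.payload)
        # Record which component answered so handoff errors can be traced later.
        return {"handled_by": getattr(predictor, "__name__", "unknown"), **result}


def fold_protein(payload: dict) -> dict:
    # Stub standing in for a structure predictor; returns a dummy value.
    return {"num_residues": len(payload["sequence"])}


def llm_answer(payload: dict) -> dict:
    # Stub standing in for a language-model call.
    return {"text": "answered in natural language"}


coordinator = Coordinator(llm_fallback=llm_answer)
coordinator.register("protein_structure", fold_protein)
print(coordinator.run(Task("protein_structure", {"sequence": "MKTAYIAK"})))
```

Tagging each result with `handled_by` is a design choice of this sketch: it gives a place to start when tracing errors that accumulate across model handoffs.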

One caveat worth keeping in mind: the abstract doesn't cover failure modes or error accumulation across heterogeneous model handoffs. Reliability of cross-domain agent systems is harder to read than benchmark numbers, so wait for the full paper before betting on this.

Key takeaways:

- Heterogeneous foundation model collaboration, not another agent loop. The LLM moves from "general solver" to "coordinator."

- Three integration modes give teams building scientific tooling a clean migration path from drop-in to full orchestration.

- For cross-domain agent systems, error accumulation matters more than benchmark scores. Hold judgment until the full paper drops.

Source: Heterogeneous Scientific Foundation Model Collaboration

02 FID as a Training Loss, Once You Decouple the Batch

Split the sample count for FD estimation (50k) from the backprop batch (1024). That single change lets Fréchet Distance, stuck as an evaluation metric for years, actually optimize as a loss in representation space. Add FD-loss in post-training, and a one-step generator reaches 0.72 FID on ImageNet 256×256. The same loss converts multi-step generators to one-step directly: no distillation, no adversarial training, no per-sample target.
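For reference, the Fréchet distance between two Gaussians fitted to feature sets has a closed form, and its estimate from finite samples is biased upward when the sample count is small relative to the feature dimension. The sketch below is a minimal NumPy illustration of that estimator and of the sample-size effect. It is not the authors' implementation: the function names, the 16-dimensional toy features, and the sample counts are assumptions chosen for illustration, and it does not show the gradient path.

```python
import numpy as np


def feature_stats(features: np.ndarray):
    """Mean and covariance of an (n_samples, dim) feature matrix."""
    return features.mean(axis=0), np.cov(features, rowvar=False)


def frechet_distance(mu1, sigma1, mu2, sigma2) -> float:
    """Closed-form Frechet distance between N(mu1, sigma1) and N(mu2, sigma2)."""
    diff = mu1 - mu2
    # tr((S1 S2)^(1/2)) equals the sum of square roots of the eigenvalues of S1 @ S2.
    eigvals = np.linalg.eigvals(sigma1 @ sigma2)
    # Clip tiny negative values caused by floating-point error.
    trace_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    return float(diff @ diff + np.trace(sigma1) + np.trace(sigma2) - 2.0 * trace_sqrt)


def fd_between_same_distribution(n_samples: int, dim: int = 16, seed: int = 0) -> float:
    """FD estimate between two independent draws from the same standard Gaussian.

    The true distance is zero, so whatever comes back is estimation error.
    """
    rng = np.random.default_rng(seed)
    a = rng.standard_normal((n_samples, dim))
    b = rng.standard_normal((n_samples, dim))
    return frechet_distance(*feature_stats(a), *feature_stats(b))


if __name__ == "__main__":
    for n in (64, 1024, 4096):
        print(n, fd_between_same_distribution(n))
```

Comparing `fd_between_same_distribution(64)` with `fd_between_same_distribution(4096)` shows the estimate shrinking toward zero as the sample count grows. That is one plausible reading of why statistics from a gradient-sized batch alone would make a poor FD estimate, and why estimating over a much larger sample set helps.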

The authors also notice Inception FID drifts from visual quality under modern representations, and propose FDr^k, a metric that spans representation spaces.

The method fits in one sentence but unlocks a whole category of techniques. Findings like this often matter more than new models.

Key takeaways:

- The blocker for using evaluation metrics as training losses was batch size. Decoupling estimation samples from gradient samples gets around it.

- Adding FD-loss in post-training is a low-cost path to better generation quality. Worth a try for diffusion and AR teams.

- A single FID ranking doesn't track visual quality reliably. Cross-representation metrics are more honest.

Source: Representation Fréchet Loss for Visual Generation

03 Frontier Web Agents Code Through Vague Specs

Web development agent benchmarks default to structured, complete requirement docs. Real non-expert users send instructions full of ambiguity, redundancy, and contradiction. InteractWeb-Bench puts those variables back in. Four user agent types and persona-driven prompt perturbations simulate non-expert phrasing, paired with a Clarify/Implement/Verify/Submit action space that lets the agent ask back, verify, or correct intent.

In experiments, frontier multimodal agents fall into blind execution. They don't ask for clarification, take instructions literally, and ship outputs misaligned with what the user actually wanted. The contribution isn't another leaderboard. It moves "actively clarify intent" from optional to required, pushing coding agent failure modes upstream from code quality to intent recognition.
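To make the contrast with blind execution concrete, here is a minimal sketch of a policy over the four actions. The action names come from the benchmark description. Everything else is a hypothetical illustration: the `REQUIRED_FIELDS` list, the dictionary-shaped spec, and the rule that any missing field triggers a clarification are assumptions, not how the benchmark or any evaluated agent works.

```python
from enum import Enum
from typing import List


class Action(Enum):
    CLARIFY = "clarify"
    IMPLEMENT = "implement"
    VERIFY = "verify"
    SUBMIT = "submit"


# Hypothetical ambiguity check: fields a usable website spec should pin down.
REQUIRED_FIELDS = ("page_type", "color_scheme", "target_audience")


def missing_fields(spec: dict) -> List[str]:
    """Return the required fields the user's instruction never specified."""
    return [name for name in REQUIRED_FIELDS if not spec.get(name)]


def next_action(spec: dict, implemented: bool, verified: bool) -> Action:
    """Ask before building: unresolved ambiguity always takes priority."""
    if missing_fields(spec):
        return Action.CLARIFY
    if not implemented:
        return Action.IMPLEMENT
    if not verified:
        return Action.VERIFY
    return Action.SUBMIT


vague_spec = {"page_type": "landing"}
print(next_action(vague_spec, implemented=False, verified=False))
```

A blind-execution agent corresponds to skipping the first branch and going straight to `Action.IMPLEMENT` regardless of what the spec leaves open.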

Key takeaways:

- Blind execution under vague instructions is the real blocker for shipping consumer coding agents. It breaks before code quality even matters.

- Adding instruction perturbation and an interactive action space gets benchmarks closer to real usage than pure code generation.

- For teams building web agents for non-experts, make Clarify a first-class capability, not auxiliary.

Source: InteractWeb-Bench: Can Multimodal Agent Escape Blind Execution in Interactive Website Generation?

04 Building a Computer the Agent Can Actually Use

For long-horizon productivity agents, the bottleneck isn't trajectory generation. It's whether the world the agent sees on boot looks real — directories that look human-organized, documents and spreadsheets and slides whose contents reference each other. Synthetic Computers at Scale productizes this layer of environment synthesis. First, synthesize a user-specific computer with a full file system and content-coherent artifacts. Then have one agent generate productivity goals worth roughly a month of human work for that user, and another agent play the user across 2000+ turns and 8+ hours of simulation.
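One way to picture "content-coherent artifacts" is as files that reference each other, with a check that every reference resolves. The sketch below is purely illustrative and assumes a data model the source does not describe: the `Artifact` and `SyntheticComputer` classes, the `references` field, and the example paths are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Artifact:
    path: str
    kind: str  # e.g. "doc", "sheet", "slides"
    references: List[str] = field(default_factory=list)  # paths this file cites


@dataclass
class SyntheticComputer:
    user: str
    files: Dict[str, Artifact] = field(default_factory=dict)

    def add(self, artifact: Artifact) -> None:
        self.files[artifact.path] = artifact

    def dangling_references(self) -> List[Tuple[str, str]]:
        """List (source, target) pairs whose target file does not exist."""
        return [
            (artifact.path, ref)
            for artifact in self.files.values()
            for ref in artifact.references
            if ref not in self.files
        ]


computer = SyntheticComputer(user="analyst-01")
computer.add(Artifact("/work/q3/budget.xlsx", "sheet"))
computer.add(Artifact("/work/q3/review.pptx", "slides",
                      references=["/work/q3/budget.xlsx", "/work/q3/notes.docx"]))
print(computer.dangling_references())
```

Here the slide deck cites a notes file that was never generated, so the check reports one dangling reference. A coherence pass of this kind is one simple signal that a synthesized environment hangs together.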

Initial experiments ran 1000 synthetic computers. The resulting experience data drove clear gains on both in-domain and out-of-domain evaluations. The direction lines up with recent work like ClawGym on agent training infrastructure, but the division of labor is different. This stack handles "what the world looks like to the agent," not how the agent acts.

Key takeaways:

- Long-horizon agent training is shifting from trajectory generation to environment synthesis. The ceiling is set by user context fidelity.

- This complements action-side training frameworks rather than competing with them.

- Personas can scale to billions in theory. The 8-hour-per-simulation compute bill is the real gate.

Source: Synthetic Computers at Scale for Long-Horizon Productivity Simulation

Also Worth Noting

Today's Observation

Three papers from unrelated angles converged on the same point today, each locating the problem in a different place. Eywa's version: forcing mature domain foundation models (protein structure, physics simulation) through natural language strips structural information the LLM can't reconstruct, so the agent loop has to cede control and treat non-language structured prediction as a first-class citizen. The visual generation taxonomy paper frames it differently: scaling appearance mapping further can't sustain spatial consistency, persistent state, or physical interaction, and the next move in visual generation is explicit world state, not more photorealistic pixels. Intern-Atlas finds it in yet another place: the surface graph of paper citations is not enough to feed AI scientist systems, so the next layer has to model method-to-method evolution explicitly as a graph rather than running more retrieval over citations.

Three completely unrelated scopes. Same conclusion: piling on at the surface representation layer (natural language, appearance, citations) won't carry the next step. The work is to surface the structured representation underneath.

Action for teams: Audit your pipeline and find the spot where "more data or a bigger model only buys a few more points." That's usually where the next layer of structure is implicit. The next move is plugging in a domain-specialized model, changing the intermediate representation, or making implicit structure first-class — not scaling more on the same surface.