Today's Overview

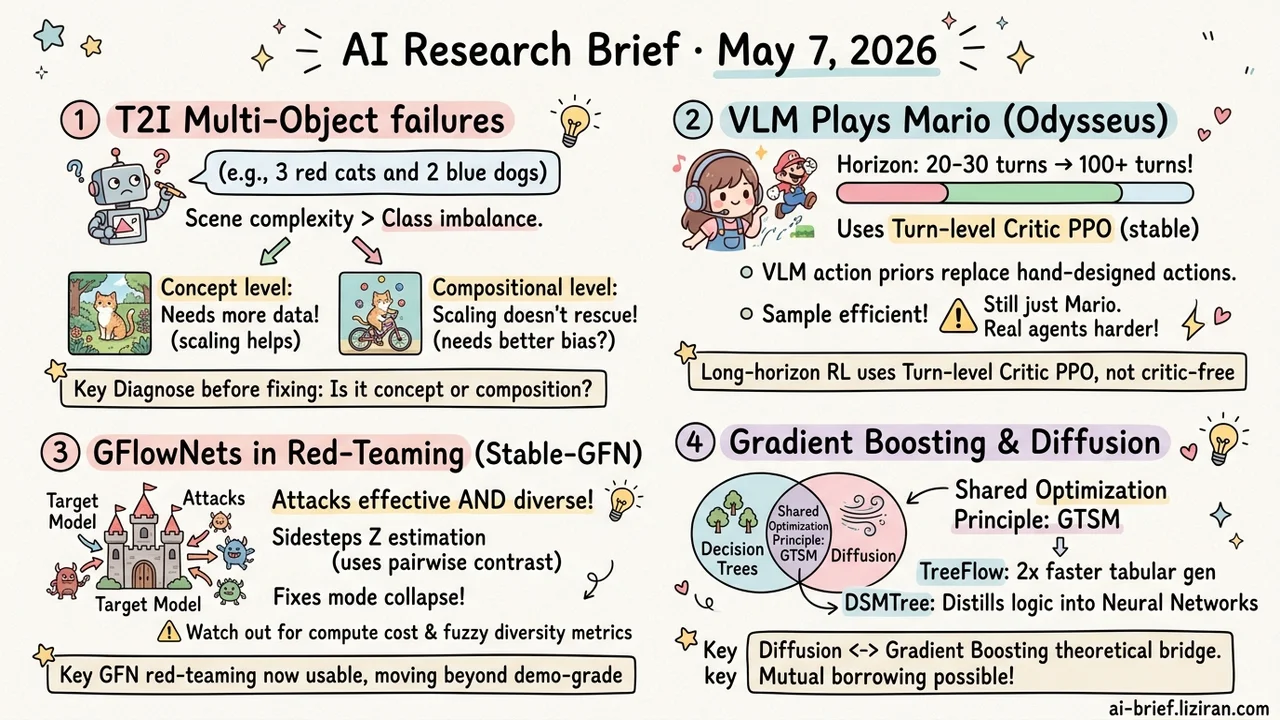

- Multi-Object Generation Failures Need Attribution Before Solutions: Text-to-image (T2I) multi-object failures come mainly from scene complexity, not class imbalance. Concept-level issues respond to more data; compositional issues don't scale away.

- VLM Plays Mario to 100+ Turns With a New RL Recipe: Odysseus uses a PPO variant with a turn-level critic to push the RL training horizon from 20-30 turns to 100+. Pre-trained VLM action priors replace hand-designed action engineering.

- GFlowNets Move From Demo to Usable in Red-Teaming: Stable-GFN uses contrastive trajectory balance to bypass partition function estimation, fixing mode collapse head-on.

- Gradient Boosting Is Diffusion's Asymptotic Optimum: Decision trees and diffusion share the GTSM optimization principle. TreeFlow's 2x speedup on tabular generation is early practical evidence.

Featured

01 Multi-Object Failures Aren't About Class Imbalance

T2I models trip on multi-object scenes. Standard fixes change architecture or add CoT-style planning. This work steps back and asks how much the data alone explains.

The authors built a controlled synthetic data framework called mosaic, splitting the problem into two mechanisms. Concept generalization covers cases where every concept appears in training but distributions are uneven. Compositional generalization holds out specific combinations deliberately. Then they vary data scale.

Two findings stand out. Scene complexity matters far more than class imbalance, and counting tasks stay stubborn at low data scale. Compositional generalization collapses as more concept combinations are held out, and scaling doesn't reliably rescue it. So multi-object failures need attribution first. Concept-level problems can be fixed by adding data; compositional problems may require new inductive biases. One caveat: all conclusions come from controlled synthetic data. Before transferring them to LAION-scale real distributions, calibrate against your own failure cases.
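The two mechanisms can be made concrete with a toy split. This is a minimal sketch, not the authors' framework: `compositional_split` and `concept_split_weights` are hypothetical helper names, and the object and attribute vocabularies are made up for illustration.

```python
import itertools
import random


def compositional_split(objects, attributes, n_holdout, seed=0, max_tries=1000):
    """Hold out specific (attribute, object) pairings.

    Every attribute and every object still appears somewhere in training;
    only particular combinations are withheld.
    """
    rng = random.Random(seed)
    combos = list(itertools.product(attributes, objects))
    for _ in range(max_tries):
        heldout = set(rng.sample(combos, n_holdout))
        train = [c for c in combos if c not in heldout]
        # Reject draws that would remove a whole concept from training.
        if ({a for a, _ in train} == set(attributes)
                and {o for _, o in train} == set(objects)):
            return train, sorted(heldout)
    raise ValueError("cannot hold out that many combinations")


def concept_split_weights(combos, skew=1.0):
    """Concept-level setting: nothing is withheld, but frequencies are uneven.

    Returns Zipf-like sampling probabilities (an illustrative assumption).
    """
    raw = [1.0 / (rank + 1) ** skew for rank in range(len(combos))]
    total = sum(raw)
    return [w / total for w in raw]


objects = ["cat", "dog", "car"]
attributes = ["red", "blue", "green"]
train, heldout = compositional_split(objects, attributes, n_holdout=3)
print("held-out combinations:", heldout)
print("sampling weights:", concept_split_weights(train))
```

Evaluating on `heldout` probes compositional generalization; evaluating on the rare tail of `train` probes concept generalization. Keeping the two apart is what makes attribution possible.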

Key takeaways:

- Diagnose before you fix. Concept-level failures respond to data scaling; compositional ones may not.

- Scene complexity is the bigger driver, not class imbalance. Counting is especially hard at low data scale.

- Conclusions come from synthetic data. Cross-check against your real failure cases before changing architecture or adding planning modules.

Source: When Do Diffusion Models Learn to Generate Multiple Objects?

02 100-Turn VLM RL: Mario Isn't the Point

Odysseus picks Super Mario as a testbed and pushes the RL horizon for VLM decision-making to 100+ turns. Average completion progress runs more than 3x ahead of frontier models. The earlier bottleneck was stubborn: RL training horizons stalled at 20-30 turns, and SFT on human trajectories was expensive and generalized poorly.

The interesting part isn't Mario itself. It's the engineering takeaways. A PPO variant with a lightweight turn-level critic stays much more stable on long horizons than critic-free methods like GRPO or REINFORCE++. Pre-trained VLMs already carry strong action priors, which removes the hand-designed action engineering that classical deep RL needed, and sample efficiency improves visibly. Teams building long-horizon agents can use both directly.
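To see what "turn-level" buys, here is a minimal sketch of advantage estimation with one critic value per turn. It assumes standard Generalized Advantage Estimation (GAE); whether Odysseus uses exactly this estimator is an assumption, and the function names are hypothetical.

```python
def turn_level_gae(rewards, values, gamma=0.99, lam=0.95):
    """Compute one advantage per turn with GAE.

    rewards[t] is the reward collected in turn t.
    values[t] is the critic's estimate at the start of turn t;
    values must have len(rewards) + 1 entries, the last being the
    bootstrap value (0.0 if the episode terminated).
    """
    advantages = [0.0] * len(rewards)
    gae = 0.0
    for t in reversed(range(len(rewards))):
        # One-step TD error at the turn level.
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        gae = delta + gamma * lam * gae
        advantages[t] = gae
    return advantages


def broadcast_to_tokens(advantages, tokens_per_turn):
    """Give every generated token in a turn that turn's advantage."""
    return [[adv] * n for adv, n in zip(advantages, tokens_per_turn)]


# Toy episode: reward arrives only on the last of three turns.
adv = turn_level_gae([0.0, 0.0, 1.0], [0.0, 0.0, 0.0, 0.0], gamma=0.5, lam=1.0)
print(adv)
print(broadcast_to_tokens(adv, tokens_per_turn=[2, 1, 3]))
```

A critic-free method would instead assign one trajectory-level signal to all turns. Per-turn values give each turn its own credit estimate, which is one plausible reason the critic-based variant holds up better as horizons grow.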

Now the cold water. Mario's visual space and state transitions are relatively controlled, and cross-game generalization stays inside the game domain. Open-ended productivity agent environments are still several layers of abstraction away.

Key takeaways:

- For long-horizon RL on VLMs, PPO with a turn-level critic beats critic-free methods on both stability and sample efficiency.

- Pre-trained VLM action priors replace classical RL's hand-designed action engineering. Teams coming from classical RL may miss this lever.

- Don't extrapolate Mario clear rates to real agent settings. How controlled the environment is dictates how far the method ports.

Source: Odysseus: Scaling VLMs to 100+ Turn Decision-Making in Games via Reinforcement Learning

03 GFlowNets in Red-Teaming: Mode Collapse Faced Head-On

LLM red-teaming wants attacks that are both effective and diverse. GFlowNet (GFN), a training paradigm that learns to sample outputs with probability proportional to their reward, fits the goal in theory. The problem has been engineering: rewards jitter, mode collapse hits, and outputs cluster on one or two templates.

Stable-GFN sidesteps the partition function Z, which is GFN's hardest quantity to estimate. Training uses pairwise contrast between trajectories, with a masking mechanism for noisy rewards and a fluency stabilizer that keeps the model out of gibberish local optima.
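Why pairwise contrast removes Z can be shown in a few lines. This is a minimal sketch of the generic idea, not Stable-GFN's exact loss: it starts from the standard trajectory balance residual and assumes autoregressive generation, where each sequence has a single construction path so the backward-policy term vanishes. The function names are hypothetical.

```python
import math


def tb_residual(log_pf, log_reward, log_z):
    """Standard trajectory balance residual for one trajectory.

    log_pf: sum of forward-policy log-probs of the generated sequence.
    log_reward: log R(x) of the finished sequence.
    log_z: learned estimate of the log partition function.
    """
    return log_z + log_pf - log_reward


def pairwise_tb_loss(log_pf_a, log_reward_a, log_pf_b, log_reward_b):
    """Squared difference of two residuals; log_z cancels out."""
    diff = (log_pf_a - log_reward_a) - (log_pf_b - log_reward_b)
    return diff ** 2


# Two sequences with rewards 1 and 3, sampled in proportion to reward.
a = (math.log(0.25), math.log(1.0))
b = (math.log(0.75), math.log(3.0))
print(pairwise_tb_loss(*a, *b))  # essentially zero: the policy already matches the reward

# The pairwise loss equals the squared residual gap for any value of log_z.
for log_z in (-5.0, 0.0, 7.3):
    gap = tb_residual(*a, log_z) - tb_residual(*b, log_z)
    assert math.isclose(gap ** 2, pairwise_tb_loss(*a, *b), abs_tol=1e-12)
```

The trade-off is visible in the signature: every loss term needs two trajectories instead of one.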

Reported numbers show clear gains over baselines on both attack success and diversity. Two things to watch. Pairwise contrast means each step computes an extra trajectory, so training cost isn't free. Red-teaming "diversity" is itself a suspect metric: high edit distance or cluster count doesn't mean attack patterns actually differ. For safety evaluation and adversarial sample teams, this is GFN moving from demo-grade to usable. Validate independently on your target model before plugging into production.

Key takeaways:

- GFN red-teaming instability is addressed head-on, moving from demo to usable.

- Contrastive training adds compute overhead. Cost the change before scaling up.

- Diversity metrics are suspect. Define your own evaluation criteria before deploying.

Source: Stable-GFlowNet: Toward Diverse and Robust LLM Red-Teaming via Contrastive Trajectory Balance

04 Gradient Boosting Is Diffusion's Asymptotic Optimum

The idealized version of gradient boosting turns out to be the asymptotic optimum of diffusion in a certain limit. That's the counterintuitive core finding of this theory work. The authors prove that, in that limit, decision trees and diffusion share one optimization principle: Global Trajectory Score Matching (GTSM).

Two practical applications follow. TreeFlow runs 2x faster than diffusion baselines on tabular data generation, with better quality. DSMTree distills hierarchical tree decision logic into neural networks, getting within 2% error of the teacher models on most benchmarks.

The interesting part isn't that the two model families turn out to be the same thing. It's the bidirectional borrowing. Gradient boosting can adopt diffusion's training intuitions; diffusion can adopt tree structure ideas. How far this travels in production depends on follow-up work, but the theoretical bridge exists.

Key takeaways:

- Decision trees and diffusion share the GTSM optimization principle. Idealized gradient boosting is diffusion's asymptotic optimum in a suitable limit.

- TreeFlow's 2x speedup and DSMTree's within-2% distillation are early practical evidence.

- Tabular generation and gradient boosting teams should track follow-up work. Bidirectional borrowing matters more than the theoretical bridge itself.

Source: Trees to Flows and Back: Unifying Decision Trees and Diffusion Models