Today's Overview

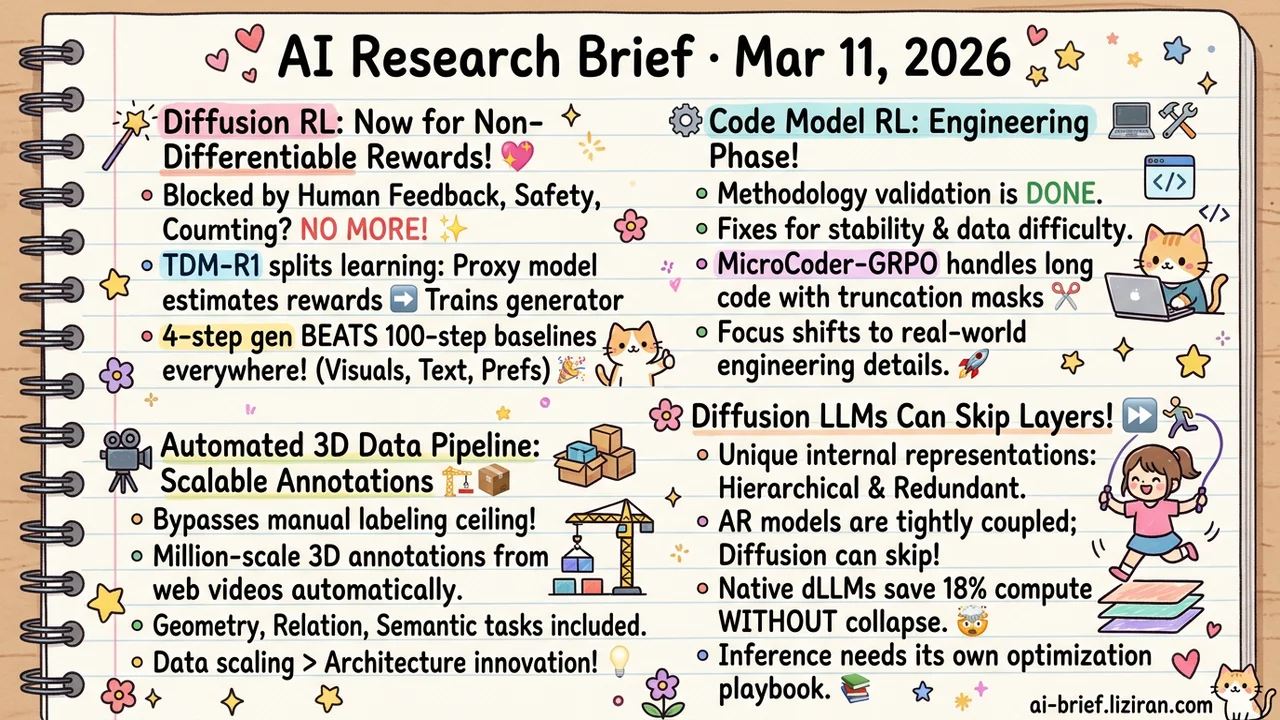

- Non-Differentiable Rewards Now Work for Few-Step Diffusion RL Training. 4-step generation beats 100-step baselines across the board. Human preference, safety, object counting — the signals that matter most in production are no longer locked out.

- Code Model RL Post-Training Enters Its Engineering Phase. Two teams independently tackled gradient stability and data difficulty distribution on the same day. The methodology validation stage is over.

- A Fully Automated Pipeline Extracts Million-Scale 3D Annotations from Web Videos. The pipeline bypasses the manual labeling ceiling, and the results suggest that data scaling does more for 3D understanding than architecture innovation.

- Diffusion LLMs Can Skip Layers to Save 18.75% Compute Without Collapse. The first systematic layer-by-layer comparison reveals fundamentally different representation structures between dLLMs and autoregressive models. Acceleration tricks designed for AR don't transfer directly.

Featured

01 Training Non-Differentiable Rewards Meet Few-Step Diffusion

Few-step diffusion models have an awkward RL limitation: reward signals must be differentiable. Aesthetic scores qualify. But the most intuitive forms of human feedback (binary "good/bad" judgments, counting accuracy, safety flags) are all blocked.

TDM-R1 splits learning into two stages. A proxy model first learns to approximate non-differentiable rewards, then that proxy trains the generator. The key trick: extracting per-step reward signals along TDM's deterministic generation trajectory, so every denoising step gets feedback instead of only the final output.
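
The two-stage idea can be illustrated with a toy sketch. Everything here is an assumption made for illustration and not TDM-R1's actual method: the "generator" is a single scalar `theta`, the non-differentiable reward is a hypothetical 0/1 band check, and the proxy is a simple kernel smoother rather than a learned network. Per-step rewards along the trajectory are not modeled.

```python
import math

def hard_reward(x: float) -> float:
    """Hypothetical non-differentiable reward: 1 inside the target band, else 0."""
    return 1.0 if 2.0 < x < 4.0 else 0.0

def fit_proxy(xs, bandwidth=0.5):
    """Stage 1: build a smooth surrogate of the hard reward from scored samples."""
    rs = [hard_reward(x) for x in xs]

    def proxy_and_grad(x):
        # Gaussian kernel weights between the query x and every scored sample.
        ws = [math.exp(-((x - xi) ** 2) / (2 * bandwidth ** 2)) for xi in xs]
        # Derivative of each weight with respect to x.
        dws = [w * (xi - x) / bandwidth ** 2 for w, xi in zip(ws, xs)]
        num = sum(w * r for w, r in zip(ws, rs))
        den = sum(ws)
        dnum = sum(dw * r for dw, r in zip(dws, rs))
        dden = sum(dws)
        value = num / den
        grad = (dnum * den - num * dden) / den ** 2  # quotient rule
        return value, grad

    return proxy_and_grad

def train_generator(theta, proxy_and_grad, lr=0.5, steps=200):
    """Stage 2: gradient ascent on the proxy instead of the hard reward."""
    for _ in range(steps):
        _, grad = proxy_and_grad(theta)
        theta += lr * grad
    return theta

samples = [i * 0.1 for i in range(61)]  # outputs scored offline by the hard reward
proxy = fit_proxy(samples)
theta0 = 1.0                            # starts outside the rewarded band
theta = train_generator(theta0, proxy)
print(hard_reward(theta0), hard_reward(theta))
```

The hard reward has zero gradient almost everywhere, so the generator could not climb it directly. The smooth proxy supplies a usable slope even where the true reward is flat, which is the property the two-stage design relies on.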

Results are solid. 4-step generation doesn't just match the 100-step baseline; it beats it on text rendering, visual quality, and preference alignment, including out-of-domain generalization. Diffusion model RLHF is no longer locked into "optimize aesthetic scores only." Human preference, safety checks, object counting can all plug in now.

Key takeaways:

- Non-differentiable rewards (human preference, counting, safety) can now drive RL training for few-step diffusion models.
- 4-step generation beats 100-step baselines across metrics, cutting both training and inference cost.
- Diffusion RLHF expands from aesthetic scores to nearly arbitrary reward signals.

Source: TDM-R1: Reinforcing Few-Step Diffusion Models with Non-Differentiable Reward

02 Code Intelligence Code Model RL: From "Can It Work" to "Making It Work"

Two teams hit different bottlenecks in code model RL post-training on the same day. MicroCoder-GRPO found that GRPO gradients destabilize as code outputs grow longer. Their fix: conditional truncation masks that selectively clip gradients on long outputs, paired with a diversity-driven temperature strategy to prevent mode collapse.
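
A minimal sketch of how a truncation mask could sit on top of group-relative advantages. The masking condition used here (drop a response only if it hit the length cap and has a negative advantage) is an assumption for illustration; the summary above does not spell out MicroCoder-GRPO's exact rule, and the names `max_len`, `grpo_advantages`, and `truncation_mask` are hypothetical.

```python
from statistics import mean, pstdev

def grpo_advantages(rewards, eps=1e-6):
    """Group-relative advantages: normalize each reward within its sampling group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

def truncation_mask(lengths, advantages, max_len):
    """Assumed rule: zero out responses that were cut off at max_len AND penalized.

    A truncated response may have failed only because it ran out of tokens,
    so its negative gradient is treated as noise and dropped.
    """
    return [
        0 if (length >= max_len and adv < 0) else 1
        for length, adv in zip(lengths, advantages)
    ]

# One prompt, four sampled completions (1.0 = tests passed, 0.0 = failed).
rewards = [1.0, 0.0, 0.0, 1.0]
lengths = [120, 512, 200, 512]
max_len = 512

advantages = grpo_advantages(rewards)
mask = truncation_mask(lengths, advantages, max_len)
masked_advantages = [a * m for a, m in zip(advantages, mask)]
print(mask)
```

In this toy group, the second completion is both truncated and penalized, so it contributes nothing to the update. The fourth is truncated but rewarded, so it is kept.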

The other team attacked from the data side. Existing code training sets have severely imbalanced difficulty distributions — too many easy problems, leaving the model with nothing to learn during RL. They use LLM-based difficulty calibration and filtering to build training sets with better gradient signal.
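
One reason difficulty matters: with group-normalized advantages, a problem the model always solves (or never solves) gives every sample the same reward, so the advantages are all zero and the problem contributes no gradient. The sketch below filters on empirical pass rate as a stand-in for difficulty. This is an assumption for illustration; the paper uses LLM-based calibration, and the thresholds and problem names here are hypothetical.

```python
def pass_rate(results):
    """Fraction of sampled attempts that passed the tests."""
    return sum(results) / len(results)

def filter_by_difficulty(attempts, low=0.1, high=0.9):
    """Keep problems whose pass rate lies in [low, high]; drop trivial and hopeless ones."""
    return [
        name for name, results in attempts.items()
        if low <= pass_rate(results) <= high
    ]

# Hypothetical pass/fail outcomes from 8 sampled attempts per problem.
attempts = {
    "easy_sum":      [1, 1, 1, 1, 1, 1, 1, 1],  # always solved: no learning signal
    "medium_dp":     [1, 0, 1, 0, 0, 1, 0, 0],
    "hard_geometry": [0, 0, 0, 0, 0, 0, 0, 0],  # never solved: no learning signal
    "medium_graph":  [0, 0, 1, 0, 0, 0, 0, 1],
}

kept = filter_by_difficulty(attempts)
print(kept)
```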

Read together, these papers mark a phase transition. Code model RL post-training has moved past methodology validation into domain-specific engineering optimization.

Key takeaways:

- Long output + GRPO gradient instability now has a targeted fix (conditional truncation masks).
- Difficulty distribution in code RL training data matters more than dataset size.
- Code model post-training is shifting from "can we do RL" to engineering-level detail work.

Source: Breaking Training Bottlenecks: Effective and Stable Reinforcement Learning for Coding Models | Scaling Data Difficulty

03 Multimodal Million-Scale 3D Annotations Grown from Web Videos

Holi-Spatial extracts million-scale 3D annotations directly from web video streams: 12K 3DGS scenes, 1.3M 2D masks, 320K 3D bounding boxes, 1.2M spatial QA pairs. The entire pipeline is fully automated. No human labeling required.

This sidesteps the obvious ceiling of existing 3D datasets that depend on manual annotation. The dataset covers geometry, relational, and semantic reasoning tasks. Fine-tuning VLMs on it produces clear spatial reasoning improvements, confirming that data scale genuinely matters for 3D understanding.

Key takeaways:

- A fully automated pipeline generates large-scale 3D annotations from raw video, bypassing manual labeling bottlenecks.
- Data infrastructure investment unlocks more 3D understanding capability than model architecture changes.
- 66 community upvotes reflect strong industry demand for scalable 3D data solutions.

Source: Holi-Spatial: Evolving Video Streams into Holistic 3D Spatial Intelligence

04 Architecture Same Layer Skipping, Very Different Results

Autoregressive and diffusion language models have fundamentally different internal representations. That's the conclusion from the first systematic layer-by-layer comparison across LLaDA, Qwen2.5, and Dream-7B.

Diffusion objectives produce more hierarchical abstractions with significant redundancy in early layers. AR models couple tightly across layers — skip any one and performance drops sharply. Dream-7B, initialized from AR weights then trained with diffusion, still behaves more like an AR model than a native diffusion one. Initialization effects outlast training objectives.

Native dLLMs can skip layers directly, cutting compute by 18.75% while maintaining 90%+ performance on reasoning and code generation. No architecture changes needed, no KV cache sharing required. AR-designed acceleration methods don't carry over. dLLM deployment needs its own optimization playbook.
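
A toy sketch of direct layer skipping and the compute arithmetic. The 32-layer depth, the choice of which early layers to skip, and the uniform per-layer cost are assumptions; they are one combination consistent with the 18.75% figure (6 of 32 layers), not a configuration confirmed by the paper. The "layers" are stand-in functions, not transformer blocks.

```python
def forward(x, layers, skip=frozenset()):
    """Run the stack in order, bypassing any layer whose index is in `skip`."""
    for i, layer in enumerate(layers):
        if i in skip:
            continue  # skipped layer: the hidden state passes through unchanged
        x = layer(x)
    return x

def compute_saved(num_layers, skip):
    """Fraction of layer compute avoided, assuming every layer costs the same."""
    return len(skip) / num_layers

def make_layer(delta):
    """Stand-in for a transformer block: adds a fixed amount to the hidden state."""
    return lambda x: x + delta

num_layers = 32                                   # assumed depth
layers = [make_layer(1.0) for _ in range(num_layers)]
skip = frozenset(range(2, 8))                     # hypothetical: six early layers

full = forward(0.0, layers)
skipped = forward(0.0, layers, skip)
saved = compute_saved(num_layers, skip)
print(f"{saved:.2%}")
```

No weights or cache structures change in this scheme; skipping only changes which layers run, which matches the "no architecture changes" description above.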

Key takeaways:

- Diffusion LLMs' layerwise redundancy makes them naturally suited for layer-skipping acceleration. AR acceleration tricks don't transfer.
- Initializing dLLMs from AR weights causes them to inherit AR representation patterns. Choose initialization strategy carefully.
- As dLLMs move toward production, inference optimization needs architecture-specific redesign.

Today's Observation

Three papers today each solved a specific engineering bottleneck: TDM-R1 brought non-differentiable rewards to diffusion RL, MicroCoder-GRPO fixed GRPO gradient instability on long code outputs, and Scaling Data Difficulty tackled difficulty calibration for code RL training data. Different domains, different solutions, same signal. RL post-training for generative models has moved from "can the methodology work" to "every domain has its own engineering pitfalls."

This phase transition shifts where competitive moats live. Six months ago, "knowing how to do RL" was the barrier. Choosing GRPO vs. PPO, designing reward functions — these methodological decisions were the core advantage. Mainstream methods now have mature open-source implementations. What actually blocks people are domain-specific engineering details: how do you make non-differentiable rewards usable for diffusion models? How do you handle gradients when code outputs get long? How do you calibrate difficulty distribution in training data? This know-how lives in experiment logs and hyperparameter records, not paper abstracts.

If you're doing or planning RL post-training for generative models, spend time reproducing the engineering details of these three papers. Focus on TDM-R1's proxy reward training pipeline and MicroCoder's conditional truncation implementation. The methodology alpha has evaporated. The engineering implementation alpha is opening up.