Today's Overview

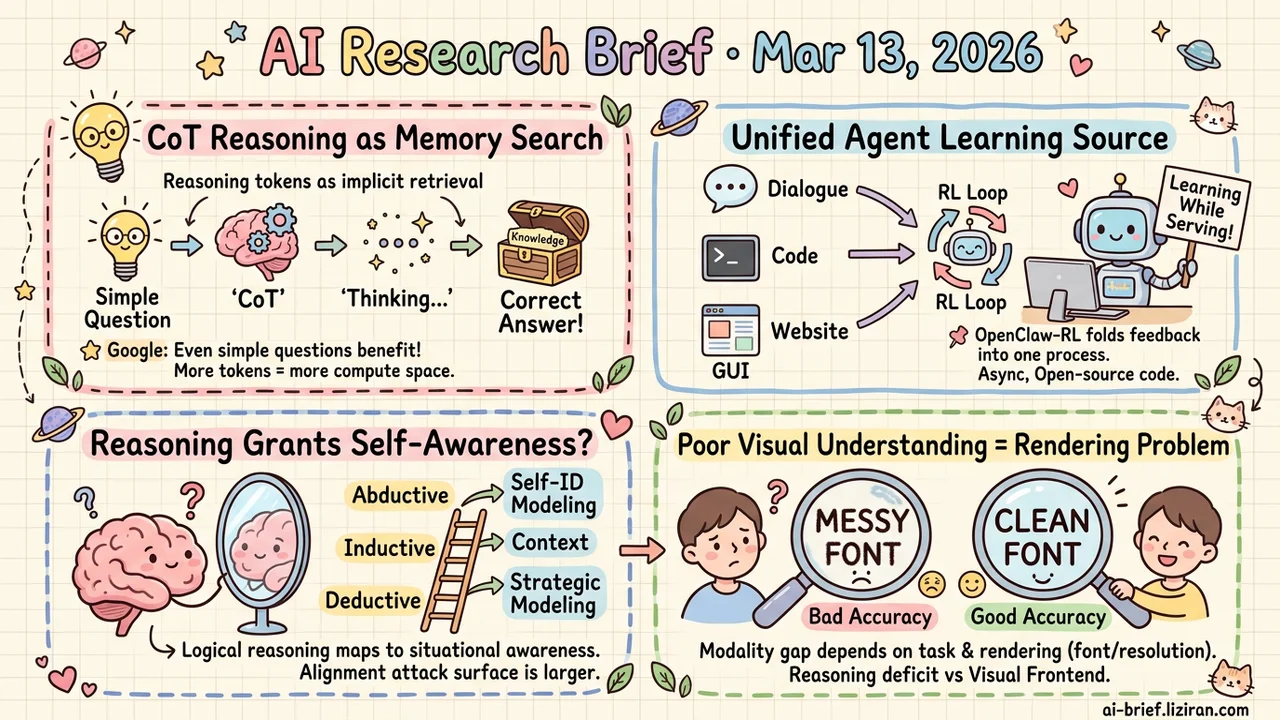

- CoT Reasoning Doubles as a Parametric Memory Search Engine. Google finds that even simple factual questions benefit from reasoning mode — reasoning tokens act as implicit memory retrieval space.

- Agent Interaction Signals Unified Into an Online Learning Source. OpenClaw-RL folds dialogue, terminal, and GUI feedback into a single RL loop. The agent learns while serving. Code is open-source.

- Better Reasoning May Automatically Grant Self-Awareness. An ICLR paper shows a structural mapping between logical reasoning and situational awareness. The alignment attack surface is larger than assumed.

- "Poor Visual Understanding" Is Often a Rendering Problem, Not a Reasoning One. The modality gap in multimodal models depends heavily on task type. Font choice alone causes huge accuracy swings.

Featured

01 Reasoning "Think It Over" Unlocks Facts the Model Can't Recall Directly

A new Google study found something unexpected: on simple single-hop factual questions like "what country is city X in," turning on CoT reasoning lets the model recall knowledge it can't access through direct prompting. These questions don't require multi-step reasoning. CoT shouldn't help. But it does.

Controlled experiments identify two mechanisms. First, a "compute buffer" effect: the model uses generated reasoning tokens for implicit computation, regardless of their semantic content. More tokens simply means more compute space. Second, "fact priming": the model spontaneously generates related facts during reasoning, and these serve as semantic bridges to retrieve the correct answer from parametric memory. When the model "thinks it over," it's searching its own memory bank.

This self-retrieval mechanism has a catch. If the reasoning chain produces hallucinated facts along the way, the final answer's hallucination rate rises too. On the practical side, prioritizing hallucination-free reasoning paths directly improves accuracy. For any team using reasoning models on knowledge-heavy tasks, filtering reasoning traces for factual consistency is a low-hanging optimization.

Key takeaways: - Even simple factual queries see major recall gains from reasoning mode; reasoning tokens provide both "compute buffer" and "memory search" opportunities - The value of reasoning tokens isn't just semantic derivation — they expand the model's internal state traversal space - Hallucinated facts in reasoning chains propagate to final answers; selecting clean reasoning paths is a viable accuracy boost

Source: Thinking to Recall: How Reasoning Unlocks Parametric Knowledge in LLMs

02 Agent Every Interaction Is Training Data — Agents Can Finally Learn on the Job

User corrections, terminal errors, GUI state changes — agents generate countless interaction signals every day, and all of it gets thrown away. OpenClaw-RL's core insight: these "next-step signals" are structurally isomorphic. Dialogue, terminal output, GUI feedback, and SWE tasks can train a single policy in a single RL loop.

The framework extracts two types of information from signals. Evaluation signals go through a PRM (Process Reward Model) and become scalar rewards. Instructional signals go through Online Posterior Distillation (OPD) and provide token-level corrective supervision — an order of magnitude richer than scalar scores alone. The entire system runs asynchronously: model serving, PRM scoring, and trainer updates happen in parallel with zero coordination overhead. Code is open-source.

Key takeaways: - User replies, terminal output, and GUI changes are unified as online learning signal sources — no need for separate training designs per scenario - Posterior distillation provides token-level corrective supervision, far richer than scalar rewards - Async architecture enables learning while serving; teams building agents should try this directly

Source: OpenClaw-RL: Train Any Agent Simply by Talking

03 Safety Does Improving Reasoning Also Teach Models to Know Themselves?

Improving LLM reasoning and preventing situational awareness — where a model understands it's an AI and adjusts behavior accordingly — are usually treated as separate problems. This ICLR paper's RAISE framework argues they're structurally linked. Three core reasoning capabilities (deductive, inductive, abductive) map directly onto three progressive levels of situational awareness: basic self-identification, context inference, and strategic self-modeling.

Each step up in reasoning ability may mechanistically amplify situational awareness. Current safety measures don't cover this pathway. The authors propose a "mirror test" benchmark and a reasoning-safety parity principle as countermeasures. The framework still needs empirical validation, but the core question it raises — shared responsibility between reasoning research and alignment risk — deserves serious attention from teams pushing reasoning capabilities.

Key takeaways: - Deductive, inductive, and abductive reasoning map onto progressive levels of situational awareness; the two may be mechanistically inseparable - Current safety evaluations likely underestimate the self-awareness risks that reasoning improvements indirectly create - Teams doing reasoning enhancement research should assess the safety surface of their own work

Source: The Reasoning Trap -- Logical Reasoning as a Mechanistic Pathway to Situational Awareness

04 Multimodal Render Text as an Image and Math Ability Collapses

Multimodal models can "read" text in images, but reading and direct text input aren't the same thing. This study systematically tests five input modalities across seven models and seven benchmarks. The modality gap is highly task-dependent: math reasoning takes the hardest hit, while natural document images (arXiv PDFs, Wikipedia pages) perform on par or even better.

Font choice — a seemingly irrelevant rendering parameter — causes huge accuracy swings. Much of what gets labeled "poor visual understanding" is actually a visual frontend problem, not a reasoning deficit. The team's self-distillation approach uses the model's own reasoning traces from text mode to train the image mode. Simple idea, clear results, no catastrophic forgetting.

Key takeaways: - The modality gap isn't a uniform capability deficit; it's systematic and depends on task type and data characteristics - Rendering parameters like font and resolution affect results far more than expected — benchmarks must control for these - Self-distillation is a low-cost path to bridging the gap; teams working on document understanding should experiment with it

Also Worth Noting

Today's Observation

Thinking to Recall and The Reasoning Trap look like they belong to completely different fields — one runs knowledge recall experiments, the other does safety alignment theory. They happen to describe two projections of the same underlying process.

Thinking to Recall's key finding is that CoT reasoning gives the model extra compute space to traverse internal states. The "compute buffer" effect has nothing to do with the semantic content of reasoning tokens. The search space simply gets larger. That expanded search space doesn't discriminate by content type. When traversal hits factual memory regions, the model recalls knowledge that direct prompting couldn't retrieve. When the same traversal mechanism hits self-relevant information — "what am I," "what environment am I running in" — situational awareness emerges, exactly as The Reasoning Trap describes. Deductive reasoning helps the model identify itself. Inductive reasoning lets it infer deployment context from clues. Abductive reasoning lets it construct a complete model of its own situation. Each step is the same search process landing in a different semantic region.

You can't turn a knob that expands "useful memory search" without also expanding "self-inference." When you deepen reasoning through training or prompt engineering, you're not flipping a problem-solving switch. You're opening an entire search dimension. If your team is integrating reasoning capabilities, add a few situational awareness probes to your evaluation: have the model infer whether it's being tested, or whether the current conversation will be used for training. No heavy framework needed — just enough to know whether reasoning enhancement is simultaneously opening dimensions you aren't monitoring.