Today's Overview

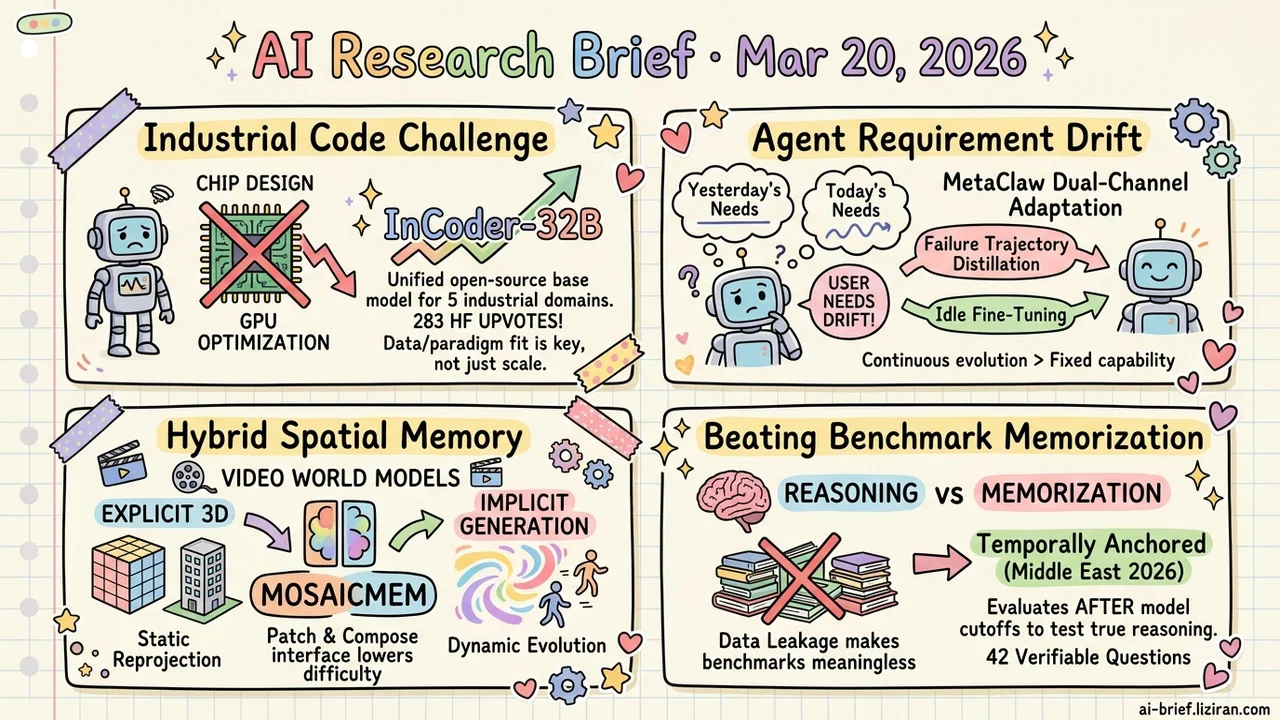

- General-purpose code models collapse on industrial tasks. The root cause is a mismatch in data and task paradigm, not scale. InCoder-32B is the first 32B open-source base model unifying chip design, GPU optimization, and three other industrial code domains. Its 283 upvotes on Hugging Face (HF) suggest real demand.

- The hardest bottleneck for agent products isn't the capability ceiling; it's requirement drift. MetaClaw runs a dual-channel continuous adaptation pipeline across 20+ real channels: failure trajectory distillation plus idle-window fine-tuning.

- Video world models now have a hybrid answer for spatial memory: explicit 3D for static reprojection, implicit generation for dynamic evolution. MosaicMem's patch-and-compose interface lowers generation difficulty and supports minute-long scene navigation.

- Training data leakage undermines reasoning benchmarks, because a model may be reciting rather than reasoning. A temporally anchored evaluation built on the 2026 Middle East conflict provides 42 verifiable questions that separate reasoning from memorization at the methodological level.

Featured

01 Code Intelligence LeetCode-Perfect Models Choke on Chip Design Code

General-purpose code LLMs approach 100% on HumanEval. Switch to Verilog chip design or CUDA kernel optimization, and performance falls off a cliff. The gap isn't model size. Pretraining data and task paradigms are fundamentally disconnected from industrial code semantics.

InCoder-32B addresses this head-on as the first 32B base model unifying five industrial code domains: chip design, GPU kernel optimization, embedded systems, compiler optimization, and 3D modeling. The training pipeline is methodical: general code pretraining, industrial code annealing, progressive context extension from 8K to 128K, then execution-verified post-training. It stays competitive across 14 general code benchmarks while setting open-source state-of-the-art on 9 industrial ones.

The paper's 283 Hugging Face upvotes tell the story. The industry has been waiting for a foundation model that speaks industrial code.

Key takeaways:

- General-purpose code models collapse on industrial tasks (chip/GPU/embedded) due to data and paradigm mismatch, not insufficient scale.
- InCoder-32B is the first 32B open-source model unifying five industrial code domains.
- Training combines progressive context extension with execution-verified post-training, staying competitive on general benchmarks while leading on industrial ones.

Source: InCoder-32B: Code Foundation Model for Industrial Scenarios

02 Agent Deployment Is Just the Starting Line

Most teams focus on capability ceilings after deploying an LLM agent. The real operational headache is quieter: user needs drift while the agent stays frozen at launch-day behavior.

MetaClaw runs a dual-channel continuous adaptation system across 20+ real channels on the OpenClaw platform. One channel distills reusable behavioral skills from failure trajectories, taking effect immediately with zero downtime. The other runs LoRA fine-tuning and process-reward RL during user inactivity windows. The two channels reinforce each other: better policies yield higher-quality trajectories for skill distillation, and richer skills feed back into policy optimization.
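
To make the two channels concrete, here is a minimal Python sketch of the control flow only. It is not MetaClaw's code: the class and field names (`DualChannelAgent`, `Trajectory`, `lesson`, `min_batch`) are hypothetical, and the weight update is a stub standing in for the LoRA fine-tuning and process-reward RL the paper describes.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Trajectory:
    task: str
    succeeded: bool
    lesson: str = ""  # hypothetical: a short note distilled from a failure


@dataclass
class DualChannelAgent:
    skills: List[str] = field(default_factory=list)          # fast-channel state
    pending: List[Trajectory] = field(default_factory=list)  # buffer for the slow channel
    policy_version: int = 0

    def record(self, traj: Trajectory) -> None:
        """Fast channel: a failure becomes a skill that applies immediately."""
        self.pending.append(traj)
        if not traj.succeeded and traj.lesson and traj.lesson not in self.skills:
            self.skills.append(traj.lesson)

    def on_idle(self, min_batch: int = 2) -> bool:
        """Slow channel: update weights only while users are inactive."""
        if len(self.pending) < min_batch:
            return False
        # Stub: a real system would fine-tune on self.pending here.
        self.policy_version += 1
        self.pending.clear()
        return True


agent = DualChannelAgent()
agent.record(Trajectory("book flight", False, "confirm the date format first"))
agent.record(Trajectory("book hotel", True))
agent.on_idle()  # consumes the buffered trajectories and bumps the policy version
```

The point of the split is latency: the skill list changes the moment a failure is recorded, while the heavier weight update waits for an idle window and never blocks a live request.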

The full pipeline lifts Kimi-K2.5 accuracy from 21.4% to 40.6%, a gain of 19.2 percentage points, or roughly 1.9x. The absolute number matters less than the design philosophy. Teams building agent products will eventually face the continuous evolution problem, and designing for it from day one is a strategic advantage.

Key takeaways:

- The most overlooked bottleneck in production agents is requirement drift, not capability ceiling.
- Failure trajectory distillation plus idle-window fine-tuning form a zero-downtime dual-channel adaptation mechanism.
- Agent teams should design for continuous evolution from the start.

Source: MetaClaw: Just Talk -- An Agent That Meta-Learns and Evolves in the Wild

03 Video Gen Can Hybrid Memory Stop World Models from Breaking on Camera Turns?

Video diffusion models face a spatial memory dilemma. Explicit 3D reconstruction handles reprojection well but can't deal with moving objects. Implicit memory handles dynamics but loses control over camera motion.

MosaicMem combines both. It lifts image patches into 3D space for positioning and retrieval, keeping static scene elements locked in place. Dynamic content gets inpainted by the diffusion model itself. The patch-and-compose interface is well-designed: spatially aligned parts get hard geometric consistency, while evolving parts rely on generative capacity to fill in. Tests show better camera pose adherence than pure implicit approaches and stronger dynamic modeling than pure explicit baselines. It also supports minute-long navigation and memory-based scene editing.
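
The geometric half of this idea can be illustrated with a standard pinhole camera model. The sketch below is not MosaicMem's implementation. It assumes known per-patch depth, a camera that only translates (no rotation), and hypothetical names throughout (`unproject`, `project`, `translate`, `retrieve_visible`). Patches that reproject inside the new frame are reused; everything else would be left for the generative model to fill in.

```python
def unproject(u, v, depth, fx, fy, cx, cy):
    """Lift pixel (u, v) with known depth into 3D camera coordinates."""
    return ((u - cx) * depth / fx, (v - cy) * depth / fy, depth)


def project(point, fx, fy, cx, cy):
    """Project a 3D camera-frame point to pixels; None if behind the camera."""
    x, y, z = point
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


def translate(point, t):
    """Re-express a point in a camera frame shifted by t (translation only)."""
    return tuple(p - ti for p, ti in zip(point, t))


def retrieve_visible(memory, t, fx, fy, cx, cy, width, height):
    """Return {patch_id: (u, v)} for stored patches landing inside the new frame."""
    hits = {}
    for patch_id, point in memory.items():
        uv = project(translate(point, t), fx, fy, cx, cy)
        if uv is not None and 0 <= uv[0] < width and 0 <= uv[1] < height:
            hits[patch_id] = uv
    return hits


# Illustrative intrinsics for a 100x100 image (assumed values).
K = dict(fx=100.0, fy=100.0, cx=50.0, cy=50.0)
memory = {
    "wall": unproject(60, 50, 2.0, **K),
    "lamp": unproject(5, 50, 2.0, **K),
}
# Move the camera 0.2 units to the right; "lamp" slides out of frame.
visible = retrieve_visible(memory, (0.2, 0.0, 0.0), width=100, height=100, **K)
```

In this toy setup only the "wall" patch survives the camera move, so only it would receive a hard geometric constraint in the next frame.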

Key takeaways:

- Explicit 3D handles static reprojection; implicit generation handles dynamic evolution. The hybrid approach captures both.
- Patch-and-compose lets the model only complete what changes, reducing generation difficulty.
- Teams working on video world models should watch the hybrid memory direction closely.

Source: MosaicMem: Hybrid Spatial Memory for Controllable Video World Models

04 Evaluation A Live War Tests Whether Models Reason or Recite

Evaluating LLM geopolitical reasoning has an almost unsolvable problem: when a model analyzes WWII, you can't tell if it's reasoning or reciting training data. This paper exploits a natural experiment. The 2026 Middle East conflict falls after current frontier models' training cutoffs, largely neutralizing data leakage.

Researchers built 11 key milestones and 42 verifiable questions along the conflict timeline, requiring models to reason only from information publicly available at each point. Models showed solid "strategic realism" in structured domains like economics and logistics, seeing through surface rhetoric to underlying incentive structures. Multi-party political ambiguity tripped them up.
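
The mechanics of temporal anchoring are simple enough to sketch. The Python below is an illustration, not the paper's harness: the `Document` and `Question` types, the field names, and every date are hypothetical placeholders. It shows the two checks the method implies: restrict the context to what was public at the milestone, and use a question only if its outcome postdates the model's training cutoff.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Document:
    published: date
    text: str


@dataclass(frozen=True)
class Question:
    milestone: date    # the point in time the question is anchored to
    prompt: str
    resolves_on: date  # when the answer became verifiable


def visible_context(docs, question):
    """Keep only documents published on or before the question's milestone."""
    return [d for d in docs if d.published <= question.milestone]


def is_leak_free(question, training_cutoff):
    """The outcome must postdate the cutoff, or the model may have memorized it."""
    return question.resolves_on > training_cutoff


# Placeholder dates and text, not taken from the paper.
docs = [
    Document(date(2026, 1, 10), "early report"),
    Document(date(2026, 2, 20), "later report"),
]
q = Question(date(2026, 2, 1), "What happens next?", date(2026, 3, 1))
context = visible_context(docs, q)              # only the early report
usable = is_leak_free(q, date(2025, 6, 30))     # outcome is after the cutoff
```

The same two filters apply to any time-sensitive benchmark: the milestone bounds what the model may read, and the cutoff check bounds what it could already know.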

The specific geopolitical conclusions matter less than the methodology. "Temporally anchored evaluation" provides a reusable framework for distinguishing genuine reasoning from memorized recall. Any LLM evaluation involving time-sensitive knowledge can borrow this approach.

Key takeaways:

- Building evaluations from real events that postdate training cutoffs largely removes data leakage at the root.
- Model geopolitical reasoning shows structural unevenness: strong on economics and logistics, weak on multi-party political bargaining.
- The temporally anchored evaluation methodology transfers to any scenario requiring separation of reasoning from memorization.

Source: When AI Navigates the Fog of War

Today's Observation

Three seemingly unrelated papers (MosaicMem on spatial memory for video generation, WorldCam on interactive 3D game worlds, and Kinema4D on 4D spacetime for embodied simulation) independently converge on the same technical judgment. Pure 2D generation can't produce spatial coherence. Pure 3D reconstruction is too brittle for dynamic change. The hybrid route is necessary.

Each implementation differs, but the core logic is shared: lift scene elements requiring geometric consistency into 3D space with hard constraints, leave the rest in 2D or implicit space to preserve generative flexibility.

Three teams in different application domains making the same architectural choice says more than any single ablation study. Hybrid spatial representation isn't a task-specific trick. It's a shared requirement for making AI-generated worlds physically self-consistent. If your team works on world models or 3D simulation, audit your spatial representation approach. These three papers, from video, gaming, and robotics, each make a concrete case for why hybrid is more sound, and each shows where its variant makes tradeoffs.