Today's Overview

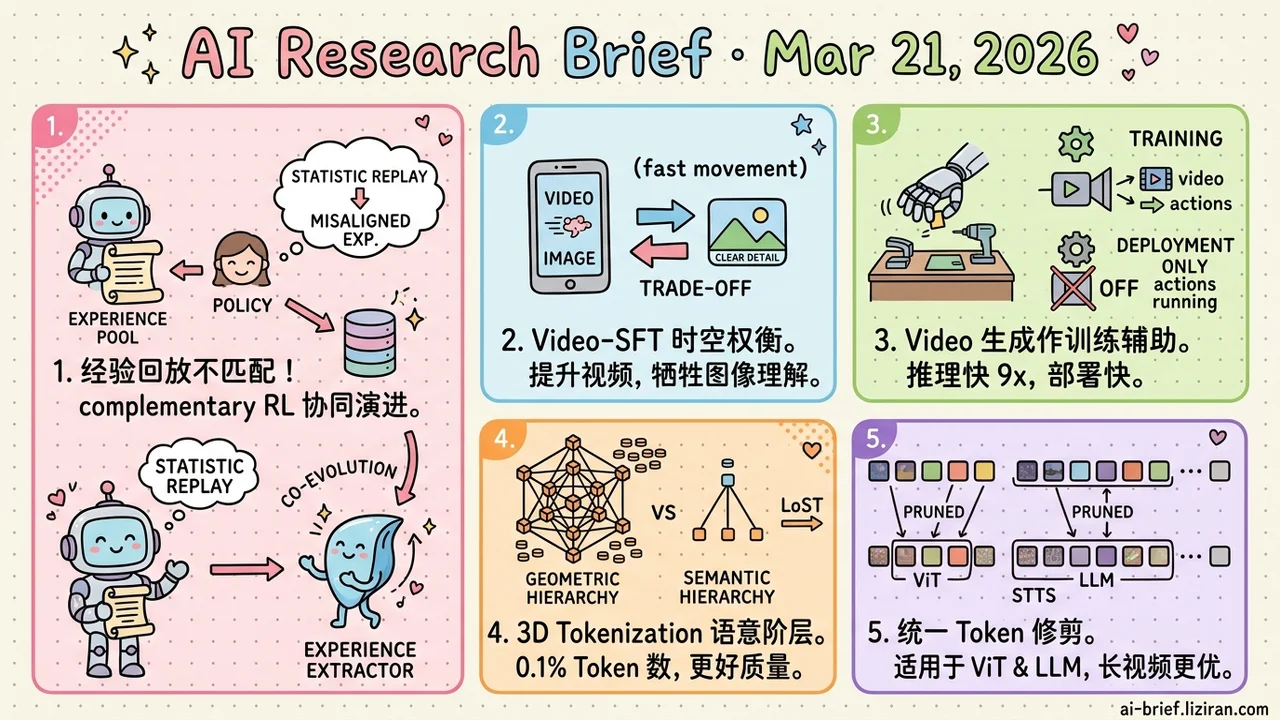

- Misaligned experience replay is a silent bottleneck in agent RL. Complementary RL lets the experience extractor adapt based on policy performance, enabling co-evolution instead of static accumulation.

- Video-SFT's temporal gains come at the cost of spatial understanding. Systematic experiments across architectures and scales confirm this is a structural trade-off, not a model-specific bug.

- Video generation as auxiliary supervision for robot policies, disabled at deployment. GigaWorld-Policy's decoupled design runs 9x faster than Motus with 7% higher success rate.

- 3D tokenization shifts from geometric to semantic hierarchy. LoST achieves better reconstruction quality with 0.1% of prior methods' token count.

- Token pruning across ViT and LLM finally unified. STTS is end-to-end trainable, with efficiency gains that scale as you sample more frames for long videos.

Featured

01 Agent Why Experience Replay Goes Stale

RL-trained agents have an under-discussed degradation problem. You accumulate historical experiences to help the policy learn, but as the policy improves, the experience pool stays frozen. Beginner-stage notes start misleading intermediate-level decisions. Existing approaches either store experiences statically or update too slowly to keep pace with policy iteration. The gap widens throughout training.

Complementary RL borrows from neuroscience's "complementary learning systems" theory. The experience extractor and policy network co-optimize within the same RL loop. The policy learns from sparse outcome rewards. The experience extractor adjusts based on whether its contributions actually helped the policy improve. Experience value is judged by real policy performance, not an independent standard.
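The feedback signal for the extractor can be pictured with a toy sketch. This is not the paper's algorithm. `ExperienceExtractor`, the learning rate, and the "reward with vs. without the experience" comparison are all assumptions made for illustration. The only point is that an experience's score follows what it does for the current policy.

```python
from dataclasses import dataclass, field


@dataclass
class ExperienceExtractor:
    """Hypothetical extractor: one usefulness score per stored experience."""
    scores: dict = field(default_factory=dict)
    lr: float = 0.5  # assumed step size for the running score

    def update(self, experience_id: str, reward_with: float, reward_baseline: float) -> float:
        # Credit = how much the policy's outcome changed when this experience was supplied.
        credit = reward_with - reward_baseline
        old = self.scores.get(experience_id, 0.0)
        self.scores[experience_id] = old + self.lr * (credit - old)
        return self.scores[experience_id]

    def select(self, k: int) -> list:
        # Hand the policy the k experiences that currently help it most.
        return sorted(self.scores, key=self.scores.get, reverse=True)[:k]


extractor = ExperienceExtractor()
# A beginner-stage note now hurts the stronger policy, so its score goes negative.
extractor.update("beginner_note", reward_with=0.4, reward_baseline=0.6)
# A recent note still helps, so its score goes positive.
extractor.update("recent_note", reward_with=0.9, reward_baseline=0.6)
print(extractor.select(1))  # → ['recent_note']
```

A static pool has no `update` step at all, which is exactly how stale notes keep getting served.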

Single-task performance improves by 10%, with solid scaling in multi-task settings. Code is available. The specific numbers matter less than the idea itself: co-evolution of experience and policy is an unavoidable problem in long-horizon agent training. Most agent frameworks haven't seriously addressed this layer.

Key takeaways:

- Misaligned decay between experience replay and policy is an underestimated efficiency bottleneck in agent RL.

- Complementary RL dynamically adapts the experience extractor based on actual policy performance, enabling co-evolution.

- Teams building long-horizon agents should pay attention to experience management as a neglected dimension.

Source: Complementary Reinforcement Learning

02 Multimodal Video Fine-Tuning's Hidden Bill

Video-SFT is standard practice for multimodal LLMs. The default assumption: adding video data only makes the model stronger. This systematic study breaks that assumption. Across architectures and parameter scales, Video-SFT consistently degrades static image understanding while improving video performance. More counterintuitively, sampling more frames boosts video scores but doesn't lift image ability. Temporal and spatial understanding trade off structurally.

The authors propose an instruction-aware mixed-frame strategy for partial mitigation. "Partial mitigation" says it all: this trade-off is far from solved.

Key takeaways:

- Video-SFT is not a free lunch. Temporal understanding gains come at the cost of spatial regression.

- This pattern holds across architectures and scales. Not a single-model bug.

- Teams doing video fine-tuning need to monitor image benchmarks alongside video metrics.

Source: Temporal Gains, Spatial Costs: Revisiting Video Fine-Tuning in Multimodal Large Language Models

03 Robotics Train with Video Generation, Deploy Without It

Jointly predicting visual dynamics and actions is expensive. Inference overhead is high, and action accuracy gets dragged down by video generation quality. GigaWorld-Policy takes a pragmatic decoupling approach. During training, the model predicts both action sequences and future video. Video generation provides physical-plausibility supervision. Through causal design, future video tokens don't influence action tokens. At deployment, video generation shuts off. Only action prediction runs.
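One way to picture the "future video tokens don't influence action tokens" constraint is an attention mask. The sketch below is an assumption-heavy illustration, not GigaWorld-Policy's actual implementation: the token layout `[observation | action | future video]` and the function `build_mask` are hypothetical. It shows why video tokens can be dropped at deployment without changing what the action tokens see.

```python
def build_mask(n_obs: int, n_act: int, n_vid: int) -> list:
    """mask[q][k] is True when query token q may attend to key token k.

    Assumed layout: [observation | action | future video].
    """
    n = n_obs + n_act + n_vid
    vid_start = n_obs + n_act
    mask = [[False] * n for _ in range(n)]
    for q in range(n):
        for k in range(n):
            if k < n_obs:
                mask[q][k] = True  # every token sees the observation
            elif k < vid_start:
                # Actions are causal among themselves; video tokens see all actions.
                mask[q][k] = q >= n_obs and k <= q
            else:
                # Only video tokens may attend to video tokens (causally).
                mask[q][k] = q >= vid_start and k <= q
    return mask


full = build_mask(n_obs=2, n_act=3, n_vid=4)    # training: video tokens present
deploy = build_mask(n_obs=2, n_act=3, n_vid=0)  # deployment: video tokens removed

# The action rows attend to exactly the same tokens in both settings.
action_rows_training = [row[:5] for row in full[2:5]]
print(action_rows_training == deploy[2:5])
```

Because no action row has a `True` entry in a video column, removing the video tokens leaves the action computation untouched under this layout.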

This "use during training, discard at deployment" design delivers real gains: 9x faster inference than Motus with 7% higher task success rate. For robot deployments needing real-time response, this balance of inference efficiency and action accuracy matters more than chasing better generation numbers.

Key takeaways:

- Decouples visual dynamics from action prediction: video generation serves as auxiliary supervision during training, gets disabled at deployment.

- 9x faster than Motus with 7% higher success rate; 95% improvement over pi-0.5 on RoboTwin 2.0.

- Inference efficiency matters more for real robot deployment than generation quality benchmarks.

Source: GigaWorld-Policy: An Efficient Action-Centered World–Action Model

04 Architecture 3D Generation Has Been Borrowing the Wrong Tokenization

0.1% of the token count. Better reconstruction quality. LoST's move: shift 3D tokenization from geometric hierarchy to semantic hierarchy. Current autoregressive 3D generation borrows geometric Level-of-Detail from rendering and compression. Encode coarse geometry first, then refine. This hierarchy was designed for rendering efficiency, not autoregressive modeling. Tokens lack semantic coherence, and sequences run long.

LoST reorders tokens by semantic salience. The first few tokens decode into a complete, plausible shape. Later tokens add detail. DINO feature space alignment ensures token order reflects "what matters" rather than "where things are." The result: 0.1%–10% of prior methods' token count at better reconstruction and generation quality.

Key takeaways:

- The bottleneck in 3D autoregressive generation is tokenization assumptions, not model size.

- Ordering tokens by semantic hierarchy instead of geometric hierarchy cuts sequence length by up to three orders of magnitude (0.1%–10% of prior methods' token count).

- This infrastructure-level fix matters more for practical 3D deployment than scaling up models.

Source: LoST: Level of Semantics Tokenization for 3D Shapes

05 Efficiency Video LLM Token Pruning Always Misses Half the Problem

The inference bottleneck in video VLMs largely comes from spatiotemporal redundancy in visual tokens. Existing pruning approaches hit an awkward gap. ViT-side pruning ignores what the downstream language task needs. LLM-side pruning only starts after the ViT has already processed every redundant token.

STTS unifies both stages. A lightweight scoring module prunes tokens in ViT and LLM simultaneously. Temporal scoring is trained with an auxiliary loss. Spatial scoring is learned from gradients backpropagated from the LLM. No text-conditioned filtering, no token merging. End-to-end training is all it takes.
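The selection step in score-based pruning is simple enough to sketch. The code below is not STTS itself: the scores are hard-coded stand-ins for a learned scoring module, and `prune_tokens` and `keep_ratio` are hypothetical names. It only shows score-based top-k selection applied at two stages, once before the tokens leave the vision encoder and once more inside the language model.

```python
def prune_tokens(tokens: list, scores: list, keep_ratio: float) -> list:
    """Keep the highest-scoring tokens, preserving their original order."""
    if len(tokens) != len(scores):
        raise ValueError("tokens and scores must have the same length")
    k = max(1, int(len(tokens) * keep_ratio))  # rounds down, never below 1
    top = sorted(range(len(tokens)), key=lambda i: scores[i], reverse=True)[:k]
    return [tokens[i] for i in sorted(top)]


# Six visual tokens from three frames (two patches each).
tokens = ["f0_a", "f0_b", "f1_a", "f1_b", "f2_a", "f2_b"]

# Stage 1 (ViT side): made-up scores, keep half of the tokens.
vit_scores = [0.9, 0.1, 0.8, 0.2, 0.3, 0.7]
after_vit = prune_tokens(tokens, vit_scores, keep_ratio=0.5)

# Stage 2 (LLM side): made-up scores for the survivors, keep about 70%.
llm_scores = [0.2, 0.6, 0.9]
after_llm = prune_tokens(after_vit, llm_scores, keep_ratio=0.7)

print(after_vit)  # → ['f0_a', 'f1_a', 'f2_b']
print(after_llm)  # → ['f1_a', 'f2_b']
```

Because the ratios multiply, the absolute number of tokens removed grows with the number of sampled frames, which fits the long-video framing.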

Across 13 video QA tasks, average performance drops less than 1 point. Efficiency gains grow as you sample more frames, making this especially practical for long video. The method isn't complex. It wins by solving a fragmentation problem with one unified framework.

Key takeaways:

- Unifies token pruning across ViT and LLM, fixing the disconnect in approaches that prune each side independently.

- End-to-end trainable without text-conditioned filtering; low integration cost.

- Efficiency gains increase with more sampled frames. Clear deployment value for long videos.

Source: Unified Spatio-Temporal Token Scoring for Efficient Video VLMs

Today's Observation

Three findings from today connect in an interesting way. Complementary RL reveals that agent experience pools go stale without active updates. CoVerRL finds that majority voting in label-free RL collapses output diversity. The Video-SFT study shows temporal gains come at a hidden spatial cost.

These problems span agent training, reasoning optimization, and multimodal fine-tuning. They share one underlying mechanism: optimization pressure creates visible progress on target metrics while inflicting systematic damage on dimensions that standard evaluations don't cover. Nobody monitors experience pool freshness during agent training. Nobody tracks output distribution entropy during reasoning training. Nobody watches image understanding retention during video fine-tuning. These aren't vague "training is hard" complaints. They're specific metric blind spots: degradation dimensions that default pipelines don't monitor and therefore don't expose.
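Output distribution entropy, one of the blind spots above, is cheap to compute. The sketch below is a minimal illustration under stated assumptions: `output_entropy` treats sampled answers as discrete strings, and `needs_review` with its 20% relative-drop threshold is a hypothetical rule, not something taken from any of the papers.

```python
import math
from collections import Counter


def output_entropy(samples: list) -> float:
    """Shannon entropy (bits) of the empirical distribution over sampled outputs."""
    total = len(samples)
    counts = Counter(samples)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def needs_review(history: list, max_drop: float = 0.2) -> bool:
    """True when the latest value fell more than max_drop (relative) below the first one."""
    if len(history) < 2 or history[0] <= 0:
        return False
    return (history[0] - history[-1]) / history[0] > max_drop


# Answers sampled for the same prompt, early and late in training (made-up data).
early = ["A", "B", "C", "D"]
late = ["A", "A", "A", "B"]

curve = [output_entropy(early), output_entropy(late)]
print(curve[0])             # → 2.0
print(needs_review(curve))  # → True
```

The same `needs_review` check works for any curve where a drop is bad news, such as an image benchmark score logged during video fine-tuning.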

"Pipeline runs, metrics go up" doesn't mean training is working correctly. A low-cost countermeasure: add 2–3 non-target degradation metrics to your training dashboard. Nothing fancy. Plot the curves you're currently ignoring: distribution shift between experience pool and current policy, output diversity entropy, periodic eval on non-target tasks. When those curves drop, trigger a review. Near-zero cost, but catches damage before it compounds into deployment problems.