Today's Overview

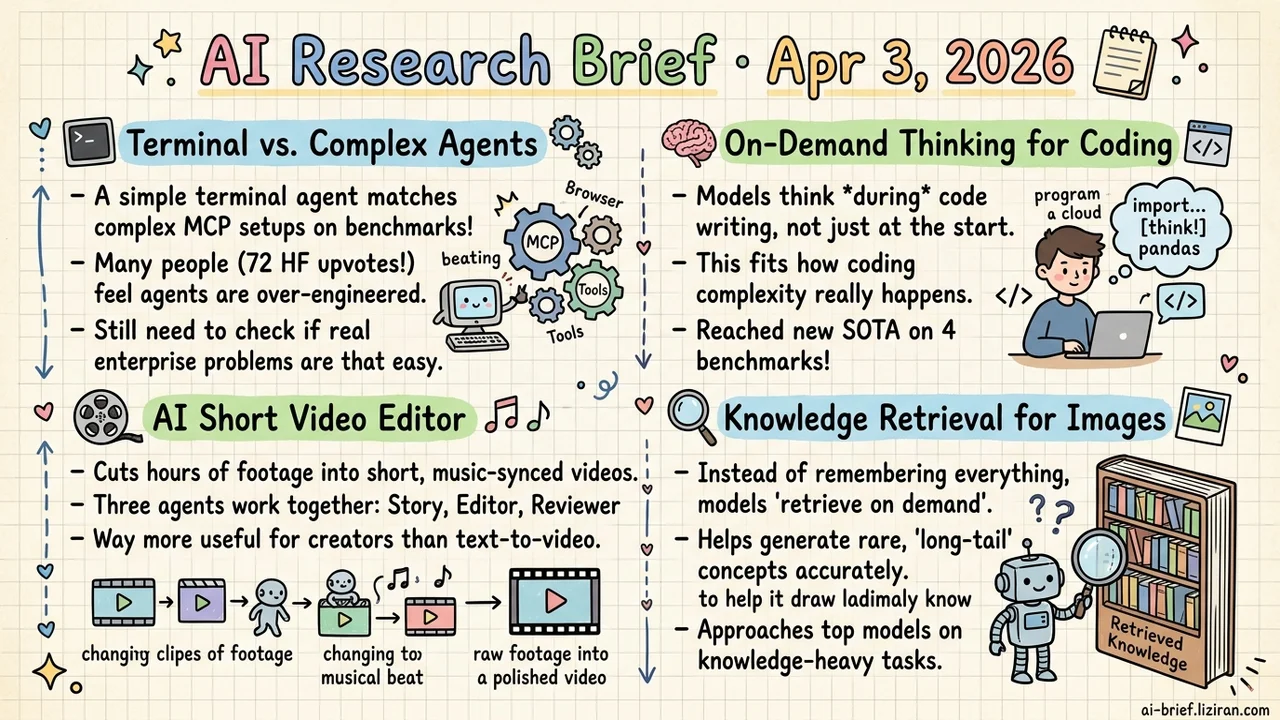

- A Terminal-Only Agent Matches Fully Equipped MCP Setups. 72 HF upvotes suggest that practitioners' anxiety about agent over-engineering is real, but whether the benchmark tasks cover true enterprise complexity still deserves scrutiny.

- On-Demand Reasoning Tokens During Code Generation Hit SOTA Across Four Benchmarks. Think-Anywhere triggers reasoning at high-entropy positions, matching how complexity actually unfolds when you write code.

- Three-Layer Agent Collaboration Turns Hours of Footage Into Music-Synced Short Videos. Understanding and editing existing material delivers far more practical value to creators than text-to-video generation.

- Image Generation Shifts From "Memorize Everything" to "Retrieve on Demand." Unify-Agent uses an agentic pipeline to break through the knowledge ceiling on long-tail concepts, approaching top closed-source models after training on 143K trajectories.

Featured

01 Agent A Fully Armed Agent, Beaten by a Terminal Window

The industry is busy stacking agent capabilities: MCP protocols, browser control, multi-role orchestration, tool chain registries. More complexity, more power — supposedly. Then this paper shows a coding agent with just a terminal and file system can match or beat those heavyweight setups on enterprise automation tasks.

The approach is dead simple: skip GUIs and dedicated tool abstractions, let the model write code to call APIs, read/write files, and run CLI commands directly. 72 HF upvotes confirm this minimalist claim hit a nerve among practitioners tired of over-engineered agent stacks.
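
To make the "terminal plus file system" idea concrete, here is a minimal sketch of the only two tools such an agent would need. It is an illustration under stated assumptions, not the paper's implementation: the step format (`tool`, `path`, `content`, `argv`) and the names `run_command` and `execute_plan` are hypothetical, and the model that would emit the steps is replaced by a hard-coded plan.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_command(argv, cwd, timeout=10):
    """Run one command in the agent's working directory and capture its result."""
    proc = subprocess.run(argv, cwd=cwd, capture_output=True, text=True, timeout=timeout)
    return {"exit_code": proc.returncode, "stdout": proc.stdout, "stderr": proc.stderr}


def execute_plan(steps, cwd):
    """Execute steps in order and stop at the first failing command."""
    transcript = []
    for step in steps:
        if step["tool"] == "write_file":
            Path(cwd, step["path"]).write_text(step["content"])
            result = {"exit_code": 0, "stdout": "", "stderr": ""}
        elif step["tool"] == "run":
            result = run_command(step["argv"], cwd)
        else:
            raise ValueError(f"unknown tool: {step['tool']}")
        transcript.append(result)
        if result["exit_code"] != 0:
            break  # in a real loop, the error output would go back to the model
    return transcript


# Hypothetical steps a model might emit: write a small script, then run it.
workdir = tempfile.mkdtemp()
plan = [
    {"tool": "write_file", "path": "report.py", "content": "print(sum([3, 4, 5]))\n"},
    {"tool": "run", "argv": [sys.executable, "report.py"]},
]
transcript = execute_plan(plan, workdir)
print(transcript[-1]["stdout"].strip())  # prints 12
```

Everything else (API calls, data transforms, CLI usage) is expressed as code the model writes, which is why no dedicated tool registry appears in the sketch.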

A reality check is warranted. The "enterprise automation" evaluation may not cover real-world complexity: permission management, error recovery, multi-system orchestration. These are exactly where simple approaches tend to break. Still, one point stands. When the base model is strong enough, complex agent architectures may not be solving the user's problem. They may be solving problems the framework itself created.

Key takeaways:

- A terminal + file system agent matches or beats MCP and web agent approaches on enterprise automation benchmarks.

- When base models are strong enough, stacking tool abstraction layers may yield negative marginal returns.

- Whether the evaluation tasks cover real enterprise complexity needs closer examination.

Source: Terminal Agents Suffice for Enterprise Automation

02 Code Intelligence Why Not Front-Load All the Thinking?

Think-Anywhere's idea is intuitive: let models insert reasoning tokens at any point during code generation, instead of dumping all thinking upfront. A Tsinghua team first uses cold-start training to teach the model a "pause and think anywhere" pattern. Then outcome-based RL rewards let the model discover where reasoning insertions actually help. It hits SOTA on four major benchmarks — LeetCode, LiveCodeBench, and two others — beating existing upfront thinking methods and recent post-training approaches.

The analysis matters more than the numbers. The model tends to trigger reasoning at high-entropy positions, where uncertainty peaks. It learned to think when thinking matters, not to mechanically distribute compute.
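
"High-entropy position" can be made concrete with a short sketch. It computes the Shannon entropy of each next-token distribution and flags the positions above a threshold. This only illustrates the analysis described above: in the paper the model learns where to reason through RL, so the fixed threshold, the toy distributions, and the function names here are assumptions for illustration.

```python
import math


def token_entropy(probs):
    """Shannon entropy (in nats) of one next-token probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def high_entropy_positions(distributions, threshold):
    """Indices of positions whose next-token entropy exceeds the threshold."""
    return [i for i, probs in enumerate(distributions) if token_entropy(probs) > threshold]


# Toy distributions over a 4-token vocabulary, one per generation step.
distributions = [
    [0.97, 0.01, 0.01, 0.01],  # near-certain continuation
    [0.25, 0.25, 0.25, 0.25],  # maximally uncertain (entropy = ln 4)
    [0.90, 0.05, 0.03, 0.02],  # fairly confident
    [0.40, 0.30, 0.20, 0.10],  # several plausible continuations
]
positions = high_entropy_positions(distributions, threshold=1.0)
print(positions)  # prints [1, 3]
```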

Key takeaways:

- On-demand reasoning tokens fit code generation better than "think first, write later," matching how complexity emerges incrementally.

- A two-stage pipeline (cold-start + RL) lets models autonomously learn when and where to reason.

- The approach generalizes across model architectures rather than being an artifact of one specific design.

Source: Think Anywhere in Code Generation

03 Video Gen Three Agents Take Over the Editing Suite

Most video generation research chases text-to-video. Content creators spend far more time on a different problem: cutting hours of raw footage into a tight, music-synced edit. CutClaw decomposes this into three-layer agent collaboration. Hierarchical multimodal decomposition captures fine-grained visual and audio details alongside global structure. A Playwriter Agent orchestrates the narrative, anchoring visual scenes to musical beat changes. Editor and Reviewer Agents then collaborate on the final cut, selecting shots by aesthetic and semantic criteria.
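
One way to picture "anchoring visual scenes to musical beat changes" is to snap each proposed cut to the nearest beat timestamp. The sketch below shows that idea only. It is not CutClaw's algorithm, and the function name, beat grid, and cut times are made up for illustration.

```python
from bisect import bisect_left


def snap_to_beat(cut_time, beat_times):
    """Return the beat timestamp closest to a proposed cut.

    Assumes beat_times is sorted ascending and non-empty.
    """
    i = bisect_left(beat_times, cut_time)
    # Only the beats just before and just after the cut can be the nearest.
    candidates = beat_times[max(i - 1, 0): i + 1]
    return min(candidates, key=lambda b: abs(b - cut_time))


beats = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5]   # seconds, e.g. a steady 120 BPM track
proposed_cuts = [0.62, 1.3, 2.45]        # cut points chosen on visual grounds
snapped = [snap_to_beat(c, beats) for c in proposed_cuts]
print(snapped)  # prints [0.5, 1.5, 2.5]
```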

This decomposition maps to real editing stages: understand the material, build the story, refine the output. 40 HF upvotes reflect interest from the creator community. Whether it holds up across varying footage lengths and scene complexity requires a closer look at the experiments.

Key takeaways:

- Editing existing footage matters more to creators' daily workflows than generating new content from text.

- Multi-agent division of labor (narrative planning, shot selection, quality review) mirrors real editing stages and offers a reusable architecture pattern.

- Hours-long footage processing is the headline capability, but real-world robustness depends on material complexity.

Source: CutClaw: Agentic Hours-Long Video Editing via Music Synchronization

04 Image Gen Teaching Generators to Look Things Up First

Unified multimodal models generate common concepts well. Long-tail knowledge is another story. Specific cultural symbols, obscure landmarks: the model fabricates details because these concepts never made it into parametric memory. Unify-Agent reframes image generation as an agentic pipeline: understand the prompt, actively retrieve multimodal evidence, redescribe based on retrieval results, then generate. The team built 143K agent trajectory samples for end-to-end supervised training and released FactIP, a benchmark covering 12 categories of long-tail cultural concepts.
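
The retrieve, redescribe, and generate stages described above can be sketched as plain function composition. This is a toy under stated assumptions, not Unify-Agent's code: retrieval is a text-only dictionary lookup (the paper retrieves multimodal evidence), the knowledge-base entry is invented, and `generate` is a placeholder callable standing in for an image model.

```python
def retrieve(prompt, knowledge_base):
    """Return stored facts for every known entity mentioned in the prompt."""
    lowered = prompt.lower()
    return [fact for entity, fact in knowledge_base.items() if entity in lowered]


def redescribe(prompt, evidence):
    """Fold retrieved evidence into the prompt; leave it unchanged if none was found."""
    if not evidence:
        return prompt
    return prompt + " Details: " + " ".join(evidence)


def run_pipeline(prompt, knowledge_base, generate):
    """Retrieve evidence, rewrite the prompt with it, then hand off to the generator."""
    evidence = retrieve(prompt, knowledge_base)
    grounded_prompt = redescribe(prompt, evidence)
    return generate(grounded_prompt)


# Toy stand-ins: a one-entry knowledge base and a "generator" that echoes its input.
kb = {"example landmark": "A hypothetical landmark with a red tiled roof."}
result = run_pipeline("A photo of Example Landmark at dusk.", kb, generate=lambda p: p)
print(result)
```

The point of the structure is that the generator only ever sees a prompt already grounded in retrieved evidence, so missing parametric knowledge is supplied at runtime.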

On knowledge-intensive generation tasks, Unify-Agent clearly improves over the base model and approaches the world knowledge of top closed-source systems. Assessing the exact gap requires the comparisons in the full paper.

Key takeaways:

- Parametric knowledge has a coverage ceiling. Long-tail concepts can't be covered simply by adding more training data.

- An agentic "retrieve then generate" pipeline shifts image models from memory-driven to on-demand lookup.

- Teams working on knowledge-intensive image generation should watch this approach closely.

Source: Unify-Agent: A Unified Multimodal Agent for World-Grounded Image Synthesis

Today's Observation

Three of today's four picks fly the "agentic" flag. Each handles task complexity in a fundamentally different way.

Terminal Agents bets on minimalism: give the agent a terminal, let coding ability absorb all task complexity. Extra tool abstraction layers are dead weight. CutClaw takes the opposite path, using Playwriter, Editor, and Reviewer agents to explicitly model each stage of the editing workflow. Unify-Agent picks a third route: no multi-role orchestration, but retrieval and tool calls bolted onto an image model, offloading knowledge acquisition from parametric memory to runtime.

The underlying question is an architecture choice: who should bear the task complexity? When the base model is strong and the task is expressible as code, a simple interface is more reliable. That's Terminal Agents' bet. When the task involves multimodal perception and creative judgment, explicit role division remains necessary. That's CutClaw's position. When the bottleneck is knowledge boundaries rather than reasoning ability, external tools beat more parameters. That's Unify-Agent's call.

A practical decision framework: before starting an agent project, ask whether your task complexity can be fully expressed as code. If yes, try the terminal-level minimalist approach first. If not, identify whether the bottleneck is multi-step collaboration or knowledge acquisition, then decide between role specialization and external tool augmentation.
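
The decision framework above is small enough to write down as a function. This is a sketch of the reasoning in this section, not a validated rule; the function name and the exact bottleneck strings are illustrative assumptions.

```python
def recommend_architecture(expressible_as_code, bottleneck=None):
    """Map the two questions from the framework to a starting architecture.

    bottleneck is only consulted when the task is not fully expressible as code.
    """
    if expressible_as_code:
        return "terminal-level minimalist agent"
    if bottleneck == "multi-step collaboration":
        return "role specialization"
    if bottleneck == "knowledge acquisition":
        return "external tool augmentation"
    raise ValueError("identify the bottleneck before choosing an architecture")


print(recommend_architecture(True))
print(recommend_architecture(False, "multi-step collaboration"))
print(recommend_architecture(False, "knowledge acquisition"))
```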