Today's Overview

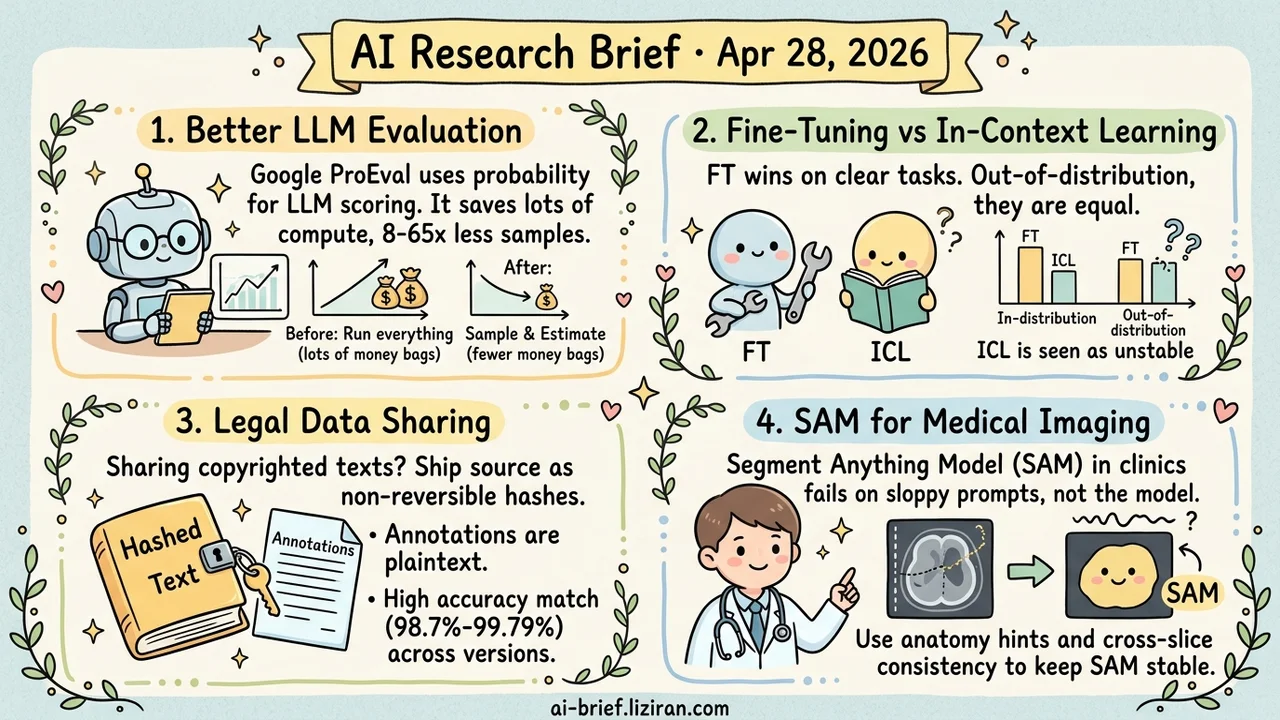

- Benchmark Eval Becomes a Probability Problem. Google's ProEval treats LLM benchmark scoring as Bayesian estimation with a pretrained Gaussian process surrogate, cutting sample budgets 8-65x at 1% error.

- FT vs ICL Finally Has a Clean Comparison. On formal-language tasks, FT wins clearly in distribution, the two tie out of distribution, and ICL's sensitivity to model scale and tokenization turns out to be structural rather than noise.

- Copyrighted Corpora Get a Legal Workaround. Annotations release in plaintext while source text ships as non-reversible hashes; cross-edition alignment still hits 98.7%-99.79% token match.

- SAM in the Clinic Stalls on Prompts, Not the Model. Saliency-guided anatomical priors plus cross-slice consistency keep SAM stable when the only input is a sloppy midline point.

Featured

01 Evaluation: Turn Benchmarks Into Bayesian Estimation

A full LLM evaluation run keeps getting more expensive. Inference is slow, human grading is costly, and the benchmark pool keeps growing. Google's ProEval reframes performance estimation as Bayesian quadrature, with a pretrained Gaussian process as the surrogate. "What will this model score on this benchmark" becomes something you can estimate up front from a small sample instead of measuring from scratch each time.
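To make the idea concrete, here is a minimal sketch of Bayesian quadrature over a finite benchmark pool. It is not ProEval's implementation: the item `features`, the RBF kernel, and the hand-set hyperparameters (`length_scale`, `variance`, `prior_mean`, `noise`) are all assumptions standing in for whatever the pretrained Gaussian process actually provides. It also treats 0/1 scores as real-valued observations, which is a simplification.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0, variance=0.25):
    """Squared-exponential kernel between two sets of item features."""
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return variance * np.exp(-0.5 * sq_dist / length_scale**2)

def estimate_benchmark_score(features, observed_idx, observed_scores,
                             prior_mean=0.5, noise=1e-3):
    """Posterior mean and variance of the average score over ALL items,
    given scores for only the observed subset."""
    x_obs = features[observed_idx]
    k_oo = rbf_kernel(x_obs, x_obs) + noise * np.eye(len(observed_idx))
    k_ao = rbf_kernel(features, x_obs)
    k_aa = rbf_kernel(features, features)

    # Standard GP regression posterior over every item in the pool.
    resid = np.asarray(observed_scores, dtype=float) - prior_mean
    post_mean = prior_mean + k_ao @ np.linalg.solve(k_oo, resid)
    post_cov = k_aa - k_ao @ np.linalg.solve(k_oo, k_ao.T)

    # The benchmark score is a uniform average, so the quadrature
    # weights are 1/N and the estimate is a linear functional of the GP.
    n = len(features)
    w = np.full(n, 1.0 / n)
    return float(w @ post_mean), float(w @ post_cov @ w)
```

The returned variance depends only on which items were scored, not on the scores themselves, which is what lets a scheme like this pick the next item to evaluate before paying for it.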

On reasoning, safety alignment, and classification tasks, reaching 1% estimation error takes 8-65x fewer samples. The same budget also surfaces a wider pool of failure cases. The main value is less the compute saved than how the evaluation budget gets allocated. Use the surrogate to filter out comparisons unlikely to show meaningful change. Save expensive human grading for the genuinely uncertain range.
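One way to picture that allocation rule is a simple routing check on two surrogate estimates. This is an illustrative sketch, not something the paper specifies: the function name `route_comparison`, the independence assumption between the two estimates, and the `z=1.96` threshold are all hypothetical choices.

```python
from math import sqrt

def route_comparison(mean_a, var_a, mean_b, var_b, z=1.96):
    """Decide whether a model comparison still needs human grading.

    Returns "skip" when the surrogate already separates the two models
    at the chosen confidence level, "human_review" otherwise.
    Assumes the two score estimates are independent.
    """
    diff = mean_a - mean_b
    std = sqrt(var_a + var_b)
    return "skip" if abs(diff) > z * std else "human_review"

# A 20-point gap with tight estimates is settled; a 1-point gap is not.
print(route_comparison(0.80, 1e-4, 0.60, 1e-4))  # skip
print(route_comparison(0.71, 4e-4, 0.70, 4e-4))  # human_review
```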

The open question is how stable the prior transfer is. Cross-generation architecture shifts, or new benchmarks whose distribution drifts from what the GP saw during pretraining, will erode prior reliability. Any deployment hitting that boundary needs a calibration layer.

Key takeaways:

- Evaluation can move from "run everything" to "sample-and-estimate plus active selection," changing the cost structure of comparison experiments.

- The deployment posture is budget allocation: filter out clearly differentiated cases fast, send the uncertain ones to human review.

- Prior transfer breaks on cross-generation models or new-distribution benchmarks; layer a calibration step on top before relying on it.

02 Training: Why FT vs ICL Studies Disagree, and What Fixes It

FT (fine-tuning) and ICL (in-context learning) comparisons keep landing in different places. The main reason is that natural-language benchmarks have fuzzy boundaries and likely data contamination. This paper runs a controlled testbed on formal languages with clear grammar, sampleable strings, and no training-data leakage. It adds a discriminative test: a model has to assign higher generation probability to in-language strings than out-of-language ones to count as having mastered the language.
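The discriminative test can be sketched as a pairwise ranking check. The paper's exact protocol is not reproduced here: `discrimination_rate` and `toy_log_prob` are hypothetical names, the toy language a^n b^n is chosen only for illustration, and a real setup would replace the toy scorer with summed token log-probabilities from the model under test.

```python
import math
from typing import Callable, Sequence

def discrimination_rate(log_prob: Callable[[str], float],
                        positives: Sequence[str],
                        negatives: Sequence[str]) -> float:
    """Fraction of (in-language, out-of-language) pairs where the
    in-language string gets strictly higher log-probability."""
    pairs = [(p, n) for p in positives for n in negatives]
    wins = sum(log_prob(p) > log_prob(n) for p, n in pairs)
    return wins / len(pairs)

def toy_log_prob(s: str) -> float:
    """Stand-in scorer for a^n b^n (NOT a real language model):
    penalizes unequal counts and any 'b' that precedes an 'a'."""
    mismatch = abs(s.count("a") - s.count("b"))
    disorder = s.count("ba")
    return -math.log1p(mismatch + 2 * disorder)

in_language = ["ab", "aabb", "aaabbb"]
out_of_language = ["aab", "abab", "ba"]
print(discrimination_rate(toy_log_prob, in_language, out_of_language))  # 1.0
```

A scorer that cannot tell the two sets apart, such as one returning a constant, scores 0.0 here because the comparison is strict.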

Results land in three layers. In-distribution generalization is clearly better with FT than ICL. Out-of-distribution they tie. Inductive bias looks similar at low proficiency and only diverges at high proficiency. ICL is sensitive to model scale, model family, and tokenizer vocabulary, while FT stays much more stable across all three.

Conclusions from a controlled comparison transfer better than those from a one-off A/B test. ICL's instability should count as an inherent property, not experimental noise.

Key takeaways:

- In-distribution FT wins, out-of-distribution they tie, and inductive bias only diverges at high proficiency.

- ICL sensitivity to model scale and tokenization is structural; don't expect a different prompt to stabilize it during model selection.

- For reproducible capability comparisons, formal-language tasks are cleaner than NLP benchmarks.

Source: Fine-tuning vs. In-context Learning in Large Language Models: A Formal Language Learning Perspective

03 Safety: Turn Corpus Sharing's Legal Problem Into an Engineering One

NLP has a long-standing practical bottleneck. High-quality annotated corpora are often built on copyrighted novels and news, and researchers can rarely share full datasets legally. This ACL paper splits the corpus in two. Annotations go out in plaintext. Source text gets published as non-reversible hashes. Users have to legally hold the original material themselves, then apply the same hash to their own tokens to align with the annotations.

The hash holds up across edition differences. Different versions of the same novel still align on 98.7%-99.79% of tokens. The legal barrier becomes an engineering one. The authors open-sourced the implementation as novelshare, ready to use for teams doing literary or news-domain NLP.
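The hash-then-align workflow can be sketched with the Python standard library. This is not the novelshare API or the paper's hashing scheme, neither of which is shown here: `hash_tokens`, `align`, the salt, and the 16-character digest truncation are assumptions for illustration. Plain per-token hashing is also guessable from a word list, so how the paper achieves non-reversibility should be taken from the paper itself.

```python
import hashlib
from difflib import SequenceMatcher

def hash_tokens(tokens, salt="demo-salt"):
    """Replace each token with a truncated salted SHA-256 digest."""
    return [hashlib.sha256((salt + tok).encode("utf-8")).hexdigest()[:16]
            for tok in tokens]

def align(published_hashes, local_tokens, salt="demo-salt"):
    """Map positions in the published hash sequence to positions in the
    user's own copy, and report the share of published tokens matched."""
    local_hashes = hash_tokens(local_tokens, salt)
    matcher = SequenceMatcher(a=published_hashes, b=local_hashes,
                              autojunk=False)
    mapping = {}
    for block in matcher.get_matching_blocks():
        for k in range(block.size):
            mapping[block.a + k] = block.b + k
    return mapping, len(mapping) / len(published_hashes)

# The publisher's edition and the user's edition differ in one token.
edition_a = "It was the best of times , it was the worst of times".split()
edition_b = "It was the best of times ; it was the worst of times".split()

published = hash_tokens(edition_a)      # what ships with the annotations
mapping, rate = align(published, edition_b)
print(edition_b[mapping[10]])           # worst
print(round(rate, 3))                   # 0.923
```

An annotation attached to published position 10 resolves to the same word in the user's edition, while the single differing token is left unmapped.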

Key takeaways:

- Annotated corpora built on copyrighted material now have a legal sharing path; plaintext annotations plus hashed source text is the core idea.

- The hash holds up across edition differences, with cross-version alignment at 98.7%-99.79%.

- Teams working on literary or news NLP can pick up the novelshare Python implementation directly.

Source: Overcoming Copyright Barriers in Corpus Distribution Through Non-Reversible Hashing

04 AI for Science: Clean Benchmark Prompts Aren't Clinical Prompts

SAM's medical-imaging benchmark wins mostly assume clean, precise prompts. Clinical workflows give it sloppy midline points that drift into adjacent anatomy and steer SAM toward inconsistent or incomplete masks. SPD's fix is layered. A lightweight saliency head learns a data-driven anatomical prior and outputs a confidence localization map as the anchor. Adjacent slices then validate and complete the noisy prompt, forming a consensus prompt close to expert reasoning. A cross-slice consistency objective folds local anatomical coherence into the loss.
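A rough sketch of what a consensus prompt could look like, under heavy assumptions: the function `consensus_prompt`, the median over neighbouring slices, and the snap-to-saliency-peak step are illustrative guesses, not SPD's actual procedure, and the saliency maps are assumed to be given as one 2D array per slice.

```python
import numpy as np

def consensus_prompt(points, saliency, index, radius=1, window=2):
    """Build a cleaned-up point prompt for one slice.

    points   : list of (row, col) noisy clicks, one per slice
    saliency : array of shape (num_slices, H, W) with confidence maps
    index    : slice to build the prompt for
    """
    # Step 1: neighbouring slices vote; the median damps a stray click.
    lo = max(0, index - radius)
    hi = min(len(points), index + radius + 1)
    votes = np.asarray(points[lo:hi], dtype=float)
    r0, c0 = np.median(votes, axis=0).round().astype(int)

    # Step 2: snap to the saliency peak inside a small local window.
    sal = saliency[index]
    r_lo, r_hi = max(0, r0 - window), min(sal.shape[0], r0 + window + 1)
    c_lo, c_hi = max(0, c0 - window), min(sal.shape[1], c0 + window + 1)
    patch = sal[r_lo:r_hi, c_lo:c_hi]
    dr, dc = np.unravel_index(np.argmax(patch), patch.shape)
    return int(r_lo + dr), int(c_lo + dc)

# Three 9x9 slices with the structure centred at (4, 4); the middle
# slice's click at (1, 7) has drifted into neighbouring anatomy.
maps = np.zeros((3, 9, 9))
maps[:, 4, 4] = 1.0
clicks = [(4, 4), (1, 7), (4, 5)]
print(consensus_prompt(clicks, maps, index=1))  # (4, 4)
```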

On four MRI/CT benchmarks, region and boundary metrics consistently beat existing SAM adaptations and supervised baselines. The vertical-deployment bottleneck for foundation models shifts from "model capability" to "prompt resilience." For clinical deployment, investment at this layer typically returns more than model fine-tuning.

Key takeaways:

- SAM's clinical failure modes cluster at prompt quality, not the model itself; prompt resilience is the real bottleneck for vertical foundation-model deployment.

- Saliency-guided priors plus cross-slice consistency is a general pattern for weak annotations, transferable to documents and remote sensing where adjacent context exists.

- For teams adapting SAM-style models to a vertical, evaluate real annotation quality variance first, then decide whether to invest at the prompt layer or the model layer.

Source: Learning from Noisy Prompts: Saliency-Guided Prompt Distillation for Robust Segmentation with SAM