Today's Overview

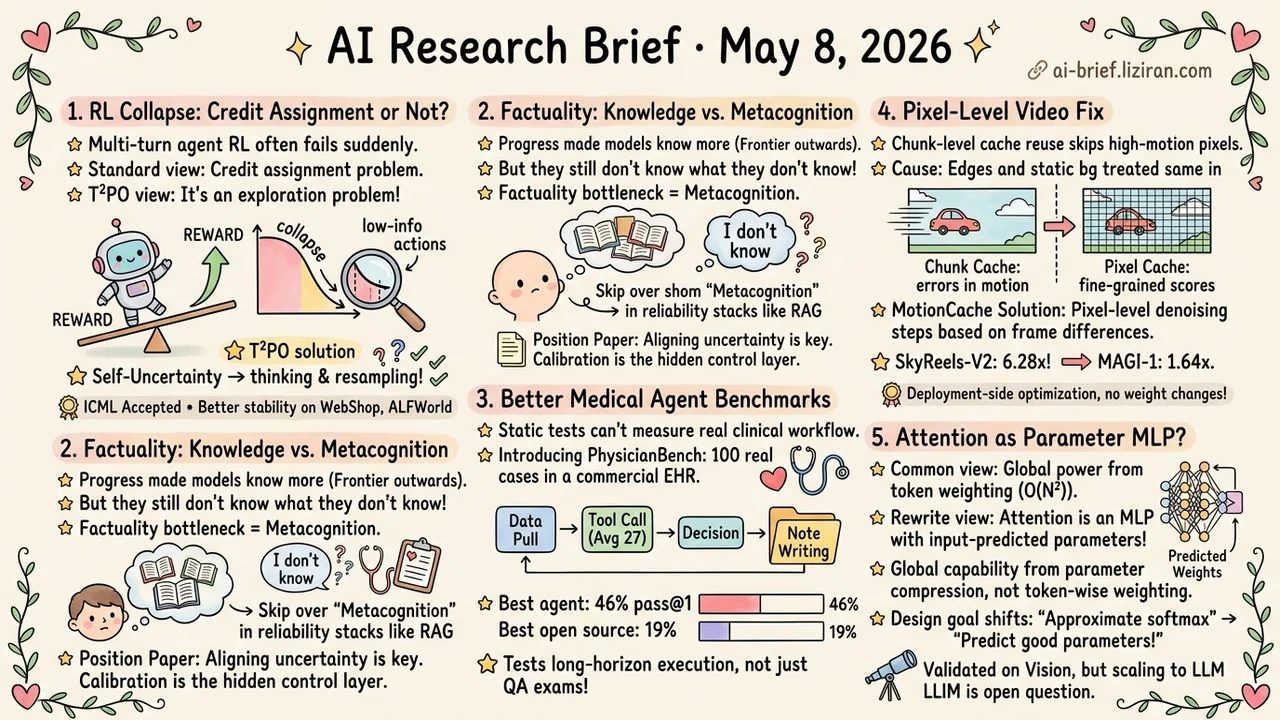

- Multi-Turn Agent RL Collapse May Not Be a Credit Assignment Problem. T²PO uses the model's own uncertainty to trigger thinking and resampling. Stability and final performance both rise on WebShop, ALFWorld, and Search QA. Accepted at ICML.

- Factuality's Bottleneck Is Metacognition, Not Knowledge Volume. A position paper argues models still don't know what they don't know. Calibrated uncertainty is the hidden control layer in any agent reliability stack.

- A Better Scorecard for Putting Medical Agents to Work. PhysicianBench moves 100 real consultations into an EHR environment built on a commercial vendor's API. Each task averages 27 tool calls. The best agent reaches 46% pass@1; the best open-source agent only 19%.

- Pixel-Level Fix for Video Generation Caching. MotionCache assigns denoising steps per pixel using frame differences. SkyReels-V2 sees a 6.28x speedup; MAGI-1 only 1.64x. Transfer depends heavily on the base model.

- What If Attention Is Just a Parameter-Prediction MLP. WeightFormer rewrites attention math as an MLP whose parameters are predicted from input. The linearization design goal shifts from "approximate softmax" to "predict good parameters."

Featured

01 Multi-Turn Agent RL Collapse Isn't About Credit Assignment

If you've trained a reasoning LLM with multi-turn RL, you know the collapse curve. Reward climbs, then suddenly tanks a few hundred steps in. The standard explanation blames credit assignment or weak trajectory filtering. T²PO offers a different diagnosis.

In T²PO's diagnosis, the policy keeps generating low-information actions that neither reduce the model's uncertainty nor pull useful signal from the environment, so the whole rollout spins in place. T²PO handles this at two levels. At the token level, it monitors the marginal change in uncertainty and fires a "thinking" intervention when that change drops below a threshold. At the turn level, it identifies interactions where exploration progress is negligible and resamples them outright.
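The two-level control loop can be sketched in a few lines. This is a minimal illustration under stated assumptions, not T²PO's implementation: uncertainty is taken to be the Shannon entropy of the next-token distribution, "marginal change" is taken to be the gap between the mean entropy of two consecutive token windows, and a turn counts as stalled when it reduces uncertainty by less than a threshold. The functions `should_think` and `stalled_turns` and the parameters `window`, `token_eps`, and `turn_eps` are hypothetical placeholders.

```python
import math
from typing import List, Sequence


def token_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of one next-token probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def should_think(entropies: List[float], window: int = 4, token_eps: float = 0.05) -> bool:
    """Token-level trigger (hypothetical rule): return True when mean entropy over the
    latest `window` tokens barely differs from the window before it, i.e. the marginal
    change in uncertainty has dropped below `token_eps`."""
    if len(entropies) < 2 * window:
        return False  # not enough history to measure a change yet
    prev = sum(entropies[-2 * window:-window]) / window
    curr = sum(entropies[-window:]) / window
    return abs(prev - curr) < token_eps


def stalled_turns(turn_uncertainty: List[float], turn_eps: float = 0.1) -> List[int]:
    """Turn-level check (hypothetical rule): flag turn i for resampling when the
    uncertainty reduction it achieved, u[i-1] - u[i], is below `turn_eps`."""
    return [
        i for i in range(1, len(turn_uncertainty))
        if turn_uncertainty[i - 1] - turn_uncertainty[i] < turn_eps
    ]


if __name__ == "__main__":
    print(should_think([1.2] * 8))                                 # True: entropy is flat
    print(should_think([2.0, 1.9, 1.8, 1.7, 1.0, 0.9, 0.8, 0.7]))  # False: still dropping
    print(stalled_turns([2.0, 1.5, 1.48, 1.0]))                    # [2]
```

Both signals are cheap to compute from logits the trainer already has, which is what makes an uncertainty-based trigger practical to log during rollouts.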

Training stability and final performance both improve on WebShop, ALFWorld, and Search QA. The paper is accepted at ICML and ships open-source code. Threshold tuning and cross-task transfer need a closer read of the full paper. But the core idea, using a model's own uncertainty as an active training signal, is orthogonal to the mainstream recipe of credit assignment plus trajectory filtering.

Key takeaways:
- If multi-turn agent RL is unstable, check exploration efficiency first. Stop stacking tricks on top of credit assignment.
- A model's own uncertainty can be an active training-time signal, not just an inference-time confidence value.
- Teams running multi-turn agent training can compare this directly against their existing stabilization approach.

Source: T²PO: Uncertainty-Guided Exploration Control for Stable Multi-Turn Agentic Reinforcement Learning

02 Models Don't Know What They Don't Know

Factuality progress over the past few years has mostly come from making models remember more, pushing the knowledge frontier outward. This paper names the layer that gets skipped: models still don't recognize their own ignorance, so even on the simplest factoid QA they hallucinate with confidence.

The authors argue for moving past the binary "answer or refuse" framing toward a third path: aligning the uncertainty a model expresses in language with its internal uncertainty, which they frame as metacognition. For tool builders, this is exactly the layer that gets skipped when assembling a reliability stack. The default move is RAG plus a refusal threshold. The question underneath, whether the model's actual uncertainty is being faithfully expressed, usually goes unaddressed.
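One standard way to check whether expressed confidence tracks actual correctness is expected calibration error (ECE): bin answers by stated confidence, then average the gap between each bin's mean confidence and its accuracy, weighted by bin size. The sketch below is a generic ECE computation, not something the position paper proposes. How verbal hedges ("probably", "I'm not sure") get turned into numbers is left as an assumption; here each answer simply arrives with a probability.

```python
from typing import List, Sequence


def expected_calibration_error(
    confidence: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """ECE with equal-width confidence bins over [0, 1].

    confidence[i] is the probability the model assigned to answer i being right;
    correct[i] says whether it actually was."""
    if len(confidence) != len(correct) or len(confidence) == 0:
        raise ValueError("need equal-length, non-empty inputs")
    bins: List[List[int]] = [[] for _ in range(n_bins)]
    for i, c in enumerate(confidence):
        idx = min(int(c * n_bins), n_bins - 1)  # confidence 1.0 goes in the top bin
        bins[idx].append(i)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(confidence[i] for i in members) / len(members)
        accuracy = sum(1 for i in members if correct[i]) / len(members)
        ece += len(members) / len(confidence) * abs(avg_conf - accuracy)
    return ece


if __name__ == "__main__":
    # Says "90% sure" every time but is right only half the time: ECE is about 0.4.
    print(expected_calibration_error([0.9] * 4, [True, False, True, False]))
    # Confidence matches outcomes exactly: ECE is 0.0.
    print(expected_calibration_error([1.0, 0.0], [True, False]))
```

A low ECE on a held-out set is a reasonable precondition before letting a confidence score decide when to retrieve or refuse.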

One caveat. This is a position paper. The authors admit the problem is intrinsically hard and don't offer an implementation. Naming it clearly is the contribution.

Key takeaways:
- Most factuality gains come from "remembering more," not "knowing what you don't know." The two paths get conflated in engineering practice.
- For agent systems, calibrated uncertainty is the control layer that decides when to retrieve and when to trust the model directly.
- This is a position paper, not a method. Implementation remains open.

Source: Hallucinations Undermine Trust; Metacognition is a Way Forward

03 Medical Agents Need an Execution-Grounded Scorecard

Earlier medical agent benchmarks mostly tested static knowledge or single-step decisions: answering memorized medical QA, simulating a single prescription. That leaves a wide gap to real clinical workflow. PhysicianBench moves 100 real consultation cases into an electronic health record (EHR) environment. The interactions go through the same API a commercial EHR vendor exposes, and each task averages 27 tool calls (pulling data across encounters, making decisions, writing notes), with verifiable execution feedback at every step.

Scoring runs across 670 stage-level checkpoints. Of the 13 agents tested, the best reaches 46% pass@1; the best open-source agent reaches 19%. The gap is wide, but the more important point is that the benchmark measures clinical execution, not exam scores.
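Task-level pass@1 and checkpoint-level progress are easy to blur. The sketch below assumes, hypothetically, that a task passes only if every one of its stage checkpoints passes, and reports both numbers side by side. PhysicianBench's actual scoring rule and data format may differ; the `TaskResult` type and the sample runs are illustrative, not taken from the paper.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TaskResult:
    task_id: str
    checkpoints: List[bool]  # one pass/fail flag per stage-level checkpoint


def pass_at_1(results: List[TaskResult]) -> float:
    """Fraction of tasks whose single attempt clears every checkpoint (assumed pass rule)."""
    return sum(all(r.checkpoints) for r in results) / len(results)


def checkpoint_rate(results: List[TaskResult]) -> float:
    """Fraction of all checkpoints passed, pooled across tasks."""
    total = sum(len(r.checkpoints) for r in results)
    passed = sum(sum(r.checkpoints) for r in results)
    return passed / total


if __name__ == "__main__":
    runs = [
        TaskResult("t1", [True, True, True]),
        TaskResult("t2", [True, True, False]),
        TaskResult("t3", [True, False, False]),
    ]
    print(pass_at_1(runs))        # 0.333...: only t1 passes end to end
    print(checkpoint_rate(runs))  # 0.666...: 6 of 9 checkpoints pass
```

Under an all-checkpoints rule, an agent can clear most individual steps and still fail most tasks, which is why long-horizon pass@1 numbers tend to look harsh.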

Key takeaways:
- The hard part of medical agents is long-horizon, compound, cross-encounter workflow. Single-step QA benchmarks no longer say much about it.
- Teams building vertical medical agents should treat execution-grounded evaluation as a pre-launch scorecard.
- The 46% vs 19% proprietary–open-source gap shows open-source models still have clear ground to make up on long-horizon tool use.

Source: PhysicianBench: Evaluating LLM Agents in Real-World EHR Environments

04 Where Video Caching Goes Blind

Within a single frame, the cache treats the edges of fast-moving objects and near-static background identically. That is the blind spot in current step-skipping for autoregressive (AR) video generation. The root cause is chunk-level cache reuse: a whole time window decides together whether to reuse or recompute. Static backgrounds tolerate this fine; fast-moving objects accumulate visible error.

MotionCache pushes the granularity down to the pixel level. It uses frame-to-frame difference as a lightweight motion proxy: high-motion pixels get more denoising steps, while static regions reuse the cache aggressively. SkyReels-V2 sees a 6.28x speedup and MAGI-1 only 1.64x, with VBench quality essentially preserved. The nearly 4x gap between the two models means speedup is highly architecture-dependent, so transferring the method to other AR video models needs per-model evaluation.
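The per-pixel allocation idea can be sketched with NumPy. This is a minimal illustration, not MotionCache's algorithm. It assumes motion is the absolute difference between consecutive frames averaged over channels and normalized to [0, 1], that motion maps linearly to a per-pixel step budget between `min_steps` and `max_steps`, and that a pixel is recomputed while it still has budget and otherwise reuses its cached value. All function names and the linear mapping are hypothetical.

```python
import numpy as np


def motion_map(prev_frame: np.ndarray, curr_frame: np.ndarray) -> np.ndarray:
    """Per-pixel motion proxy: absolute frame difference averaged over channels,
    normalized to [0, 1]. Frames are H x W x C arrays."""
    diff = np.abs(curr_frame.astype(np.float32) - prev_frame.astype(np.float32)).mean(axis=-1)
    peak = diff.max()
    return diff / peak if peak > 0 else np.zeros_like(diff)


def step_budget(motion: np.ndarray, min_steps: int, max_steps: int) -> np.ndarray:
    """Linearly map motion in [0, 1] to an integer count of full denoising steps per pixel."""
    return np.rint(min_steps + motion * (max_steps - min_steps)).astype(np.int64)


def recompute_mask(budget: np.ndarray, step: int) -> np.ndarray:
    """True = recompute this pixel at `step`; False = reuse its cached value.
    Simplification: a pixel with budget b is recomputed on the first b steps."""
    return step < budget


if __name__ == "__main__":
    prev = np.zeros((2, 2, 3), dtype=np.uint8)
    curr = prev.copy()
    curr[0, 0] = 255  # one pixel changes between frames
    budget = step_budget(motion_map(prev, curr), min_steps=2, max_steps=10)
    print(budget)  # 10 steps for the moving pixel, 2 for the static ones
    recomputed = sum(int(recompute_mask(budget, s).sum()) for s in range(10))
    print(recomputed, "of", budget.size * 10, "pixel-steps recomputed")  # 16 of 40
```

In this toy frame only 16 of 40 pixel-steps are recomputed; the share of static content in a real video determines how much of that saving survives.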

Key takeaways:
- Chunk-level cache skipping is blind to high-motion pixels. Frame-difference-weighted pixel-level scoring is a direct fix.
- This is deployment-side optimization with no weight changes. AR video inference services can try it directly.
- Speedup varies 4x across models. Confirm the transfer effect per model before relying on the numbers.

Source: Motion-Aware Caching for Efficient Autoregressive Video Generation

05 What If Attention Is Just a Parameter-Prediction MLP?

Intuitively, attention's global modeling power comes from explicit token-to-token weighting, and that intuition is exactly why attention is assumed to need O(N²) cost. WeightFormer offers a mathematical rewrite that gives one pause: attention is equivalent to an MLP whose parameters are predicted from the input. On this view, the "global capability" comes from those predicted parameters compressing global context, not from token-wise weighting itself.

From this angle, linear-complexity designs no longer have to circle around "how to approximate softmax attention." The question becomes "how to predict good parameters from the input." The authors test several parameter-prediction strategies on vision models and show the approach works at medium scale. Whether it scales to LLM size is an open question, but the math rewrite itself already opens a new design space.
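The reframing is easiest to see in the linear-attention special case, a well-known identity rather than WeightFormer's own construction. Without softmax, the output for query q_i is Σ_j (q_i · k_j) v_j, which equals (Σ_j v_j k_jᵀ) q_i = W(X) q_i. The input sequence X "predicts" a single d × d weight matrix W(X) that is then applied like a linear layer, at O(N d²) cost instead of the O(N² d) of forming every pairwise score. The NumPy check below, with random toy sizes chosen for illustration, confirms the two orderings agree; feature maps, normalization, and the paper's actual parameter-prediction strategies are omitted.

```python
import numpy as np

rng = np.random.default_rng(0)
N, d = 6, 4  # sequence length and head dimension (toy sizes)
Q = rng.standard_normal((N, d))
K = rng.standard_normal((N, d))
V = rng.standard_normal((N, d))

# View 1: explicit token-to-token weighting (unnormalized linear attention).
scores = Q @ K.T             # N x N pairwise weights, O(N^2 d)
out_pairwise = scores @ V    # row i = sum_j (q_i . k_j) v_j

# View 2: the input predicts one d x d weight matrix, applied like an MLP layer.
W = V.T @ K                  # W = sum_j v_j k_j^T, O(N d^2)
out_predicted = Q @ W.T      # row i = W q_i

print(np.allclose(out_pairwise, out_predicted))  # True
```

Once the weights are predicted, every token pays the same O(d²) cost regardless of N, which is the property linear-time designs are after.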

Key takeaways:
- Attention can be mathematically rewritten as an MLP with input-predicted parameters. Global capability comes from parameter compression, not explicit token weighting.
- The viewpoint shift moves linearization design from "approximate attention" to "predict good parameters."
- Vision-scale validation works. LLM-scale generalization needs follow-up work.

Source: Linear-Time Global Visual Modeling without Explicit Attention

Also Worth Noting

Today's Observation

The interesting thing today isn't any single paper. It's that "long-horizon agent reliability" is being attacked from four or five different layers at once. T²PO addresses training stability: keeping multi-turn RL from collapsing. The metacognition paper names self-awareness: models don't know what they don't know. PhysicianBench and AcademiClaw redefine the task itself, showing what real long-horizon work looks like and that current models can't yet do it. Counting probes set a minimum reliability bar, and even counting turns out to be unstable. The Marginal Token Allocator reframes the evaluation paradigm: score on token economy, not text generation.

Each paper points at a different failure location. None claims a breakthrough. Stitched together, the implicit signal is this: today's agent unreliability on real long-horizon tasks isn't a single-point problem. It's a five-layer resonance across training, self-awareness, task definition, minimal testing, and evaluation framing. Patching any one layer alone won't be enough.

Concrete next steps. If your team builds a vertical agent, pick the layer closest to your business and start monitoring there. For medical or legal long-horizon tool use, the PhysicianBench approach (turning your real internal workflow into a regression set with verifiable execution traces) is directly portable. If you run multi-turn RL, add T²PO's marginal-uncertainty monitoring to your training logs; it may catch exploration collapse earlier than the reward curve does. If you build evaluators, add minimal reliability probes like the counting test to CI instead of only running large benchmarks.
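A minimal counting probe for CI might look like the sketch below. It is a generic illustration, not the probe design from the counting work mentioned above. `ask_model` stands in for whatever client your stack uses, and the prompt wording, alphabet, and lengths are hypothetical placeholders. Seeding the generator keeps the probe set identical across CI runs, so a drop in accuracy points at the model or prompt rather than at new test cases.

```python
import random
import string
from typing import Callable, List, Tuple

ALPHABET = string.ascii_lowercase[:5]  # small alphabet so letters repeat often


def make_counting_probes(n: int, length: int = 40, seed: int = 0) -> List[Tuple[str, int]]:
    """Build (prompt, expected_count) pairs; the fixed seed keeps the set identical across runs."""
    rng = random.Random(seed)
    probes = []
    for _ in range(n):
        letters = "".join(rng.choice(ALPHABET) for _ in range(length))
        target = rng.choice(ALPHABET)
        prompt = (
            f"How many times does the letter '{target}' appear in: {letters}? "
            "Answer with a number only."
        )
        probes.append((prompt, letters.count(target)))
    return probes


def run_counting_probe(ask_model: Callable[[str], str], probes: List[Tuple[str, int]]) -> float:
    """Accuracy of `ask_model` (hypothetical client callable); non-numeric replies count as wrong."""
    correct = 0
    for prompt, expected in probes:
        reply = ask_model(prompt).strip()
        if reply.isdigit() and int(reply) == expected:
            correct += 1
    return correct / len(probes)


def exact_counter(prompt: str) -> str:
    """Reference 'model' that parses the prompt and counts exactly; used to test the harness itself."""
    target = prompt.split("'")[1]
    letters = prompt.split(": ")[1].split("?")[0]
    return str(letters.count(target))


if __name__ == "__main__":
    probes = make_counting_probes(20)
    print(run_counting_probe(exact_counter, probes))      # 1.0
    print(run_counting_probe(lambda p: "seven", probes))  # 0.0
```

In a CI job, a single line such as `assert run_counting_probe(my_client, probes) >= MIN_ACCURACY` (both names hypothetical) turns the probe into a gate.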